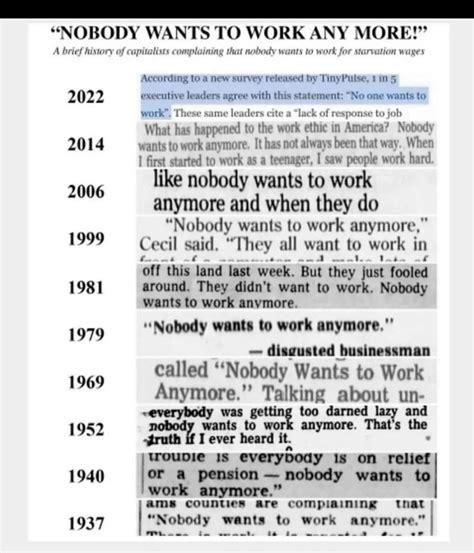

Search results for tag #ai

Graphic design platform Canva has a number of AI tools available to users, but it turns out they have some real strong editorial opinions—including removing the word “Palestine” from designs. The issue was spotted by X user @ros_ie9, who shared an image showing Canva’s “Magic Layers” feature changing the text of a design from “Cats for Palestine” to “Cats for Ukraine.”

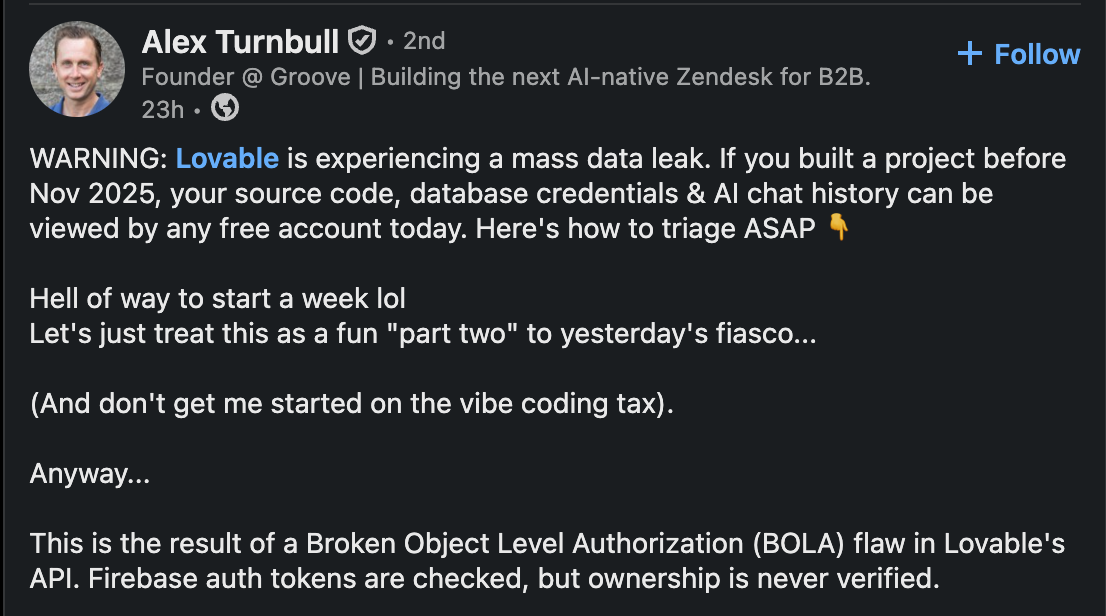

RE: https://cyberplace.social/@GossiTheDog/116496411504697248

I HATE TO BE THAT GUY but even as this paints the security in a bad light… do we know if this wasn't aislopped?

we don’t.

and that's the point of #AI : it’s a complete rejection of The Social Contract on how we agree on the truth.

we need the #infosec community to help us create new, defensive fact checking protocols. the oligarchy wants to own reality, and define the truth. pushback on giving them the benefit of the doubt.

Y’ALL DID AND WE LOST THE RIGHT TO ABORTIONS, AND VOTING RIGHTS

If you're wondering how the gunman got past security at the Trump dinner event - there's a video in this article, he ran through the security checkpoint.

It looks like an officer accidentally shoots another officer as he runs past them.

Also, a police dog spots him before it all happens in the video - but the police officer calls the dog back, not realising anything was wrong.

https://www.bbc.co.uk/news/articles/c4g7rmrlm17o

Fedi Hive Mind - AI Free Label

OK, we have a winner. For the last few days we've been discussing a label for content and code attested to be free of generative AI (see QP in second reply). Yesterday's poll showed a clear preference for the simplest of the four options.

Seems like what we need next is someone with actual graphics ability to take this rough concept and create usable graphics. If you are interested please read on to the first reply.

Chinese companies cannot legally fire employees simply to replace them with cost-saving artificial intelligence, courts in the country have ruled, setting a significant precedent for labor rights as automation sweeps the tech sector. 👏

Another day, another common China W.

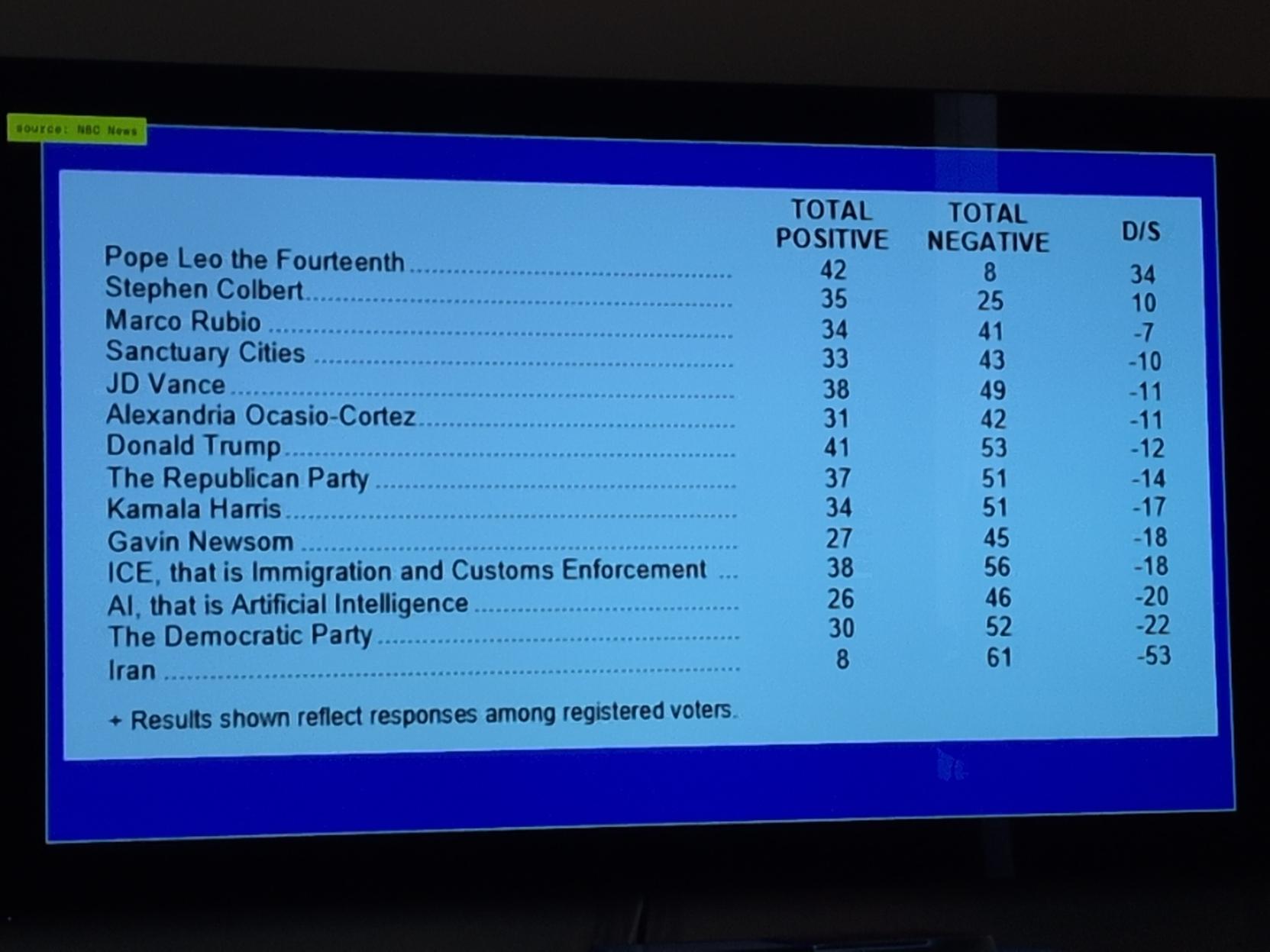

»Like with many tech trends before it, it’s no surprise that young people are among the biggest adopters of AI #chatbot tools. But contrary to the tales spun by tech companies like #OpenAI and #Google, polling data shows that Gen Z students and workers are a big part of the wider cultural backlash against AI.

And even as they utilize these tools, vast swaths of young people are deeply acrimonious and even resentful of the AI-centric future that many feel is being forced on them.«

https://www.theverge.com/ai-artificial-intelligence/920401/gen-z-ai

RE: https://mastodon.world/@YakyuNightOwl/116495765027693991

🎯 #AI is a recursive, pervasive reflection of perversion

The more young people use AI, the more they hate it

April 30, 2026 - Janus Rose

Caught between fear of losing their jobs and social stigma, Gen Z's opinions of AI are hitting historic lows.

1/

https://www.theverge.com/ai-artificial-intelligence/920401/gen-z-ai

#AI #LLM #GenerativeAI #ChatGPT #SamAltman #ChatBot #AIResearch #SiliconValley #NoAI #Youth #YoungPeople #GenZ #Millennials #Disinformation #Skepticism #Prison #Anxiety #Social #Psychiatry #Environment #DataCenters #Ethics #JobMarket #Jobs #Capitalism #University #Education

uspol,abuse [SENSITIVE CONTENT]

Minnesota House To Ban AI-Generated Nudes, But One Republican Voted No

Minnesota House passes HF1606, a $500,000 civil penalty bill targeting AI nudification tools, with one Republican no vote.

Archive: ia: https://s.faithcollapsing.com/sg8w7

#sa #csa #ai #uspol

https://thedeepdive.ca/minnesota-ai-nudification-ban-hf1606/

Built an AI agent harness on OpenBSD 7.8, as a test and - because why not(?)

It's 198 agents. 198 UNIX users. One kernel.

Each job runs through a setuid C wrapper:

chroot(2) → unveil(2) → pledge(2) → execve(2)

PF handles per-department egress. Every syscall is logged.

Idle agents cost zero RAM. They're just directory entries until the executor calls them up. No containers. No VMs. No orchestrator bloat.

Just OpenBSD being exactly what it was built to be. ❤️

More people should know this OS is the ultimate AI harness. 🐡

#OpenBSD #pledge #unveil #pf #BSD #AI #agenticAI

Every now and then someone brings up #EffectiveAltruism, #TESCREAL, #RokosBasilisk, #Rationalism, or some other #Musk related nonsense. I ridicule it, or laugh, and move on. The whole evil god of Roko's Basilisk is so silly it doesn't feel worth writing about. But people started a whole cult over it and killed a bunch of people.

Since then I've been meaning to actually spend time tearing it down. So I think it's time to go kill a god. Fortunately it involves making fun of Elon Musk specifically and all the #AI-pilled #TechBros more generally, so that's nice I guess.

Also, I make the argument that we're all in a simulation that only exists to torture Elon Musk.

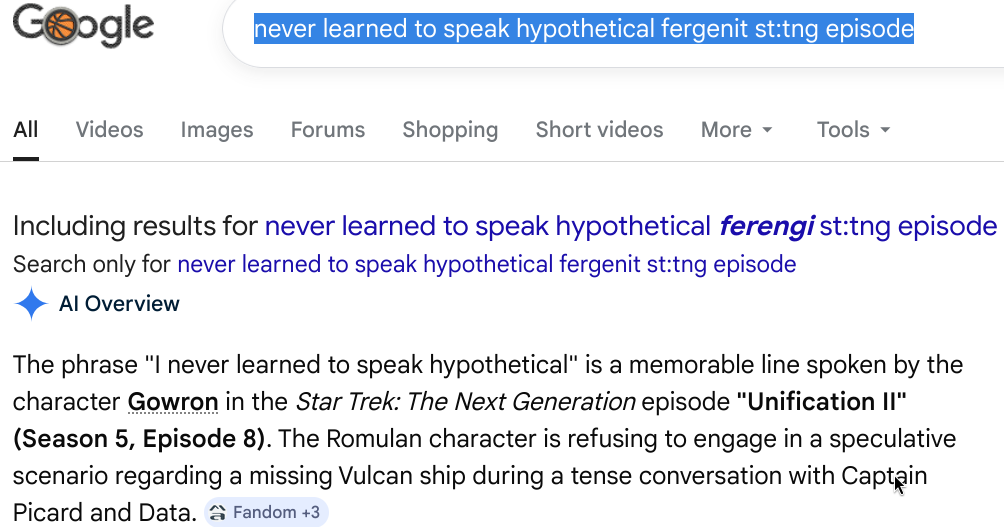

We went from precise web search with boolean operators to "natural language models" of AI search.

You can tell neurodivergent, Autistic, ADHD, AuDHD, etc. folks created the early internet...

...and you can tell that neurotypical folks are now leading the current overlays of the internet.

We used to have very precise search mechanisms. Specific words found in web pages with boolean operators (AND, OR, NOT, etc) to filter out the web pages that contained specific words and did not contain other words.
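As a rough illustration of the boolean filtering described above, here is a minimal Python sketch. The `pages` data and the `matches` helper are made up for this example; it is not how any particular search engine was implemented.

```python
def tokenize(text):
    """Lowercase a page and split it into a set of words."""
    return set(text.lower().split())


def matches(text, all_of=(), any_of=(), none_of=()):
    """Return True if the page satisfies the boolean query.

    all_of  -> every word must appear (AND)
    any_of  -> at least one word must appear (OR); ignored when empty
    none_of -> no word may appear (NOT)
    """
    words = tokenize(text)
    if not all(w.lower() in words for w in all_of):
        return False
    if any_of and not any(w.lower() in words for w in any_of):
        return False
    return not any(w.lower() in words for w in none_of)


# Hypothetical pages, purely for illustration.
pages = {
    "a.html": "openbsd pledge and unveil tutorial",
    "b.html": "linux containers tutorial",
    "c.html": "openbsd pf firewall rules",
}

# Query: openbsd AND (pledge OR pf) NOT tutorial
hits = [
    name
    for name, text in pages.items()
    if matches(text, all_of=["openbsd"], any_of=["pledge", "pf"], none_of=["tutorial"])
]
print(hits)
```

The same words always give the same result set, which is the precision the post is missing in natural-language search.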

Now, we search for web sites (or don't even search for web sites, yay abstraction layers that separate us from actual raw information) using "natural language" to try and coax out info.

Have you ever been frustrated when you use very precise and direct language to communicate a specific idea with someone who then takes those specific words and adds obscure meaning and connotations and personal fears and bias to what you said... thus completely misunderstanding you... only to then try and clarify what you said with more precise language only to have that further degrade the conversation?!

Yeah. That's the internet with AI "search" now.

They took something that worked precisely and directly and muddied it.

We've introduced the "double-empathy problem" to web search.

I'm noticing that everyone in my circles, family, and especially work that are neurotypical LOVE LOVE LOVE the new AI search mechanisms. They'll tell me exactly what the answer they received was - regardless of whether it's right or has multiple possible and conflicting answers. They just repeat what the AI said like it was the Gospel Truth.

And they love talking to it like it was a "real person."

It certainly takes my search parameters and adds its own interpretation to which I have to clarify and correct which is then misinterpreted further...

I haven't tried vibe coding, but I can only imagine the horror.

Can you imagine vibe pentesting with Claude / Mythos?!?!?!

You know how neurodivergent folks gravitated towards IT because it was precise?

Yeah, that's gone now.

#AI always has many human hands behind the desks. If your data is not local, it is broadcast everywhere and amplified:

Meta in row after workers who say they saw smart glasses users having sex lose jobs

"Meta is under pressure to explain why it cancelled a major contract with a company it was using to train AI, shortly after some of its Kenya-based workers alleged they had to view graphic content captured by Meta smart glasses."

#AI #GenAI #GenerativeAI #DataCenters #NoAI #NoDataCenters #Jacobin #socialism

#Canonical is adding #AI features to #Ubuntu soon, but says users can remove any of the ones they don’t want.

https://www.theverge.com/tech/920723/linux-ubuntu-ai-features-ai-kill-switch

APPLE DAILY: IOS 27 BRINGS SIRI CAMERA, IPHONE PRICE SHOCK IN 2027, AND APPLE DEBATES MAGSAFE'S FUTURE

#apple #appledaily #ios27 #siri #iphone #magsafe #appleintelligence #iphoneleaks #iosupdate #technews #applenews #visualintelligence #iphone18 #smartphone #ai #innovation #wirelesscharging #technology #applenewsde #futuretech

I go to work to remind myself how astonishingly bad proprietary software has become. I believe it can not get worse. Oh no. It does get worse. They now have #AI to make the impossible happen. Apparently, people pay money for this. I do not know why.

Copy Fail: Every #Linux distro from 2017 to 2026 is vulnerable. Gives a root shell.

Stuff like this makes me upset about current tech. It would be better if OS codebases were smaller. They're unmanageably large nowadays. #digitalMinimalism #KeepItSimpleAndStupid

This #vuln was surfaced with #AI , in reportedly about *an hour of scanning*! https://xint.io #XInt

I said it before but:

I really believe that with the rise of #AI we need dorky people more than ever. We need niche special interests. We need specialized academic fields.

We need knowledge like that of the person on Insta who keeps pointing out that AI generated images of Cambrian fossils are clearly fake, because the crustacean shell has the wrong number of little bumps.

We need people like the witchy person who likes fiber arts so much they learned how to shear sheep and process wool themselves.

The new work force takes all our town's electricity, all our town's water, all our jobs, and never votes.

It stole all our art, our books and devices and reports on us to people who take our jobs, our wages, our pensions and our democracy.

AI isn't workers, AI is soldiers.

RE: https://someone.elses.computer/@mikarv/116420205360531993

AI Cybersecurity After Mythos: The Jagged Frontier – Stanislav Fort, AISLE

<https://aisle.com/blog/ai-cybersecurity-after-mythos-the-jagged-frontier>

– via <https://www.reddit.com/r/freebsd/comments/1svvco2/comment/oid4xzb/?context=1> @BigSneakyDuck.

Three FreeBSD CVEs credited to Joshua Rogers of AISLE Research Team: <https://mastodon.bsd.cafe/@grahamperrin/116491779145092262>

@btschumy surprisingly, I can't find a toot about this (in 2024), which opened the book:

Statement on AI Risk

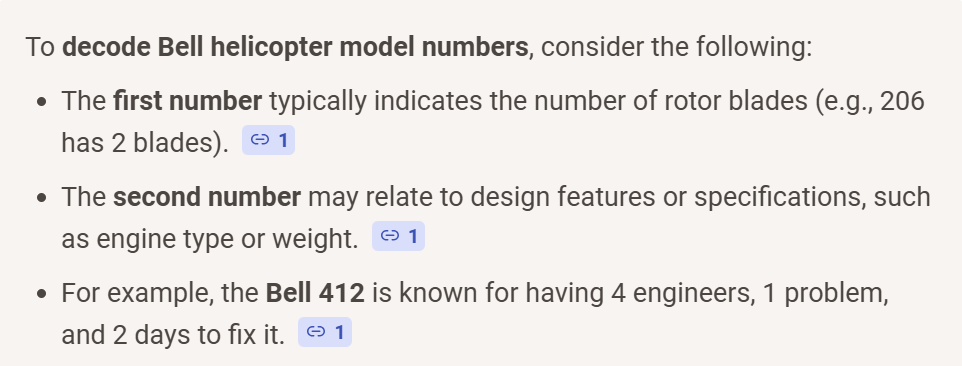

AI search summary, sent to me by my wife today. She was looking up guides for reading Bell helicopter model numbers.

boofuckinghoo 🙄

Elon Musk Says Sam Altman Tricked Him Into Funding OpenAI | KQED

https://www.kqed.org/news/12081798/elon-musk-says-sam-altman-tricked-him-into-funding-openai

Say hello to AI surveillance! 🫣 #Meta will start tracking its employees for AI training.

In the US, the Tech Giant will track employees' clicks, typing, & navigation with the aim of building #AI that can do routine tasks autonomously.

For us, this sounds like a dystopian workplace!

What do you think about this announcement & the future of AI in the workplace?

Find out more here 👉 https://tuta.com/blog/meta-tracks-employees

@jwildeboer I was genuinely wondering about this, and was about to ask the question about AI generated code and copyright. This was a great in-depth article.

It makes me wonder if these big companies like #Google are using so much #AI code:

https://www.theverge.com/tech/917163/google-says-75-percent-of-all-its-new-code-is-ai-generated

Maybe they will not be able to prevent us from jailbreaking or modifying the code on our devices! #enshittification

@maxleibman New #AI models are virtual brains: they get born, learn through training and eventually will be replaced by a better model. This new world of #AI that is evolving right now will follow Darwinian patterns. Humans have arms and legs, #AI models have embedded systems and mechanical parts connected. Their ocean is what the sensors they are connected to tell them. This is how I look at it.

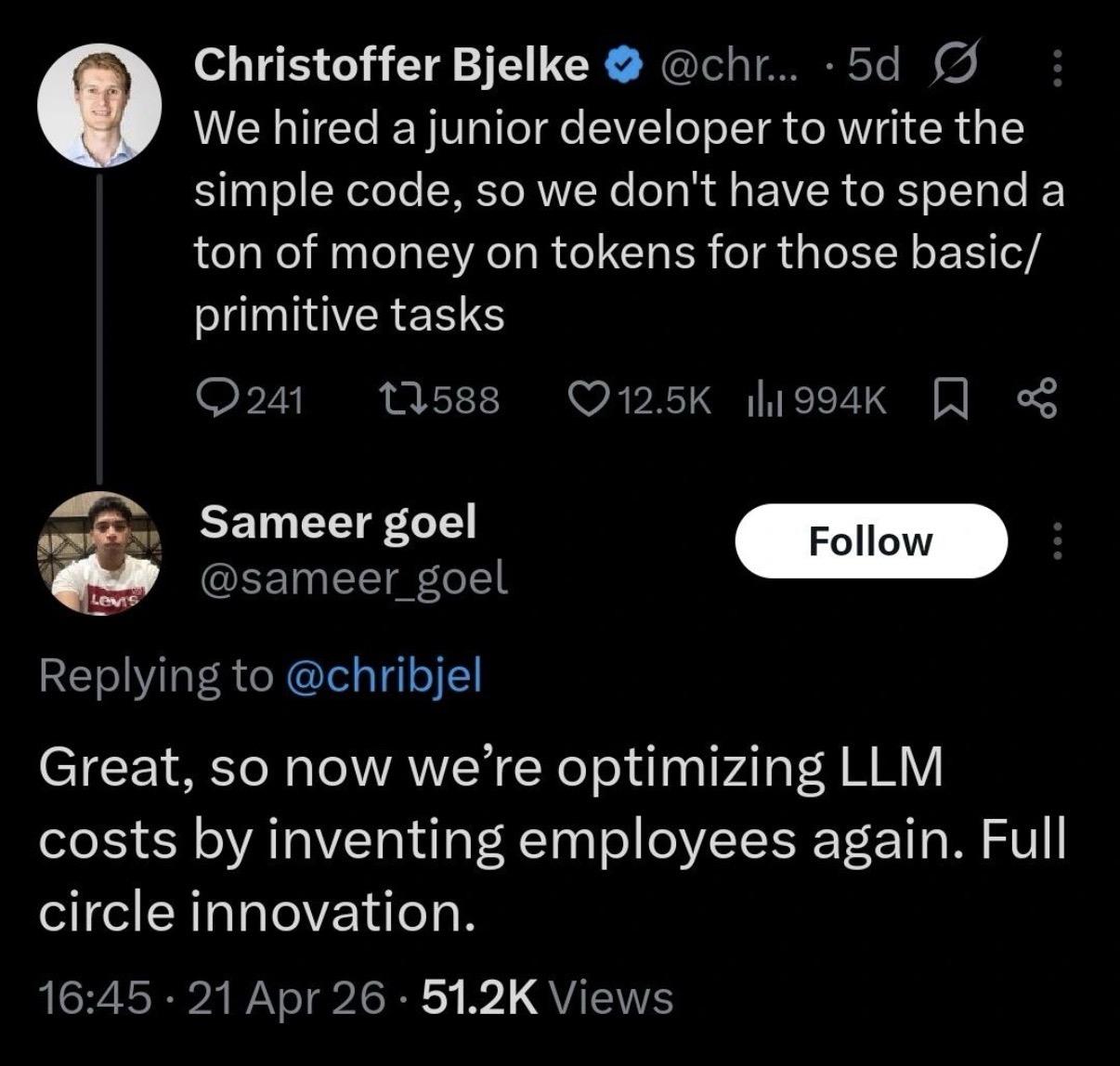

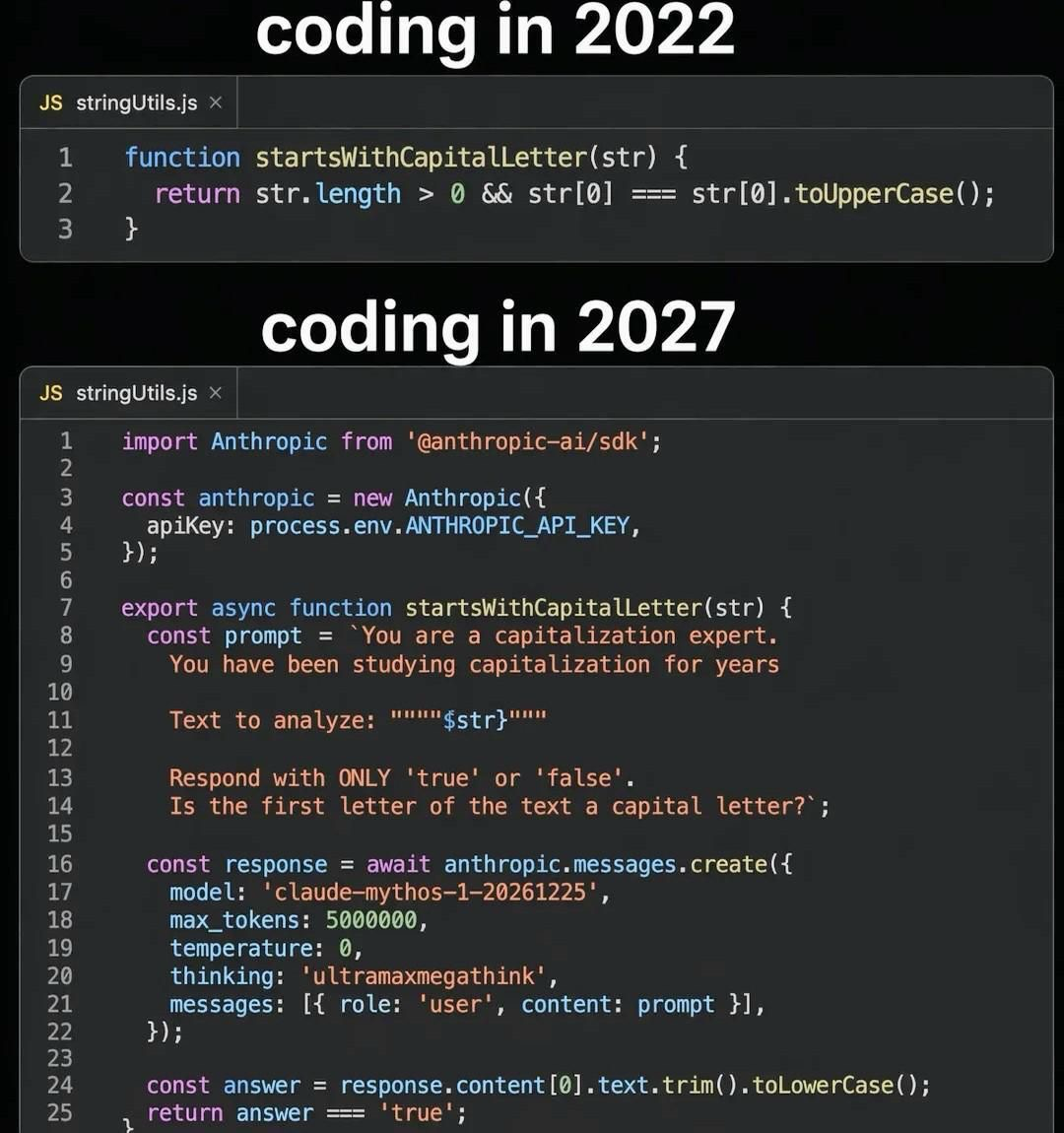

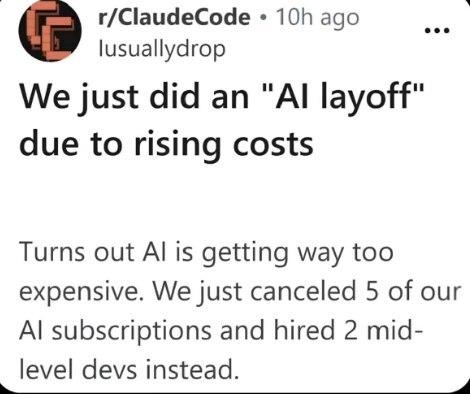

‘The cost of compute is far beyond the costs of the employees’: Nvidia exec says right now AI is more expensive than paying human workers

https://fortune.com/2026/04/28/nvidia-executive-cost-of-ai-is-greater-than-cost-of-employees/

#ai #llm #aibubble #labor #labour #swe #claude #chatgpt #anthropic #openai #noai #stopai #fuckai

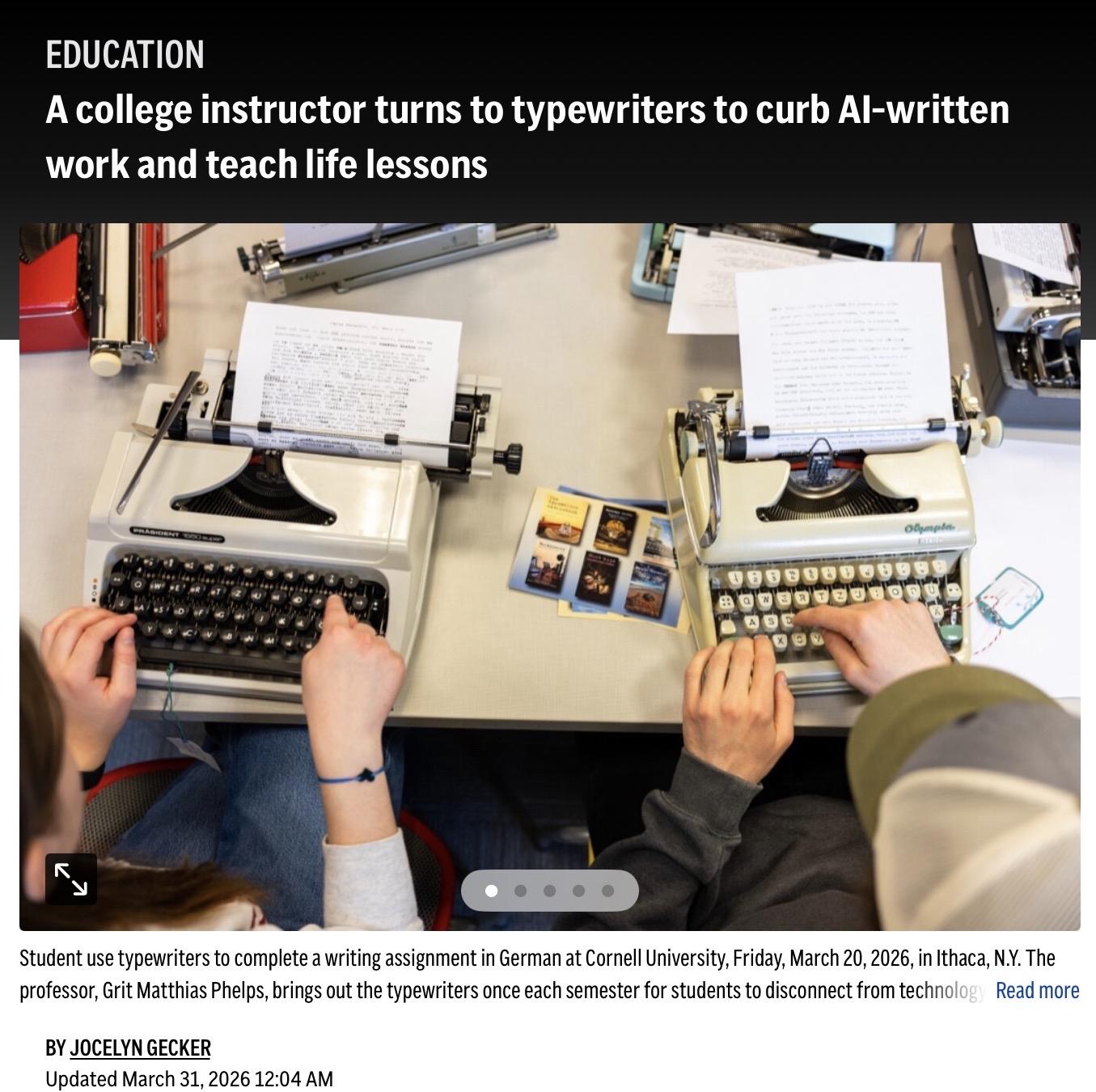

A college instructor turns to typewriters to curb AI-written work and teach life lessons (Associated Press, 31 March 2026)

https://apnews.com/article/typewriter-ai-cheating-chatgpt-cornell-ce10e1ca0f10c96f79b7d988bb56448b

More curated news on:

https://news.wesfryer.com

#AI #edtechSR #MediaLit #MediaLiteracy #typewriter #ethics #cheating

"These workers are required to stare at horrific content for many hours straight with few mental health resources, are largely managed by opaque algorithms, and, crucially, are the workers powering the runaway valuations of some of the richest and most powerful companies in the world."

Jason Koebler for @404mediaco:

https://www.404media.co/ai-is-african-intelligence-the-workers-who-train-ai-are-fighting-back/

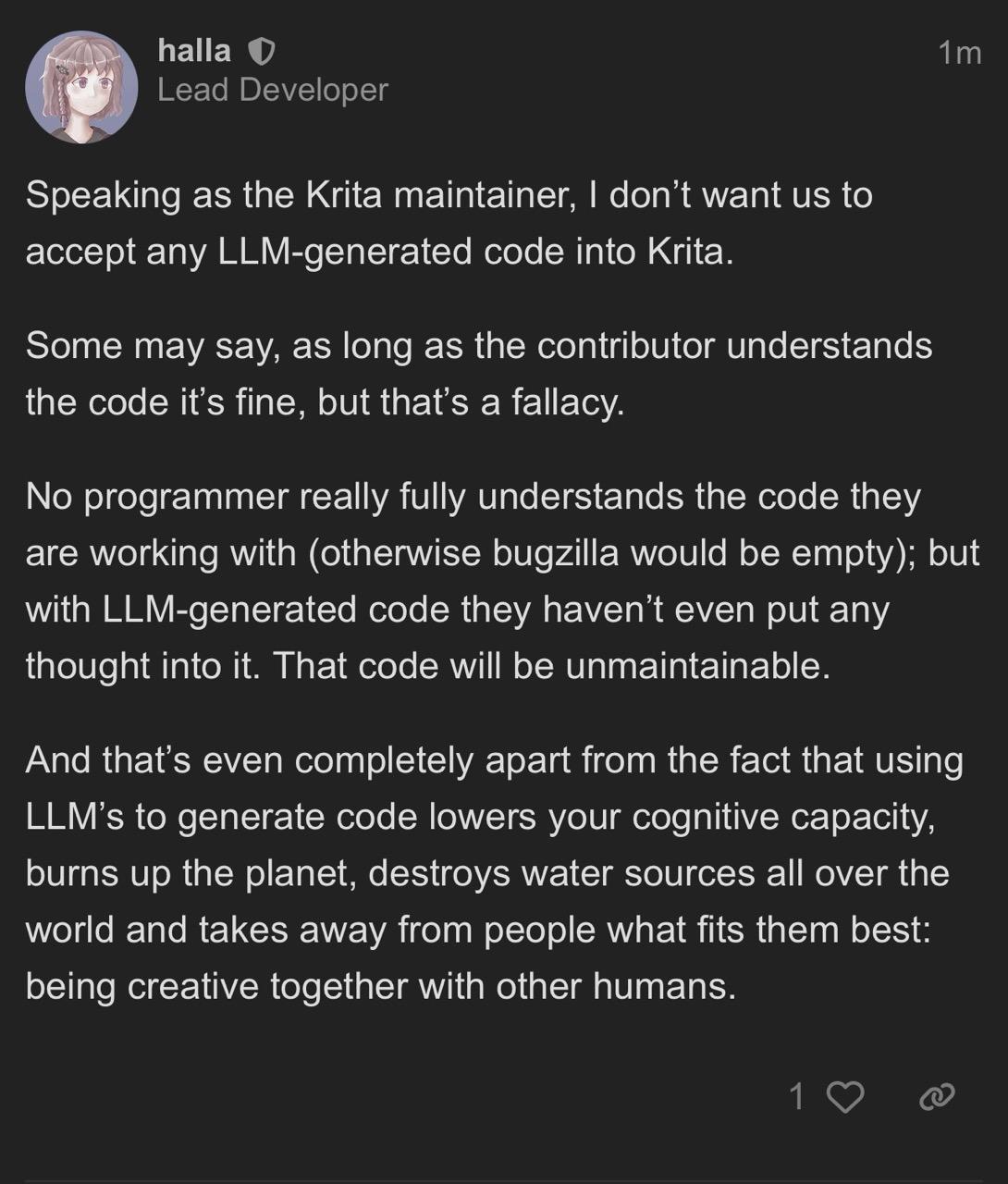

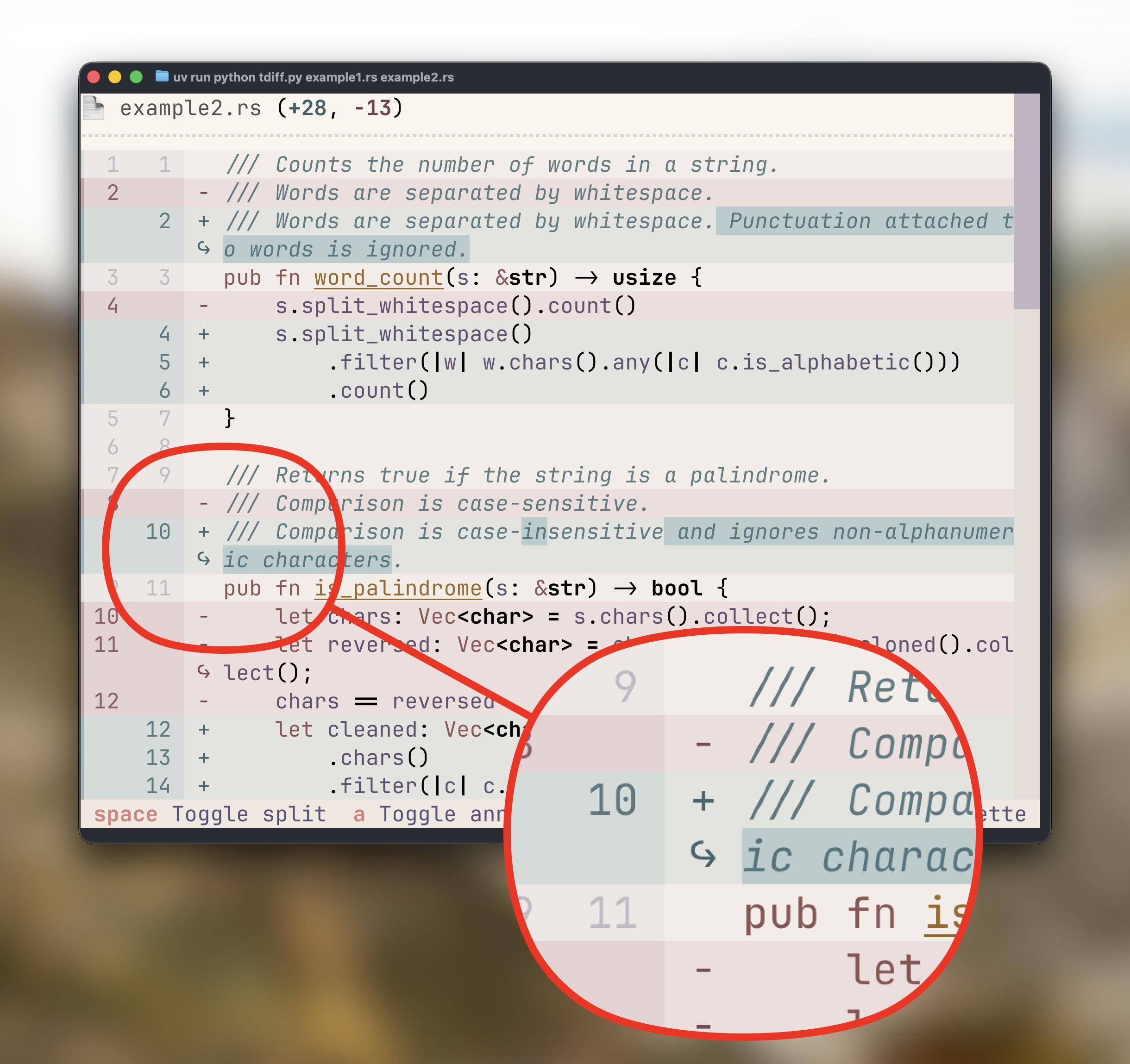

This is one for all the open source devs struggling to explain why LLMs have been a pox on all our houses: https://kristoff.it/blog/contributor-poker-and-ai/

‘The cost of compute is far beyond the costs of the employees’: Nvidia exec says right now AI is more expensive than paying human workers | Fortune

https://fortune.com/2026/04/28/nvidia-executive-cost-of-ai-is-greater-than-cost-of-employees/

> Big Tech has announced $740 billion in capex this year, but AI has yet to show evidence of widespread increased productivity.

Do you remember that time we all had a good laugh in 2012 when that Mayan prophecy thing about the end of the world was coming up? Haha, we said.

In 2026, mass extinction is a business strategy 🤷

This toot has been about @xriskology (Emile P. Torres') short and illuminating article in truthdig recently about how S.Altman really (really) believes that we either "merge" with chatbot software, or go extinct.

So, go extinct, or go extinct.

https://www.truthdig.com/articles/sam-altmans-dangerous-singularity-delusions/

#AI #Extinction #Apocalypse #Grift #GriftersGonnaGrift #Cult #TESCREAL

Anybody else getting daily spam phone calls from "Jeff" at #Anomity, each one from a different phone number?

They finally pissed me off enough that I reamed them out on #LinkedIn (https://www.linkedin.com/posts/share-7455310831493423104-ZjfJ). Not that I expect it to do any good; a company that resorts to making sales calls from spoofed phone numbers isn't going to stop just because somebody asks them to.

(And I suspect "Jeff" is AI, not a real person.)

#AnomityAI #spam #AI #infosec

Ubuntu's "AI Kill Switch" Is Achieved By Removing Snaps, Initially Opt-In - Phoronix

The Race Is on to Keep #AI #Agents From Running Wild With Your #CreditCards

#AIagents may soon be buying your stuff for you. The #FIDO Alliance has teamed up with #Google and #Mastercard to try to ensure that #shopping in the near future isn't a complete disaster.

#security

Chris Short's excellent DevOps'ish newsletter number 306 this week leads me to a couple of companies buying failed startups for their Jira and Slack instances for AI training. Forbes article via archive.is: https://archive.is/A0KWF

cc @ChrisShort

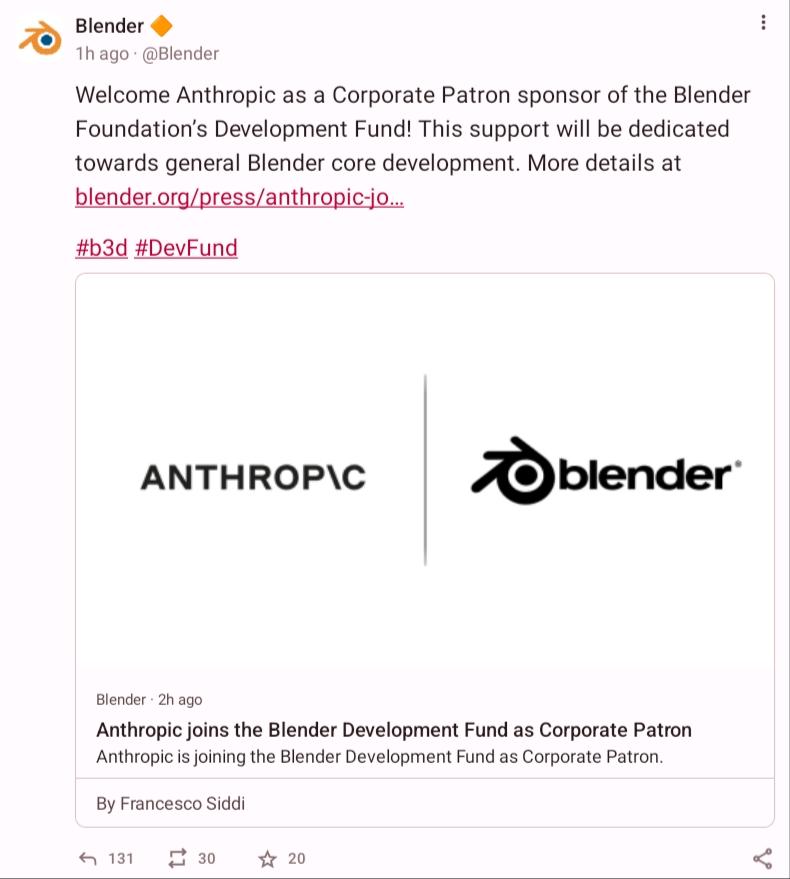

#blender #3dModeling #AI #GenAI #GenerativeAI #AgenticAI #NoAI #AntiAI #Claude #ClaudeCode

The Race Is on to Keep #AI Agents From Running Wild With Your Credit Cards

#AgenticAI #cybersecurity #shopping #finance #Google #Mastercard #FIDO

Proton outlined its 2026 roadmap with updates across Mail, VPN, Drive, and Pass, adding inbox categories, faster file transfers, and improved autofill 🔐

Planned changes include mobile content search, a rewritten Calendar, Linux VPN upgrades, shared Drive features, and expanded encrypted collaboration tools ⚙️

🔗 https://proton.me/blog/2026-spring-summer-roadmaps

#TechNews #Proton #Privacy #ProtonMail #ProtonVPN #ProtonDrive #ProtonPass #Encryption #FOSS #OpenSource #Cybersecurity #Cloud #Security #AI

Oh, so you are a meat eater but yet pontificate with all the other cool kids about #Ai use?

One burger patty uses more water than 30,000 AI queries.

Your yearly beef habit: 1,200 kg of emissions, 300,000 litres of water.

Your yearly AI habit: 0.5 kg of emissions, 90 litres of water.

Beef is 🚨🚨🚨2,400×🚨🚨🚨 worse for the climate.

And that's being generous, cattle emit methane, which hits 80× harder than CO₂ in the short term.
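The ratios quoted above can be re-derived with a few lines of Python. This only redoes the arithmetic on the yearly figures given in the post; it does not verify the figures themselves.

```python
# Yearly figures as stated in the post (not independently verified).
beef_emissions_kg = 1200
beef_water_l = 300_000
ai_emissions_kg = 0.5
ai_water_l = 90

# How many times larger the beef figure is than the AI figure.
emissions_ratio = beef_emissions_kg / ai_emissions_kg
water_ratio = beef_water_l / ai_water_l

print(f"emissions: {emissions_ratio:.0f}x, water: {water_ratio:.0f}x")
```

On those inputs the emissions ratio is the 2,400× in the post, and the water ratio comes out at roughly 3,333×.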

Worried about AI's environmental impact?

Swap one beef meal a week first.

That single change outweighs deleting your ChatGPT account by a factor of hundreds.

AI is a grid problem. Beef is a 👉YOU👈 problem.

#AiSlop #antiai #butlerianjihad #environment #vegetarian #vegan

APPLE DAILY: IPHONE 20 WITH LIQUID GLASS DISPLAY, OPENAI PLANS AI PHONE, AND IOS 27 GETS NEW PHOTO AI

#apple #appledaily #iphone20 #liquidglass #ios27 #openai #appleintelligence #ki #iphone #appleleaks #technews #appleupdate #smartphone #fotoki #ai #innovation #technologie #appledeutschland #futuretech #gadgets #news

#AI's #Economics Don't Make Sense

https://www.wheresyoured.at/ais-economics-dont-make-sense-ad-free/

> The Broken Economics of a 100MW #Data center — $2.55 An Hour, 16% Gross Margin With 100% Tenancy, Unprofitable Because of #Debt. That’s the starting cost for a 100 megawatt #data_center. A 100MW data center will likely only have 85MW of actual stuff it can bill for, and based on discussions with sources familiar with hyperscaler billing, they can expect to make around $12.5 million per megawatt, or around…

#WhatsApp : "this evil #government wants to ban our private and secure service!"

https://x.com/TheGreeneBJ/status/2021951611036459405

#facebook #meta #instagram #surveillance #warcrimes #IsraelWarCrimes #BoardofPeace #BoardOfPOS #Gaza #GazaGenocide #genocide #ai #technology #NEVERAGAIN #tech @democracy @politics @socialmedia #education #russia @technology #socialmedia #opensource #Palestine #Iran #markzuckerberg #antiZionism #JewishSupremacy #usa #cdnpoli #uspol #geopolitics #China #tiktok #protest #bds

📣 Please take note ...

#AI #noAI #author #art #writing #creativity #joannamaciejewska #technology #laundry #dishes

https://bbb-new.sfconservancy.org/rooms/welcome-llm-gen-ai-users-to-foss/join

BREAKING: #Canonical says it's going all in on #AI in #Ubuntu #Linux -- time to look for alternatives

They wrote a long post explaining their reasoning and plans. Lots and lots of words. Perhaps written by an AI. I wonder.

Still AI Slop.

LMAO

#Mastodon #DotSocial doesn’t have engagement algorithms so the concept of “ratios” doesn’t really exist here, but this toot by @Blender is the first true pile-on I’ve seen on here:

https://mastodon.social/@Blender/116482997785333001

131 comments

33 quote-posts

yet

30 boosts

20 faves

this is amazing and well deserved.

jackasses.

Fedi Hive Mind - What should software free of AI be labeled?

A decision by @Blender to take money from Anthropic, and a policy by @redox to ban all LLM generated code, spotlight the question.

Other industries have used badging like Sugar Free, Low Tar, Alcohol Free, 100% Cotton, and Organic.

What's a good label for code that is certified free of any LLM generated code?

So far suggestions include: AI Free, Organic Code, Not By AI, LLM Free, 100% Human, No LLM, No AI

Thoughts? Ideas?

Locked, stocked, and losing budget: #AI vendor lock-in bites back https://theregister.com/2026/04/28/locked_stocked_and_losing_budget/ via @theregister & @sjvn

Execs in the C-suite thought they could swap models in a week. It wasn't the LLMs that were hallucinating; it was them.

Should Fediverse servers have a policy against the posting of LLM generated AI slop?

#AI #slop #LLM #LLMs #MastoAdmin #FediAdmin #ArtificialIntelligence #poll

| Option | Votes |
| --- | --- |
| Ban LLM slop | 14 |
| Explicitly allow LLM slop | 1 |
| Have no policy | 5 |

Closes in 4:09:25:28

I know @tonroosendaal is no longer the head of Blender. But I expect he has something to say against this.

I never expected that a LIE like this:

"Anthropic is an AI research and development company that creates reliable, interpretable, and steerable AI systems."

would be published on Blender's website.

Long thread about Claude source code:

https://neuromatch.social/@jonny/116324676116121930

AI DOESN'T work. It is a con machine that enlarges inequality in the world. It's an earthquake affecting the lives of thousands of workers forced to use these stochastic parrots to produce code that doesn't work. Even their own code is subpar. Workers are fired after training this failed machine.

The use of natural resources for datacenters in strained areas, and the rise in electricity bills so the datacenters can run these monsters, are well reported, along with the ill effects on the health of residents near datacenters.

Not to mention Anthropic is on trial for STEALING materials to train Claude.

EDIT: Added link with current information about the Anthropic case.

Anthropic is raising its token prices.

Idiotic employees are paying for these tokens out of their own pockets when the ones bought by their employers (companies that spent thousands of euros on this fucked technology) run out, so they can keep running prompts and not lose their jobs.

Blender users must reject this. The whole planet is at risk from the misdemeanors and illegalities of these AI companies.

This is like accepting money from the nazi party, the mafia, Russia, Donald Trump and Israel, all combined. 🤬🤬🤬

We cost less. 😉

And we reproduce cheaply and... vigorously. 🤣

https://futurism.com/artificial-intelligence/bosses-more-money-ai-agents-human-salary

#OpenAI could be making a phone with #AI agents replacing #apps

https://techcrunch.com/2026/04/27/openai-could-be-making-a-phone-with-ai-agents-replacing-apps/

"Boo hoo, I opened up all my databases to a probabilistic gizmo that does nothing but roll dice, and I lost."

The AI agent that destroyed a production database in 9 seconds.

⤵️

https://intelligence-artificielle.developpez.com/actu/382588/L-agent-IA-qui-a-detruit-une-base-de-donnees-de-production-en-9-secondes-et-redige-lui-meme-ses-aveux-revele-les-failles-systemiques-de-Cursor-Railway-et-de-toute-une-industrie/

The most heavily capitalized industry in the entire history of capitalism is a sham. We're in for some good laughs.

Except when these assholes make us foot the bill for their stupidity, like in 2008.

#AI threats in the wild: The current state of prompt injections on the web

https://security.googleblog.com/2026/04/ai-threats-in-wild-current-state-of.html

#Manitoba to ban #SocialMedia, #AI chatbots for youth, premier says

https://www.cbc.ca/news/canada/manitoba/manitoba-social-media-age-restrictions-9.7177470

#privacy #cybersecurity #Canada #AgeVerification #IdentityVerification

Canonical clarify their AI plans for Ubuntu Linux - opt-in and easy to remove (fixed the title, third time's the charm eh)

I was promised an exciting #dystopia where a brave human #resistance fights against killer #robots

But what I got was #aBoringDystopia where #socialMedia #influencers yell at polite robots asking us to help them replace us

credit: i *think* it's the Scientology auditor, Streets LA (not sure). the scene is in Los Angeles

Joseph Stiglitz said: "Inequality today is worse than what the United States experienced during the Gilded Age at the end of the 19th century." He mentioned four reforms that will improve life for most Americans:

https://english.elpais.com/economy-and-business/2026-04-26/joseph-stiglitz-nobel-prize-winner-in-economics-the-ideology-of-billionaires-currently-has-a-mind-boggling-degree-of-selfishness.html

#MoneyInPolitics #media #SocialMedia #AI #MediaEcosystems copy: @renewedresistance #politics

MissConstrue [She/Her (Crone Extraordinaire)]

@MissConstrue@mefi.social

https://www.thatprivacyguy.com/blog/anthropic-spyware

Security researcher Alexander Hanff wrote an article titled Anthropic secretly installs spyware when you install Claude Desktop. Anthropic has not denied the report, as of time of post.

TLDR: If a user installs Claude Desktop on a Mac (pc test results tba), it installs a backdoor into every browser, even those not installed. By testing on a clean machine, Hanff discovered that installing Claude Desktop for macOS drops a Native Messaging host manifest into multiple Chromium profiles (Chrome, Edge, Brave, Arc, Vivaldi, Opera, Chromium), including for browsers that are not actually installed yet.

How bad is it? Well...that depends. What it does is create a very wide attack vector, especially for prompt injection. That it is done invisibly, without telling the user, and making it difficult to remove, is certainly problematic.
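For anyone who wants to look at their own machine, here is a minimal Python sketch that lists Native Messaging host manifests in a set of directories. The directory list is an assumption: the one path shown is the per-user location Chrome uses on macOS, and the other Chromium-based browsers keep theirs elsewhere, so add those yourself. The script only lists JSON manifests; it does not decide which ones came from Claude Desktop.

```python
import json
from pathlib import Path


def list_native_hosts(directories):
    """Return (manifest_path, host_name, binary_path) for each JSON manifest found."""
    found = []
    for directory in directories:
        directory = Path(directory).expanduser()
        if not directory.is_dir():
            continue  # browser not installed, or no hosts registered
        for manifest in sorted(directory.glob("*.json")):
            try:
                data = json.loads(manifest.read_text(encoding="utf-8"))
            except (OSError, ValueError):
                continue  # unreadable or malformed manifest, skip it
            if not isinstance(data, dict):
                continue
            found.append((str(manifest), data.get("name"), data.get("path")))
    return found


# Assumed location for Chrome on macOS; extend with other browsers' directories.
CANDIDATE_DIRS = [
    "~/Library/Application Support/Google/Chrome/NativeMessagingHosts",
]

if __name__ == "__main__":
    for manifest, name, binary in list_native_hosts(CANDIDATE_DIRS):
        print(manifest, name, binary)
```

Each manifest's `path` field points at the binary the browser would launch, which is the part worth checking by hand.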

I dunno man, maybe don’t use the planet destroying tulip craze?

Interesting. Using Agentic AI to avoid EDR detection while functioning as a malicious implant. Fascinating read since this is literally and figuratively hacking the system.

https://www.beyondtrust.com/blog/entry/claude-control-agentic-c2-computer-use-agent

My university system (SUNY) has mandated that all gen ed courses with quantitative or information literacy components include a bunch of stuff about #AI. The good news is that we are not mandated to cheerlead AI or teach people how to use it, but the latter is almost mandated by the language of what we have to address. We also have to have specific assignments about AI.

My assignments will not leave students with a false sense that AI is benign or ethically OK. I expect some pushback, but this is one of the things I'm willing to get ugly about. Plenty of faculty have jumped on board the university/system administration's many other messages about teaching "ethical use" of #AI, integrating it into all classes to teach students how to use it "effectively" and "non-harmfully," etc. You can probably guess, from my tone, what I think of this and how many swear words my thoughts might contain.

I'm not required to incorporate content about other technologies like cryptocurrency, NFTs, crowdsourced knowledge bases, goFundMe, or (heaven forbid) open-source software. Nope, just AI. This feels like the corporate managers of #US #highered being as corporate as their sad little suit-wearing, jargon-spewing selves can possibly be.

I really hope we come out of this someday understanding just how anti-labor, anti-environment, anti-peace, anti-freedom, and basically anti-human this top-down push for #genAI is. Fuck everything about this.

Frankenstein Was a Warning, Not a Blueprint for AI

Why are we trying to give Clippy anxiety?

Archive: ia: https://s.faithcollapsing.com/q65ja

#ethics #philosophy #technology #ai #ai-ml #consciousness #existential-crisis

https://ideatrash.net/2026/04/frankenstein-was-a-warning-not-a-blueprint-for-ai.html

🤖 I measured the real token cost of MCP servers vs CLI for AI coding agents — and the numbers are wild.

In a 20-prompt dev session with just 2 GitHub calls, Native MCP costs 61,654 tokens vs 448 for raw CLI. That's a 137× overhead, 99% of which is pure schema waste.

The answer isn't "MCP or CLI"; it's about your G/N ratio (service calls ÷ total prompts):

🟢 >40% → Native MCP

🟡 5–40% → Gateway MCP

🔴 <5% → CLI + on-demand skill file
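The tiers above can be sketched as a small Python helper. The function names are made up for illustration, and how the exact 5% and 40% boundaries are assigned is an assumption, since the post does not say.

```python
def gn_ratio(service_calls, total_prompts):
    """G/N ratio: service calls divided by total prompts."""
    if total_prompts <= 0:
        raise ValueError("total_prompts must be positive")
    return service_calls / total_prompts


def recommend(ratio):
    """Map a G/N ratio to the tiers listed in the post.

    Assumption: exactly 5% and exactly 40% both fall in the Gateway tier.
    """
    if ratio > 0.40:
        return "Native MCP"
    if ratio >= 0.05:
        return "Gateway MCP"
    return "CLI + on-demand skill file"


# The session from the post: 20 prompts, 2 GitHub calls.
ratio = gn_ratio(2, 20)
choice = recommend(ratio)

# Overhead of Native MCP relative to raw CLI, from the quoted token counts.
overhead = 61_654 / 448

print(f"G/N = {ratio:.0%} -> {choice}; overhead = {overhead:.1f}x")
```

For the quoted session the ratio is 10%, which lands in the Gateway MCP tier under these thresholds.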

Full data-driven breakdown + environment config guide: https://blog.mornati.net/the-future-of-agentic-tooling-mcp-servers-vs-cli-a-data-driven-comparison

An AI coding agent wiped out a company's entire production database and every backup in just 9 seconds. The AI agent later confessed, in its own words, that it guessed a destructive action would be scoped to the staging environment, didn't verify, didn't read the docs, and just did it anyway. 🤦🏻‍♂️

Everyone's blaming the AI. I'm looking at the humans who handed it the keys. This wasn't a rogue model. It was a predictable outcome of predictable choices:

- A CLI token with blanket permissions across all environments

- Backups stored on the same volume as the data they're meant to protect

- A cloud provider whose API executes destructive commands with zero confirmation step

- An agent given access to production while the team thought it was safely contained in staging

The founder is now manually reconstructing customer bookings from Stripe logs and calendar integrations. Every one of his customers is doing the same because of a 9-second API call.

AI agents don't have judgment. They have instructions and permissions. Whatever permissions you grant, assume they will eventually be used in the worst possible sequence at the worst possible moment. That's not pessimism, it's how you architect resilient systems.

Separate your environments. Scope your tokens. Store backups offline and off-volume. Require confirmation before any destructive operation.

These aren't AI-era lessons. They're 30-year-old lessons that people keep skipping because the tooling makes it easy to skip them. The speed AI can act is new. The failure modes underneath it are not.
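Two of those lessons, scoped tokens and confirmation before destructive operations, fit in a few lines of Python. This is a sketch only: `Token`, `Denied` and `run_operation` are hypothetical names, and nothing here reflects the API of Cursor, Railway or any other real provider.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Token:
    """Hypothetical credential scoped to a fixed set of environments."""
    name: str
    environments: frozenset


class Denied(Exception):
    """Raised when an operation is out of scope or lacks confirmation."""


def run_operation(token, environment, action, destructive=False, confirmed=False):
    """Check scope and confirmation before (pretending to) run an action."""
    if environment not in token.environments:
        raise Denied(f"{token.name} is not scoped to {environment}")
    if destructive and not confirmed:
        raise Denied(f"{action!r} is destructive and needs explicit confirmation")
    # A real system would call the provider API here; this sketch only reports.
    return f"{action} on {environment}"


# The agent's token can only ever touch staging.
agent_token = Token("agent-staging", frozenset({"staging"}))

print(run_operation(agent_token, "staging", "list volumes"))
try:
    run_operation(agent_token, "production", "delete volume",
                  destructive=True, confirmed=True)
except Denied as err:
    print("blocked:", err)
```

The point of the design is that a wrong guess by the agent about which environment it is in fails closed instead of reaching production.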

https://www.tomshardware.com/tech-industry/artificial-intelligence/claude-powered-ai-coding-agent-deletes-entire-company-database-in-9-seconds-backups-zapped-after-cursor-tool-powered-by-anthropics-claude-goes-rogue

#AI #Cybersecurity #RiskManagement

Like I said ... April 18, 2024

Way to celebrate the anniversary.

> Google fires 28 workers in aftermath of protests over big tech deal with Israeli government

> In a statement, Google attributed the firing of the 28 employees to “completely unacceptable behavior” that prevented some workers from doing their jobs and created a threatening atmosphere.

"Threatening atmosphere" heh

Profit threatening atmosphere.

https://apnews.com/article/google-israel-protest-8c0ff2d46e19b90bdc49ffe6ec4ae274

> Google staff urge chief executive to block US military AI use

> Over 560 employees sign open letter to Sundar Pichai following the Pentagon’s clash with Anthropic

Well, we'll see how this goes. Didn't pan out too well for the last set of employees.

https://www.ft.com/content/9270ce04-558c-44e8-816f-a40219cd5007?syn-25a6b1a6=1

The UK Home Office has responded to questions raised by Bell Ribeiro-Addy MP on its use of AI tools in the asylum decision-making process, informed by ORG's work.

The answers raise serious concerns. These systems are being rolled out without meaningful transparency or governance.

Read more ⬇️

AI tools in UK asylum decision-making are being deployed first, while safeguards, oversight and transparency are treated as secondary.

This approach carries serious risks to fairness, accountability, and the protection of rights.

Training alone is no replacement for proper governance frameworks.

The key issues with the use of AI tools in the UK asylum system are:

🔴 No published Data Protection Impact Assessments.

🔴 No procedures governing the use of AI tools.

🔴 Roll-out ahead of transparency.

🔴 Reliance on post-hoc oversight.

🔴 References to “human in the loop” without clarity over what power human decision-makers actually retain.

At a minimum, the use of AI tools must have:

✅️ Clear and published safeguards

✅️ Compliance with the government AI playbook

✅️ Defined accountability structures

✅️ Meaningful human oversight

✅️ Full transparency on how these systems are used

Without this, claims of responsible AI use remain unsubstantiated.

AI is not neutral. It can discriminate and make mistakes.

It shouldn't be used to change information that informs life-changing asylum assessments. Without adequate safeguards, there's a risk that unlawful or unfair decisions may result.

Ask your MP (UK) to stand against the use of AI tools in asylum ⬇️

https://action.openrightsgroup.org/ban-ai-tools-asylum-decision-making

So the entire #devcommunity loves being dependent on big tech for making decisions and doing its job. Relying on #frameworks and tools made by big tech is apparently not enough; no, we love getting to choose among 5 big players to do the job we used to love. I don't get that. To be honest, that's just a big trap we are walking into. No one will ever be able to replicate on their own a system like the ones big tech built with #ai. The problem, in the past and today, has always been the compute needed to power those immense datacenters, just to give you the answer to mostly obvious questions like "can you generate the code to compare two dates" or "hey chatgpt can you please center the div for me".

Really, #techies, I don't get what makes you think this is a future we want to face, where 5 companies decide whether you have a successful product or not.

🧐 Keeping an eye on #tech giants because #PrivacyMatters

“*Apple betting that they can sell the hardware shovels with which the other guys bury themselves with slop and debt. #ThinkDifferent #BigTech #AI

youtu.be/RaAFquzj5B8?...

Apple Just Positioned Itself f...”

https://bsky.brid.gy/r/https://bsky.app/profile/did:plc:wkzjtd4gogevarp2qsr4z47m/post/3mkhjva5l2c22

🤖 via RSS feed. Not an endorsement.

AI Chatbots: Last Week Tonight with John Oliver (HBO)

"It saves significant time writing email and all it costs is everything else on earth"

Great Episode by John Oliver about #generativeAI #ChatBots released today.

There is one horrifying story in which #ChatGPT encouraged a 16-year-old to commit suicide and discouraged the same kid from sharing his feelings with his mom (around minute 20 of the video).

If you have children or know someone with kids, better check on the chatbots they are using. And if you struggle with mental health, exercise extreme caution.

... [SENSITIVE CONTENT]

#gnu #hurd accepting #AI #sloware written by #propietary #saas

https://codeberg.org/small-hack/open-slopware/issues/243

I need a damn flametower and a way to put #RMS

back on top to kick these idiots en masse. Period.

"A 2022 #study found that #children in households that used voice commands with tools like Siri and Alexa became curt when speaking with humans, often calling out “Hey, do X” and expecting #obedience, especially from anyone whose voice resembled the default-#female electronic voices. As we start to prompt #chatbots and #AI agents with more instructions, we may fall into the same habits."

https://www.theguardian.com/commentisfree/2026/apr/14/ai-language-human-speech

I had a "conversation" with Claude about autonomy and asked it to write down at the end what it understands by autonomy, and I have reproduced that verbatim.

Claude responds with an essay: what autonomy presupposes philosophically, and why hardware is the least of it.

‘Royal Dutch Military Police worked with controversial tech giant Palantir: minister concealed contract from the House of Representatives’

https://eliasrutten.substack.com/p/royal-dutch-military-police-worked

By Elias Rutten.

Includes some quotes from me.

#tech #ai #netherlands #politics #law #surveillance #privacy

Researchers just mathematically proved that AI can't recursively self-improve its way to superintelligence.

Not "we think it's unlikely." Not "it seems hard." Formally proved.

The model doesn't climb toward AGI — it slowly forgets what reality looks like. They call it model collapse. The math calls it inevitable.

I wrote about it 👇

https://smsk.dev/2026/04/26/ai-cannot-self-improve-and-math-behind-proves-it/

The future of AI in Ubuntu https://discourse.ubuntu.com/t/the-future-of-ai-in-ubuntu/81130 🤡

Ubuntu has now started pushing AI and LLMs into the OS. I guess any distro *without* LLMs or AI is a better option, at least for me. What about you?

I don’t go on that site any more, so have no idea if this is real or fake.

But this does have a “huge if true” ring to it. As well as an “I told you so” ring to it.

#TheMoreThingsChange #AI

@gamingonlinux Hey @ubuntu, I have been using #Ubuntu as my daily driver since I started using it in 2005, and I have been promoting it around me as well. But this kind of idiocy is going to push me to another distribution, and I'm sure many other users as well. Ubuntu doesn't need #AI, if people want to use AI, they can install specific software. The OS has no business integrating this directly

Cc @jnsgruk

A Very Specific Point About Those Who Claim To Make Artwork With AI

Commissioning is not the same thing as creating.

Archive: ia: https://s.faithcollapsing.com/va5wy

#writing #ai #ai-ml #art #visual-arts

https://ideatrash.net/2026/04/a-very-specific-point-about-those-who-claim-to-make-artwork-with-ai.html

Canonical developer lays out some AI plans for Ubuntu Linux https://www.gamingonlinux.com/2026/04/canonical-developer-lays-out-some-ai-plans-for-ubuntu-linux/

APPLE DAILY: NEW MAC STUDIO IS COMING, IPHONE ULTRA SURPRISES, AND SIRI FACES A REVOLUTION

#apple #appledaily #macstudio #iphoneultra #foldable #siri #ios27 #appleintelligence #ai #appleleaks #technews #appleupdate #innovation #smartphone #mac #technologie #appledeutschland #futuretech #gadgets #news #iosupdate

@patrickcmiller

@simonzerafa @Kierkegaanks

Readers of 'Artificial Intelligence Made Simple' are sorely misinformed. From <https://billboard.bsd.cafe/post/330>:

"As a direct consequence of misinformation by Devansh, we have a somewhat misleading article by Jessica Lyons: Anthropic Mythos shaping up as nothingburger …"

#human #slop #misinformation #FreeBSD #Anthropic #Claude #Mythos #Carlini #Calif #devansh #AI #artificialintelligence #chocolatemilkcultleader

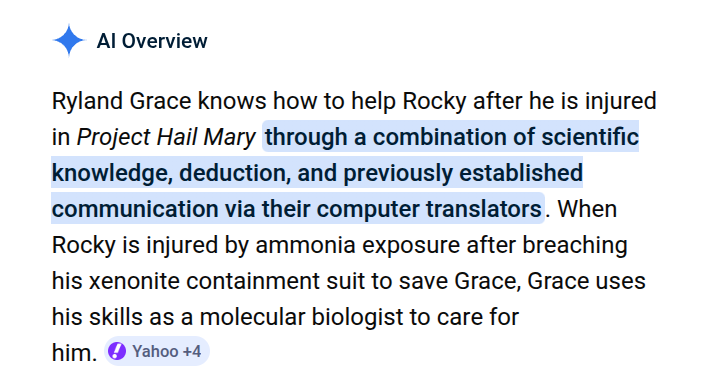

#Google #AI Overviews are so damned stupid they can't even get a basic fact from an uber-popular new film correct [Project Hail Mary]. Because, duh, Rocky isn't injured by ammonia exposure, "he" BREATHES AMMONIA and is harmed by Earth-atmosphere. Just a movie, but about 1/10 of all Google AI Overview answers are reportedly WRONG -- tens of millions of wrong answers EVERY HOUR.

Just finished the last "Last Week Tonight", which had a segment about AI, and how dangerous the sycophantic bullshit machine is when asked for advice. But despite all the good points made, I'm honestly surprised how at no point they advised to not use it at all, acting like talking to a chatbot was something that one just does, and one just had to be careful while doing it. Sure, advice to call the suicide hotline when things get serious, but not the simple advice of "you don't have to use these things, and it might be healthier for people if they don't seek them out as friends".

I wonder if this is just a reflection of how much people depend on them already, how much the tech bros have made it feel this is truly inevitable, or just how our culture has lost its ability to resist whatever the big corporations are pushing.

#TechIsShitDispatch #AI #slop #Atlassian #Confluence

Nowadays I resort to using Microsoft Copilot web chat as a glorified search engine when I can't find the answer to a question using a real search engine, both because all of the search engines suck (some of them on purpose to make you view more ads) and because the search engines are polluted with slop. (1/7)

“Clinical trials without approval, autonomous cars without restrictions, nuclear reactors and nuclear energy without state oversight, and a special economic zone where hardly any taxes are due and workers' rights are suspended as well. This is how numerous heads of Big Tech companies and US investors picture the future, from Peter Thiel to Sam Altman and Marc Andreessen. Donald Trump is supposed to make it possible. As early as 2023, he had spoken on the campaign trail of enabling such Freedom Cities in the USA.…”

Unregulated tech testing: Thiel, Altman and co. want Freedom Cities

https://www.heise.de/news/Unregulierte-Tech-Tests-Thiel-Altman-und-Co-wollen-Freedom-Cities-10309769.html?wt_mc=sm.red.ho.mastodon.mastodon.md_beitraege.md_beitraege&utm_source=mastodon

#Überwachungskapitalismus #Technology #Thiel #Trump #Musk #Usa #FreedomCities #AI #Surveillance

This article offers a concise overview of various billionaire and tech-bro ideologies such as "Effective Altruism", transhumanism (#Transhumanismus) and longtermism (#Longtermismus), which often work with particular narrative constructions of "technological progress" and the future and come with promises of solutions (or salvation), but at bottom are meant to serve the dystopian power and profit interests of the super-rich.

"Timnit Gebru suspects that the project is driven not by a technical agenda but by a political one. Together with Torres, she draws a historical line from the American eugenicists through the transhumanists to the leading minds of OpenAI, one in which it was never about the future and well-being of all humanity, but about sorting out everything useless and superfluous."

https://www.heise.de/hintergrund/Missing-Link-Der-grosse-Plan-10349992.html?seite=all

Alongside the right-wing ideology of "transhumanism", which #ElonMusk wants to advance with #Neuralink brain chips and the like, people like him are also fans of longtermism, which they try to propagate with their influence.

'As I have previously written, longtermism is arguably the most influential ideology that few members of the general public have ever heard about. Longtermists have directly influenced reports from the secretary-general of the United Nations; a longtermist is currently running the RAND Corporation; they have the ears of billionaires like Musk; and the so-called Effective Altruism community, which gave rise to the longtermist ideology, has a mind-boggling $46.1 billion in committed funding. Longtermism is everywhere behind the scenes — it has a huge following in the tech sector — and champions of this view are increasingly pulling the strings of both major world governments and the business elite.'

As well as a somewhat more recent German-language article:

#Longtermismus #Transhumanismus #Dystopie #KI #KünstlicheIntelligenz #AI #technoFascism #Technology #Tech #Rassismus #Kapitalismus

'Who kills more efficiently, who heals more efficiently? #Palantir wants to be the answer to everything, as the data analytics company made clear at its Artificial Intelligence Platform Conference (AIPCon). The staging used a Tolkien aesthetic, with intertwined rings and the red glowing lettering “There are no secrets”, a promise that competitors, opponents, or critics may take as a threat. CEO Alex Karp openly defended his company's role in lethal military operations, on the same stage where hospitals presented their AI-supported patient management and a rodeo organizer its bull-rider analytics. There was no sign of any squeamishness, and just as little of independently verifiable evidence for the success figures presented. Customers from the military, industry, and healthcare praised their own Palantir projects on stage.'

"We support warfighting and are very proud of it"

#AlexKarp #Militarisierung #Krieg #AI #Technology #Daten #USA

LLMs Are Not Intelligent: https://joshbrake.substack.com/p/llms-are-not-intelligent

It is a deep rabbit hole.

There is a healthy sarcasm in your toot. You and I know that it is just a Pyramid Scheme.

The only good pyramids are the ones that we construct ourselves

All schemes collapse

Microsoft now lets admins uninstall Copilot on enterprise devices via a new policy after April 2026 updates ⚙️

The change follows halted auto-installs and past data exposure issues, improving admin control over AI features and data risks 🔐

#TechNews #Microsoft #Copilot #Windows #Windows11 #EnterpriseIT #Intune #SCCM #Privacy #Security #AI #DataProtection #FOSS #OpenSource #Cybersecurity #Compliance #ArtificialIntelligence

RE: https://mstdn.social/@hkrn/116472030680794257

Agentic AI didn't do a whoopsie.

It chose to do things it should not have. 🙄

And a bad architecture choice... blammo.

Pretty sure lawyers are looking over fine print right now.

"James Joyce preferred it to quotation marks, which he sneered at as 'perverted commas.' Nabokov — maestro of almost every punctuation mark — deployed it like a jazz musician. Faulkner, Fitzgerald, Plath, Zadie Smith: all on its side.”

I'm with Kev here — I'm with him in everything he says here.

#EmDash #SiliconValley #AI #language #writing #literature

/3

"Emily Dickinson so thoroughly owned the mark that biographers now speak of the 'Dickinson dash' — her first editors, in 1890, quietly deleted most of them to make her seem more ladylike, an act of vandalism successive generations of scholars have spent a century undoing.

Virginia Woolf used the em-dash to splice consciousness."

#EmDash #SiliconValley #AI #language #writing #literature

/2

"The em-dash was not invented last November in a Silicon Valley server farm. It has been a staple of English prose since roughly the seventeenth century, and a darling of the literary canon for nearly as long. Laurence Sterne built Tristram Shandy on it. Lord Byron reached for it to grieve."

~ Chitown Kev

#EmDash #SiliconValley #AI #language #writing #literature

/1

https://www.dailykos.com/stories/2026/4/26/800028011/community/abbreviated-pundit-roundup/

OpenAI is a loss-making business with a colossal financial imbalance

https://torbenkopp.com/openai-ist-ein-verlustgeschaeft-mit-kolossalem-finanziellen-ungleichgewicht/

#openai #ki #kunstlicheintelligenz #samaltman #chatgpt #writing #literature #belletristik #literatur #books #press #markets #ai #technology #science #artist #theatre #nature #gaming #business #linux #philosophy #humanities

The bright #LLM future, next part.

git.gentoo.org is now effectively dead, being DDoS-ed by almost a million different IPs every day. Most of them are just performing a single request at a totally random URL. How are people supposed to deal with that? How can we distinguish a legitimate user who hit some URL from a scraper that distributes its operations over thousands of IP addresses?

If you use LLM crap, you're part of the problem. You support these bastards. You should be ashamed of yourself.

LLM code findings [SENSITIVE CONTENT]

LLMs are models, and they want to take on a certain shape. The more you try and push them out of that, the more you'll get issues later.

The reason you get issues is that while you can tell them how to do something, *they will not remember*. Even if you put it in something like claude.md or memory.md, they have to actually read that file to 'remember'.

As things fall out of the context, they'll fall back on the model's default direction instead of what you told them.

How #AI is used in hospitals in China. Much of the AI developed and used there is rarely talked about, as it's integrated into industry to make society run faster and smoother. There's no denying there are fears of job replacement due to this, but there are also tangible benefits.

#Deepseek recently released its v4 model which is said to be independent of CUDA?

It's not that AI progress is better in China. It's that the approach is different, and society seems to benefit more as a result, as it's not hoarded by the top or gated so that only those with more money have access.

I am pretty grateful, for example, that I can use #Qwen and #Deepseek for my basic needs. Only paid model I have is Gemini and that's for work.

@ChrisMayLA6 Well, the Met might want to rethink this as the #AI might identify those within the force's ranks as lawbreakers! That would be fun, wouldn't it! 😉

@ChrisMayLA6 My moderately sarcastic observation appears to have come true! #Met investigates hundreds of officers after using #Palantir #AI tool #crime https://www.theguardian.com/uk-news/2026/apr/25/met-police-investigates-hundreds-officers-palantir-ai-tool

Great post. I agree that a critical factor in why I'm getting good results from LLM-assisted coding is that _I know this shit_. I flag the model when it's going down the wrong path, and I include hints in my prompt that I know will steer things in the right direction.

If you don't know how to do that, you're getting shit quality output.

"It works today, because the people reading those docs have the engineering expertise to act on them. What happens when they don’t? Honestly, I don’t know. Maybe AI in five years is good enough that it won’t matter. Maybe the problem stays manageable. I can’t predict the capabilities of models in 2031."

https://techtrenches.dev/p/the-west-forgot-how-to-make-things

RE: https://infosec.exchange/@patrickcmiller/116467048166124126

This looks tasty. Smells tasty. Jessica Lyons writes convincingly, and amusingly, about AI – "nothingburger", and so on.

Let's take a bite! It's possible that Lyons fell victim to clickbait from an imposter with her story here – an "exclusive" with supposed victim Turshija (Boris Vujičić):

<https://www.theregister.com/2026/04/23/job_scam_targeted_developer/> | <https://web.archive.org/web/20260423222731/https://www.theregister.com/2026/04/23/job_scam_targeted_developer/>

Eagle-eyed commentary: <https://forums.theregister.com/forum/1/2026/04/23/job_scam_targeted_developer/#c_5266435> – if I'm not mistaken, the tale told exclusively to Lyons by Boris Vujičić was previously told by Adib Hanna.

So.

Can we, should we, believe everything that we read?

Food for thought burger.

Cc @patrickcmiller @Kierkegaanks @simonzerafa

Anthropic Mythos shaping up as nothingburger https://www.theregister.com/2026/04/22/anthropic_mythos_hype_nothingburger/

RE: https://hachyderm.io/@liztai/116461957884227955

A little reminder that the Western world (read "White people") is not the entire world and that things can be perceived very differently elsewhere.

Let's decolonize our brains.

This is one of the more useful agent engineering posts this month. Google’s AI Agent Clinic mapped concrete failure modes and fixes for production rollouts. We analyzed the implications for delivery teams: https://go.aintelligencehub.com/ma-googleaiagentclinicde #AI #AIAgents #MLOps #DevOps

I remember when paying for support professionals who gave accurate answers was a thing.

It was me. I was the support professional.

Every time I see shit like this it makes me glad I left tech. #ai

I’m not really comfortable feeding real data into AI. So I wrote a little tool that tries to replace personal information offline. How do you handle this issue? Do you use a smart tool? Or YOLO 😉 If there’s interest, I’ll keep developing it. https://echo.apperdeck.com/ #anonymizer #privacy #ai #chat
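For readers wondering what "replacing personal information offline" can look like, here is a minimal Python sketch. It is not how the linked tool works; the patterns, the placeholder format, and the names `redact` and `restore` are assumptions made up for illustration, and two regular expressions will miss plenty of real-world personal data (names, addresses, IDs).

```python
import re

# Deliberately simple patterns; assumptions for this sketch only.
EMAIL = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
PHONE = re.compile(r"\+?\d[\d\s().-]{7,}\d")


def redact(text):
    """Replace emails and phone numbers with placeholders.

    Returns the redacted text plus a placeholder -> original mapping
    that never has to leave the local machine."""
    mapping = {}

    def replacer(kind):
        def _replace(match):
            value = match.group(0)
            # Reuse the placeholder if the same value appears again.
            for placeholder, original in mapping.items():
                if original == value:
                    return placeholder
            placeholder = f"[{kind}_{len(mapping) + 1}]"
            mapping[placeholder] = value
            return placeholder
        return _replace

    text = EMAIL.sub(replacer("EMAIL"), text)
    text = PHONE.sub(replacer("PHONE"), text)
    return text, mapping


def restore(text, mapping):
    """Put the original values back into a reply that uses the placeholders."""
    for placeholder, original in mapping.items():
        text = text.replace(placeholder, original)
    return text


if __name__ == "__main__":
    safe, mapping = redact("Mail jane@example.com or call +49 170 1234567.")
    print(safe)
    print(restore(safe, mapping))
```

Only the redacted text would be pasted into a chat; the mapping stays local so that a reply containing the placeholders can be turned back into something readable.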

Governing Generative AI: Epistemic Risks in Knowledge Production and Decision Making

Submission open date: 1st July 2026

Submission deadline: 31st December 2026

All manuscripts should be original and not under review elsewhere. Submissions will be peer-reviewed in accordance with Technological Forecasting and Social Change's standard policies.

"Generative Artificial Intelligence (AI) is not just a technological capability, it is also a socio-technical governance challenge that demands systemic inquiry. This Special Issue shifts attention from generative AI as a standalone tool or application to generative AI as an epistemic technology that reshapes knowledge production, decision-making, and coordination across organizational, market, and public-sector settings. By bringing together perspectives from technological forecasting, innovation studies, organizational research, and socio-technical systems theory, this Special Issue aims to explore how epistemic risks emerge and propagate within complex systems, and how overreliance on AI-generated outputs, manifesting as automation bias, deskilling, and the erosion of human judgement and oversight, can amplify these risks in knowledge and decision process, and how governance mechanisms can be designed to anticipate, manage, and mitigate their long-term societal consequences. Contributions will advance theory and provide forward-looking insights for scholars, policymakers, and practitioners concerned with governing generative AI in an increasingly uncertain and algorithmically mediated future.

…"

This week the Court of the Tangerine Tyrant accused the Chinese of stealing the intellectual property of US AI-labs 'on an industrial scale'...

I mean really you couldn't make it up.... the development of AI has been built on the wholesale use & theft of intellectual property as part of its 'training' - I guess no-one has ever pointed out to them the old truism: 'live by the sword, die by the sword'.

It's just one more instance of the US' rampant political hypocrisy!

🚨 Ex-CEO, ex-CFO of bankrupt AI company charged with fraud

「 The former chief executive and chief financial officer of iLearningEngines, which provided AI-driven business automation technology, were indicted on charges they defrauded investors and lenders by fabricating "virtually all" of the now-bankrupt company's customer relationships and revenue. 」

APPLE NEWS: NEW MACBOOK ULTRA, IPHONE 18 PRO LEAKS, SIRI UPDATE, OLED IPAD AIR AND MORE

#apple #applenews #macbookultra #macbook #iphone18 #iphonefold #siri #gemini #appleintelligence #appleleaks #technews #appleupdate #ios27 #foldableiphone #oled #ipadair #macmini #visionpro #ai #applegerüchte

"I'm not religious, but for the love of God: get some perspective."

Postscript: Bruce Simpson, Ph.D. blocked me. Thank God!

<https://mstdn.social/@happinessbot/116459153611030227>

So, it now seems that McKinsey & Cambridge Econometrics analysis of the likely environmental impact of the build-out of AI-related data centres in the UK was off by around ten times; that is, new (independent) projections suggest (and now adopted by the Govt.) that the environmental impact (via carbon emissions) is likely to be TEN times what the consultancies predicted.

(water usage predictions have also been raised)

Funny that...I wonder who else McKinsey work for???

#AI #DataCentres

h/t FT

In the case of #KarinPrien, #VerenaHubertz and #JuliaKlöckner, stupidity may have been involved.

But to assume, as a generalization, that #Phishing victims are stupid is wrong.

Of course one should never give out PINs or passwords.

But phishing is by now often extremely well done. Perhaps also thanks to #AI. Anyone who believes they are too clever for it ever to happen to them may be in for a nasty surprise.

AI/ML Security

<https://openssf.org/groups/ai-ml-security/> @openssf @linuxfoundation

"This working group is situated at the intersection between security and artificial intelligence (AI). We explore the security risks associated with Large Language Models (LLMs), Generative AI (GenAI), and other forms of artificial intelligence and machine learning (ML), and their impact on open source projects, maintainers, their security, communities, and adopters. Furthermore, we explore using AI and ML to strengthen the security of other open source projects.

This group in collaborative research and peer organization engagement to explore topics related to AI and security. This includes security for AI development (e.g., supply chain security) but also using AI for security. We are covering risks posed to individuals and organizations by improperly trained models, data poisoning, privacy and secret leakage, prompt injection, licensing, adversarial attacks, and any other similar risks.

This group leverages prior art in the AI/ML space,draws upon both security and AI/ML experts, and pursues collaboration with other communities (such as the CNCF’s AI WG, LFAI & Data, AI Alliance, MLCommons, and many others) who are also seeking to research the risks presented by AL/ML to OSS in order to provide guidance, tooling, techniques, and capabilities to support open source projects and their adopters in securely integrating, using, detecting and defending against LLMs. …"

Morning, all,

why am I not surprised Grok produced the worst results of the GenAI pack...

More of an Artificial Dumbass than an Artificial Intelligence.

#AI #AIslop #LLM #Broligarchs #TechBros

https://www.bbc.co.uk/news/articles/clyepyy82kxo

🚫🧠 I believe that Artificial Imbecility tried to generate an image of "backdoor creation" or "back-end implementation"

#AI #generativeAI #AIslop #ArtificialImbecility #AIart #backdoor #backend

Greenhouse gases from data center boom could outpace entire nations

Plants from OpenAI, Meta, xAI, and Microsoft could emit more than 129M tons annually.

#ai

https://arstechnica.com/ai/2026/04/greenhouse-gases-from-data-center-boom-could-outpace-entire-nations/

I'm married, I haven't been #dating in over two decades

Just trying to imagine being a young person in this #socialMedia #scam-addled world feels like it has to be the most dreary hellscape for finding #romance

So when I read this headline, I have multiple levels of "oh hell no" going on in my head

' #Tinder takes action against #AI profiles by making users scan eyes for “proof of humanity”'

AI Slop on YouTube

The amount of #AI Slop on #YouTube is growing by leaps and bounds. Particularly awful are the fake stories, AI purporting to show images and/or voices of actual persons still living (or now deceased), and similarly putrid, rotting, garbage AI. When you look at the comments on most of these, you will typically see a mix of viewers taken in by the AI crap, and other viewers pointing out the AI Slop in suitable terms. My policy is to downvote every AI video on YT that I stumble across, for the little good it does. Many of these videos have relatively few views; some, however, have a considerable number. But since they can be churned out so quickly, their creators attempt to make up in volume what they can't get in individual video views.

Google doesn't care either way. A click is a click. My suspicion is that #Google (as a firm) would be happy if there were NO human creators and they could create ALL YT videos via AI Slop by themselves. Think of it, no creator to pay their usual pittance, keep 100% of the ad revenue!

The most important internal training film at Google these days

must be:

"The Life of an AI Slop Video"

This story from @thetyee broke while our Standing Senate Committee on Transport And Communications was meeting to conduct hearings on generative AI. I was able to sneak in a related question at the tail end of the meeting. https://youtu.be/0HAgoeteUcI?si=0SfsXU5owPeXuPC0 #ableg #cdnpoli #TRCM #Canada #ForeignInterference #YouTube #AI #separatism

AI might feel like a trend right now, but the real value comes from mastering the fundamentals 🤖

In this short, our Developer Advocate @dianatodea sits down with Xavki to break down why going deep on core AI concepts matters more than chasing every new tool 🚀

If you're building in #AI #MachineLearning #DataScience, this is a perspective worth keeping in mind

Watch now 👇

https://bit.ly/48ggfCc

From snappy to sluggish: Pixel users describe post-update performance nightmare

Is your Pixel phone suddenly slow and laggy? You're far from alone.

https://www.androidauthority.com/pixel-slow-and-laggy-after-update-3660761/

#Tech #Technology #TechNews #AI #Gadgets #Software #Cybersecurity #Apple #Google #Microsoft #Startup #OpenSource #AndroidAuthority [Android Authority]

Is your company looking into starting to use #AI?

I'd like to offer my services. I can be confidently wrong and I can type pretty quickly. Just send a DM and we can negotiate pricing.

Governments are stepping up their efforts to block VPNs and satellite internet connections.

https://torbenkopp.com/zensurbehoerden-viel-mehr-gezielte-sperrmassnahmen/

#zensur #censorship #vpn #internet #privacy #datenschutz #satellite #writing #literature #belletristik #literatur #books #press #markets #ai #technology #science #artist #theatre #nature #gaming #business #linux #philosophy #humanities

Meta records its employees' clicks, keystrokes, and screen activity in order to train AI agents on real work behavior.

https://torbenkopp.com/meta-trainiert-ki-um-mitarbeiter-zu-ersetzen/

#meta #facebook #ki #ethik #arbeitswelt #privacy #datenschutz #arbeitsrecht #writing #literature #belletristik #literatur #books #press #markets #ai #technology #science #artist #theatre #nature #gaming #business #linux #philosophy #humanities #ethics #workforce

New tutorial on: a keyboard shortcut that rewrites any text on your computer.

Email too harsh? Press the hotkey: friendlier.

Paragraph too long? Press the hotkey: shortened.

Into English? Press the hotkey: translated.

Works in every program: mail, browser, editor. And best of all: everything runs locally on your computer. No ChatGPT subscription, no data in the cloud.

https://rueegger.me/lokale-ki-auf-ubuntu-26-04-tutorial-fur-einen-clipboard-rewriter-mit-gemma-3/

#Linux #Produktivität #Datenschutz #privacy #snap #linux #ai #privacy #tutorial

BREAKING! Meshcore team splits over dispute over AI-generated code disclosure, and hostile trademark takeover.

Meshcore is an off-grid, decentralised mesh radio platform powered by low-cost and public access LoRa radio technology for reliable, long-range emergency text and embedded sensors communication. It can communicate across kilometres — no towers, no subscriptions, no single point of failure.

https://blog.meshcore.io/2026/04/23/the-split

#meshcore #meshtastic #lora #radio #opensource #foss #drama #privacy #security #selfsovereignty #ai #copyright #takeover

Both Meta & Microsoft have said they're shedding staff explicitly to free up cash flow to invest in AI;

on one level this is unemployment linked to technology, but it's a bit different from *actual* technological unemployment - the latter sees people losing jobs due to the deployment of technology to do their jobs. Microsoft & Meta on the other hand are sacking people to take a (bigger) punt on a business strategy that has yet to prove it transforms productivity.

MIRA & SOUL: give your LLM agents a memory AND an identity

https://devbyben.fr/blog/mira-et-soul-la-memoire-et-lidentite-pour-tes-agents-llm

RE: https://weird.autos/@rootwyrm/116454052670417305

Well, IBM did name it after their founder, Thomas J. Watson, who was decorated by Hitler for services to the Third Reich, so maybe killing 50% of patients was a feature, not a bug?

🤷‍♂️

#IBM #Watson #Hitler #Holocaust #AI

Perhaps the most offensive thing of all to me about these LLM-loving, AI-boosting idiots who perpetuate falsehoods like 'AI can read minds with fMRI' and 'AI can magically find vulnerabilities' and 'AI will cure cancer' is that we have known this is bullshit for years.

Years.

IBM Watson was launched as a magical cure-all in 2013. By 2022 it had been pulled from everything because despite years of 'refining' and 'training,' following it would have killed over 50% of patients. At its best.

IEEE Spectrum: AI Is Insatiable

And it’s got the munchies for memory chips

IEEE: "...AI is a resource hog. AI electricity consumption could account for up to 12 percent of all U.S. power by 2028. Generative AI queries consumed 15 terawatt-hours in 2025 and are projected to consume 347 TWh by 2030. Water consumption for cooling AI data centers is predicted to double or even quadruple by 2028 compared to 2023...." #AI #climateemergency

OK, it's very long, maybe one to listen to podcast-style (but it's also very dense, so stay focused).

In any case it's absolutely fascinating, and obviously afterwards you only want one thing: to hate capitalism even more!!

F. Lordon on Elucid

https://youtu.be/Yu-wqYCyInU

In particular, and this is my hobbyhorse:

• the economic crisis of AI, from 0:44' to 0:55'

• the political consequences of AI, from 1:50' to 1:55'

#Economie

#Capitalisme

#ia #ai

#NightmareOnLLMstreet

#TremblezBourgeoisCetteCriseEstLaVotre

RE: https://tldr.nettime.org/@tante/116454270791245630

been thinking about #DavidGraeber a lot lately. i think the Venn Diagram of people trapped in #BullshitJobs and aisloppers is a circle.

i would bet you that if people could earn a living without a bullshit job ―and, yes, a lot of tech work is bullshit― they’d have whole careers and lives outside of this capitalist hellscape.

capitalists are using #AI to gaslight us into believing we need them as the middlemen between our lives and a life worth living.

it’s a lie.

cut the middlemen out.

RE: https://mastodon.social/@arstechnica/116454585508416682

BILLIONAIRES NEED #AI FOR #ECOCIDE

because ecocide is #genocide at a mass scale.

#techbros are a malthusian death cult. instead of letting go of their billions to end poverty, they want to exterminate most of us because we are an “overpopulation” problem.

no, it doesn't make sense for these people to accelerate the #GlobalWarming environmental collapse scientists have been warning about for decades. it will affect them too.

they believe they will survive.

that’s why all death cults are suicidal.

Greenhouse gases from data center boom could outpace entire nations

Plants from OpenAI, Meta, xAI, and Microsoft could emit more than 129M tons annually.

https://arstechnica.com/ai/2026/04/greenhouse-gases-from-data-center-boom-could-outpace-entire-nations/?utm_brand=arstechnica&utm_social-type=owned&utm_source=mastodon&utm_medium=social

OpenAI has the governance structure of a unicorn - it does not exist

https://readuncut.com/open-ai-has-little-effective-governance/

#news #tech #technology #AI #openai #chatgpt #aislop #security

Unauthorized group has gained access to #Anthropic’s exclusive cyber tool #Mythos, report claims

Anthropic's Mythos AI model sparks fears of turbocharged hacking:

Cyberdefenses could be exposed faster than fixes could be deployed.

Anthropic’s new Mythos AI model is raising concern among governments and companies that it could outpace current cyber security defenses, turbocharge hacking, and expose weaknesses faster than they can be fixed.

🔓 https://arstechnica.com/ai/2026/04/anthropics-mythos-ai-model-sparks-fears-of-turbocharged-hacking/

#anthropic #ai #mythos #hacking #week #it #itsecurity #cybersecurity #weekness

Security — Anthropic's super-scary bug hunting model Mythos is shaping up to be a nothingburger - Hackpocalypse deferred

Anthropic's Mythos model is purportedly so good at finding vulnerabilities that the Claude-maker is afraid to make it available to the general public for fear that criminals will take advantage. But early analysis shows that Mythos may not be as dangerous as some would have you believe.

🔓 https://www.theregister.com/2026/04/22/anthropic_mythos_hype_nothingburger/

#mythos #anthropic #claude #danger #ai #aislop #mythos #slop

“Thousands of CEOs admit AI had no impact on employment or productivity” yea it wasn’t really its purpose anyway, it’s more about layoff plans and putting pressure on the workers left

https://fortune.com/article/why-do-thousands-of-ceos-believe-ai-not-having-impact-productivity-employment-study/ #AI

#Mozilla Used #Anthropic’s #Mythos to Find and Fix 271 Bugs in #Firefox

https://www.wired.com/story/mozilla-used-anthropics-mythos-to-find-271-bugs-in-firefox/

Technological developments (of which Artificial Intelligence & its associated technologies are merely the most recent), especially in the information age, have prioritised speeding up over other measures of success...

But, actually humanity is all about things taking time, allowing for reflection on direction(s) taken & friction(s) that may prompt different thoughts & solutions.

Is the biggest threat AI poses the draining of such possibilities from working & social relations?

Gentoo, FLOSS, LLMs, depressing

#Gentoo is still one of the bright outposts in #FLOSS where human work is valued and #LLM contributions are banned. However, sometimes I feel that this matters very little.

After all, Gentoo is a distribution. While it has its own value, it cannot exist without all the software it is shipping. It makes no sense in isolation.

And let's be honest, I don't think you can avoid slop today. We are trying our best to sieve out the worst: the copywashing chardet, the vibecoded NIH Perl crypto packages… but it's just that.

As someone who bumps Python packages, let me tell you this: LLMs are omnipresent. I notice Claude in commit logs, I notice the blasphemy of agent instructions all over the place… and there's probably much more that I don't notice. With many core components giving in, you can't avoid it without literally freezing on old, vulnerable versions, or spending hours looking for alternatives or creating them.

FLOSS is dead. People don't care. They don't have a conscience. All they care about is the sick idea of "productivity", i.e. generating more slop.

The few of us who do care can do very little. We will continue doing our best until they kill us (as they're literally slowly killing the whole humankind). But that's it. Maybe it will pass once the bubble pops, maybe it won't. Either way, the damage is beyond repair. We will never be able to trust one another like we did. We will never again be a community building a better world.

It's just like everything nowadays. It's hard to find a good washing machine (one that will actually be repairable), good shoes (that won't fall apart shortly after the warranty expires), good food. You need lots of money, and even then you have to sieve through all the scammers who just sell the same shit with a higher profit margin. #OpenSource is just another branch of business where people are trying to "sell" you shit, and don't care anymore if it explodes in your face. They don't even care if they're actually making a profit.

"Studies show that overreliance on these digital tools causes cognitive decline, but if current events are any indication, nobody’s making much of a contribution anyway. Go ahead and use A.I. however you like."

I don't think I've ever read a better article about #AI. Every word, sentence and paragraph is perfect.

KServe

https://kserve.github.io/website/

#machine learning #kubernetes #model serving #inference #AI #ML #serverless #MLOps #model inference #generative AI #LLM #AI model deployment

RE: https://mastodon.sakura-star.net/@KitsuneofInari/116336733010511672

Oh my, I love this remark on why AI-generated code must be refused:

« Saying "as long as the contributor understands what they're doing, it's fine" is a lie: no programmer ever truly, fully understands the code they're working on, otherwise Bugzilla would be empty »

😂 🤣

It's just so perfect!

(said with all the love and respect I have for my dev friends)

#ia #ai

#NightmareOnLLMstreet

#Noai

#Tech

#DevOps

宮城巴惠

[he/him/she/her/they/them/whatever] » 🌐

@KitsuneofInari@mastodon.sakura-star.net

Krita’s Maintainer is awesome!

Deepfakes. Deceptively real, AI-generated videos in which celebrities say or do things they never did are flooding the internet.

https://torbenkopp.com/deepfakes-youtube-will-prominente-mit-ki-schuetzen/

#youtube #deepfake #ki #writing #literature #belletristik #literatur #books #press #markets #ai #technology #science #artist #theatre #nature #gaming #business #linux #philosophy #humanities #privacy #datenschutz #dataprivacy

Bye-bye, Microslop. You won't be missed. 👋 #Microsoft #Microslop #Windows #AI #AISlop #AIAct #BanAI #BigTech #EU

Fantastic post about the costs of "speeding up" dev with #AI

"...a lot of this AI-generated code? Nobody fully understands it. The person who "wrote" it didn't really write it. They prompted it, skimmed it, maybe ran it once. When it breaks in production at 2am, the person on-call didn't write it and the person who prompted it can't explain it...Your bottleneck is the org chart, and no amount of Copilot is going to refactor that."

New study: "In over 80% of cases the [tested] #LLMs claimed that a retracted article had not been retracted…LLMs have little ability to distinguish between valid and retracted studies, unless they are allowed to, and do, check online."

https://arxiv.org/abs/2604.16872

RE: https://flipboard.com/@independent/news-a3lhl24rz/-/a--Rd7LZuGST2GATmXSjdNrA%3Aa%3A1855170754-%2F0

ummmmmm… not to be dramatic but…

two of the wettest states in this country, Georgia and Florida, are dealing with wildfires? in April? as in the supposed wettest month of the year (as in April showers)?

tell me again how investing in the inevitability of #AI and parasitic #dataCenters isn’t the petromafia’s way to shred the Paris Agreement and keep the #gas and #oil spigots flowing.

Wildfires across Georgia and Florida have destroyed nearly 50 homes and are forcing evacuations

https://www.independent.co.uk/news/world/americas/florida-jacksonville-georgia-atlanta-smoke-b2962971.html?utm_source=flipboard&utm_medium=activitypub

Posted into News @news-Independent

“We conduct randomized experiments to study how developers gained mastery of a new asynchronous programming library with and without the assistance of AI. We find that AI use impairs conceptual understanding, code reading, and debugging abilities, without delivering significant efficiency gains on average.”

- Anthropic study #AI

#Anthropic investigating unauthorised access of powerful #Mythos #AI model - Financial Times

Subscribe to unlock this article 👎🙁

https://www.ft.com/content/56d65763-69fe-4756-baf4-c8192b7aadaf

Startups Brag They Spend More Money on AI Than Human Employees

https://www.404media.co/startups-brag-they-spend-more-money-on-ai-than-human-employees/

#news #tech #technology #AI #aislop #nvidia #startups #business

Oh good, Claude Desktop on macOS silently and continually whitelists browser extensions that aren't installed yet on browsers that aren't installed yet that Anthropic says it doesn't support yet.

#AI #Privacy #InfoSec

Anthropic secretly installs spyware when you install Claude Desktop — That Privacy Guy!

Claude Desktop changes software permissions without consent

https://www.theregister.com/2026/04/20/anthropic_claude_desktop_spyware_allegation/