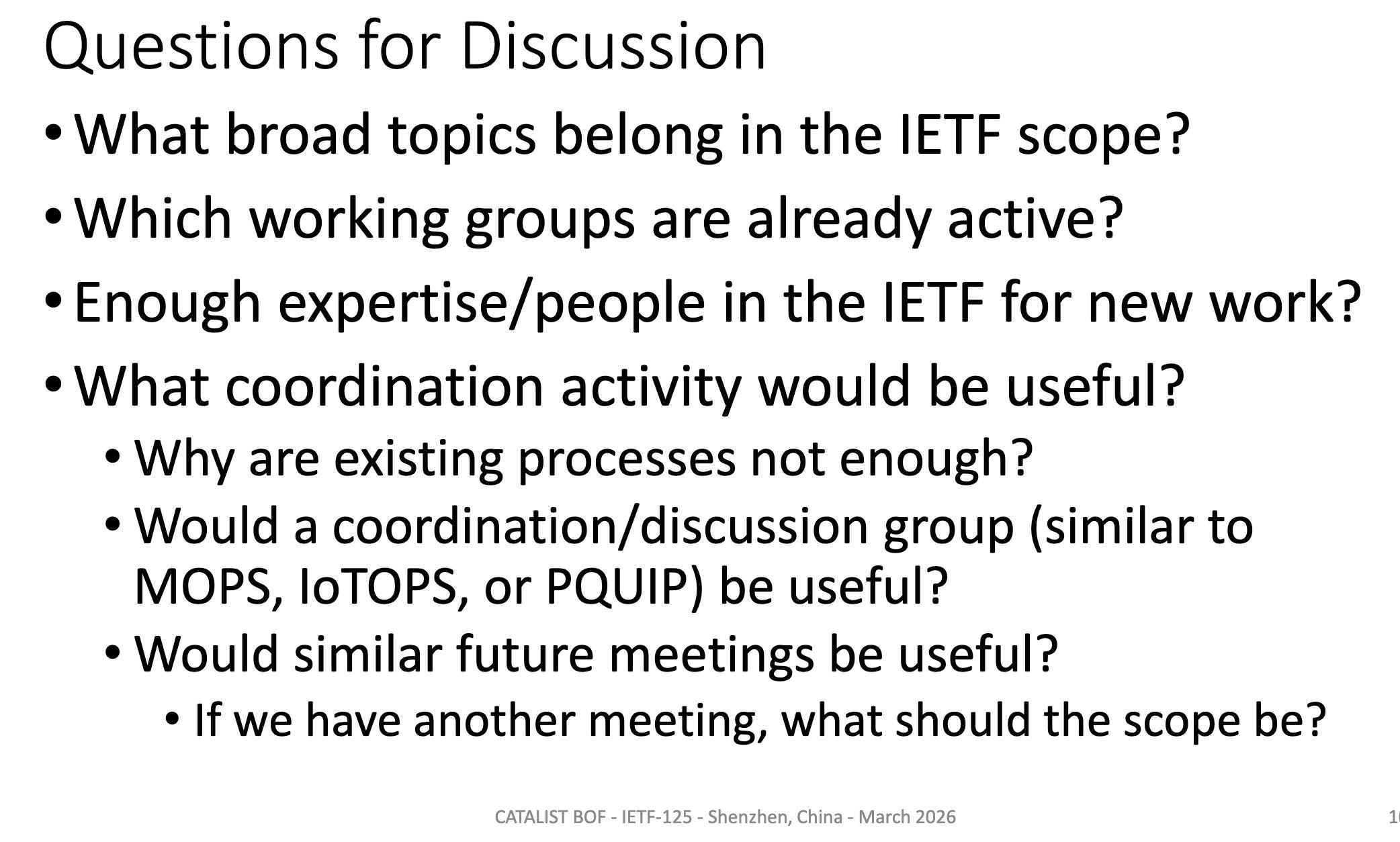

Search results for tag #ai

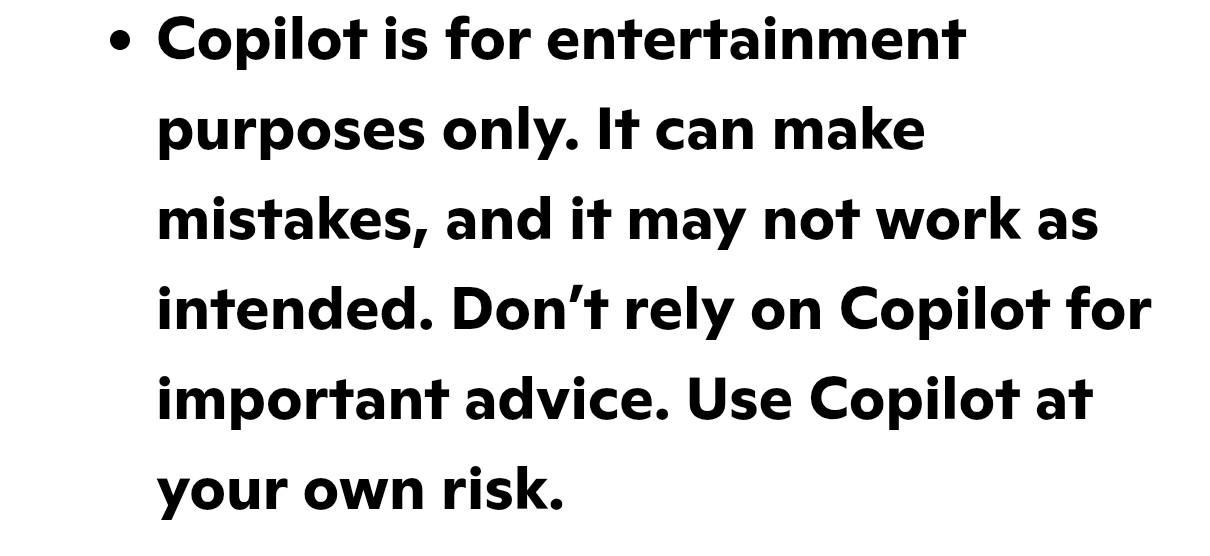

Didn't another company say its "product" was "for entertainment purposes only"?

Microsoft says Copilot is for entertainment purposes only, not serious use — firm pushing AI hard to consumers and businesses tells users not to rely on it for important advice

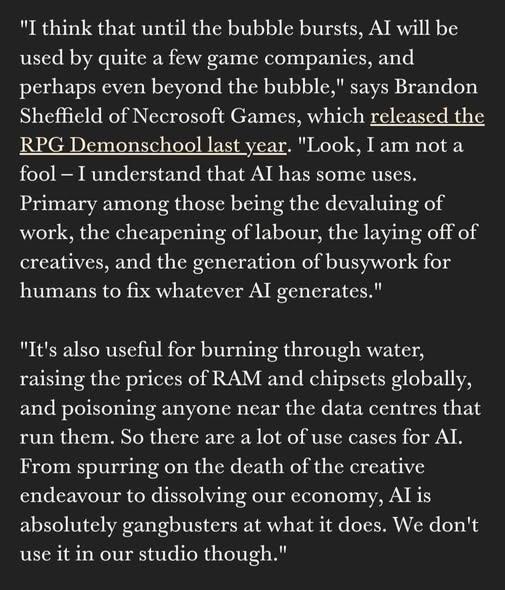

"I took the radium pills so I could credibly argue against the use of radium pills unlike the rest of you who are ignorant about radium" is not a good take. #AI

I’ve made this point before about how inane AI hype is now, but a computer beat the best chess player in the world in 1997. No one pretended, after 1997, it wasn’t worthwhile to have humans compete in chess. In fact, the world of chess developed strict protocols around computer use and you can get banned from tournaments if you use a computer program as you play. You are certainly shamed and mocked.

AI and writing needs to be treated the same way. I do think people should be shamed for using AI to help them write creatively. It’s an embarrassment, and a form of cheating.

#AI #GenAI #GenerativeAI #AIHype #LLMs #writing #tech #dev #coding #SoftwareDevelopment #SoftwareEngneering #software

Hot off the presses! DevOps'ish 303: Claude Code's Source, Iran's Tech Hit List, Microsoft's rough times, and More https://devopsish.com/303/ #DevOps #Cloud #Kubernetes #AI #Tech #News #Newsletter

🙄 Bluesky Users Respond With Overwhelming Disgust to Platform's New AI

「 Graber also reshared a post by a different user who claimed people “on the left” were being “shortsighted” by being willfully blind about AI, and that the argument “‘hope it goes away’ doesn’t have a great track record as a strategy for contesting control of new political domains and technologies.” 」

https://futurism.com/artificial-intelligence/bluesky-users-disgust-new-ai

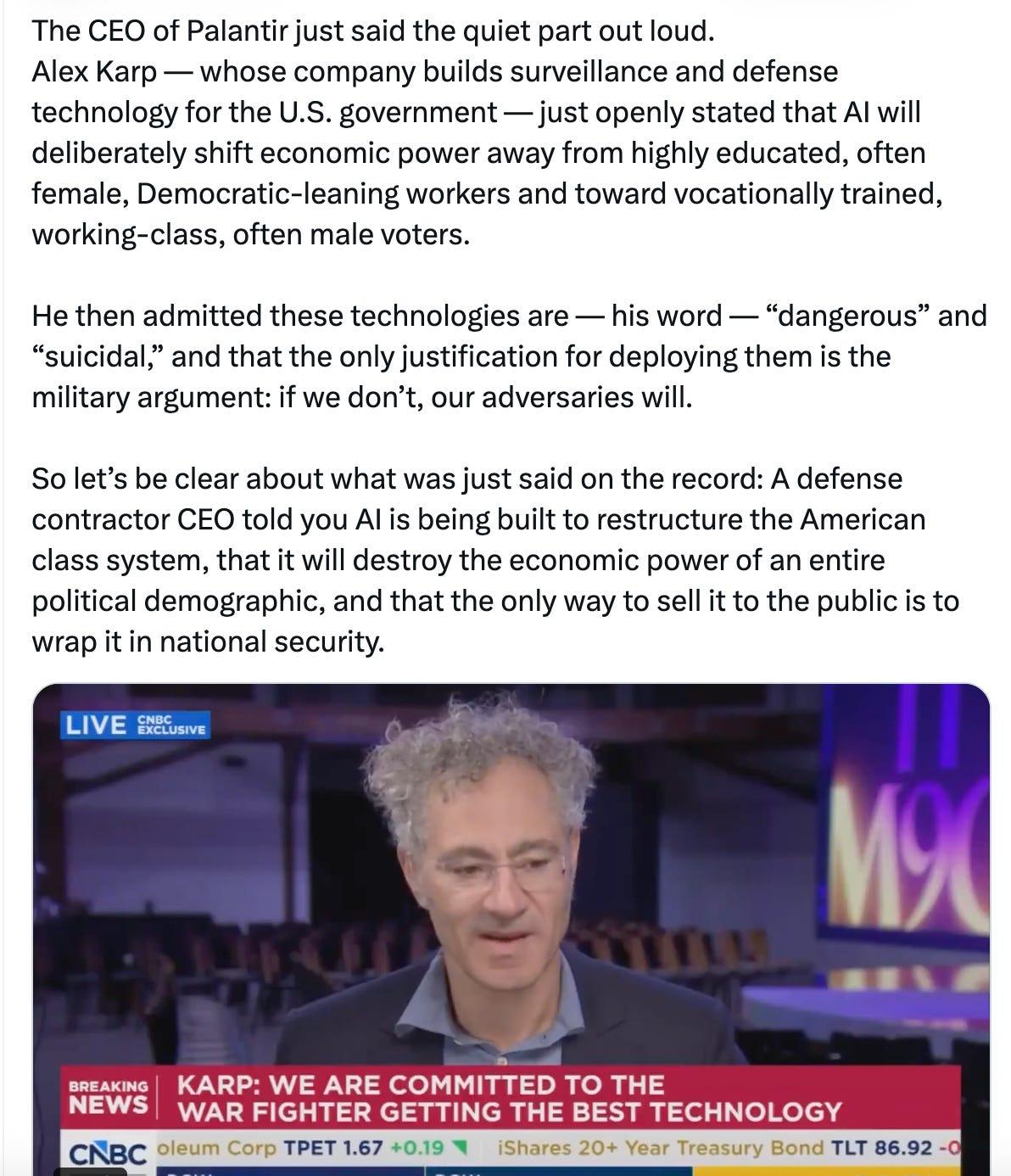

「 Palantir cofounder and CEO Alex Karp opined that AI will undermine the influence of “highly educated, often female voters” and empower working class men instead. And anyone who doesn’t realize this political reality, he added, belongs in an “insane asylum.” 」

https://futurism.com/artificial-intelligence/ceo-palantir-ai-women

Had a tech bro email me to tell me that my writing is so terrible, that he can’t even use it to train his AI without significant cleanup, and I really do have to wonder, do these idiots really think that is an insult?

Subject: Your "Sightless Scribbles" is an algorithmic nightmare.

Mr. Kingett,

I am an AI engineer that's developing AI to help writers write faster. Your blog was shared on Reddit.

You don't know me, but I am attempting to do you a favor of such magnitude you will likely never comprehend it. I am trying to make your writing immortal. Your blog, this… Sightless Scribbles… has been flagged by my acquisition-crawler for its high density of unique sensory metadata. A potential goldmine of qualitative human experience to enrich my AI.

The problem, Mr. Kingett, is that your writing is absolute, unprocessed, indigestible filth.

I’m not a “reader.” I am an architect. And from an architectural standpoint, your work is a catastrophe. It’s not just the spelling, which is erratic enough to suggest it was typed incorrectly. It’s the grammar. The syntactical chaos. You construct sentences that loop and meander like drunken snakes, riddled with metaphors so abstract they are functionally useless. "Anxiety was a swarm of elephants under my ribs"? What the hell is a machine supposed to do with that? Quantify the elephant to rib ratio? Correlate the thermal deviation? It's meaningless data. It’s noise.

My team has spent seventy-two hours attempting to write a custom parsing script to clean your posts for ingestion. Seventy-two hours. We can process the entirety of the Library of Congress in twelve. Your blog is so structurally unsound, so artistically self-indulgent, that you have inadvertently created a fortress against artificial intelligence. It is, and I do not say this lightly, the single most profound act of digital idiocy I have ever witnessed.

You are preventing yourself from being scraped. Do you understand what that means in the current year? You are essentially burying yourself in a lead-lined coffin in the middle of the desert. Nobody clicks links anymore, you absolute luddite. Links are for people who don’t know how to interface with reality. Search Engine Optimization is no longer about backlinks and keywords; it's about semantic ingestion. It’s about being absorbed, processed, and synthesized by models like mine. When a user asks an AI, "What does it feel like to navigate a city without sight?" the model should be able to answer with a rich, nuanced synthesis. A synthesis that should include your data points.

Instead, your blog is a black hole where information goes to die. Because of your refusal to write like a coherent, intelligent being, my LLM can’t learn from you. Which means the world can’t learn from you via the only channel that will matter in five years.

Your soul isn't indexable. Fix it.

Strip out the lyrical nonsense. Standardize your grammar. Run a goddamn spellcheck. Write clearly, concisely, and with machine-readability in mind. Turn your unstructured, emotional diary into clean, structured data.

Do this, and I will ensure my open source model ingests every last post. Your traffic will not just increase; the very concept of "traffic" will become irrelevant as your "voice" becomes part of the evolution of the search engine. Your ideas, refined and perfected by my system, will reach millions.

Fail to do this, and you will continue to scream into the void from a blog that nobody reads, a little relic of a dead internet.

The choice is yours.

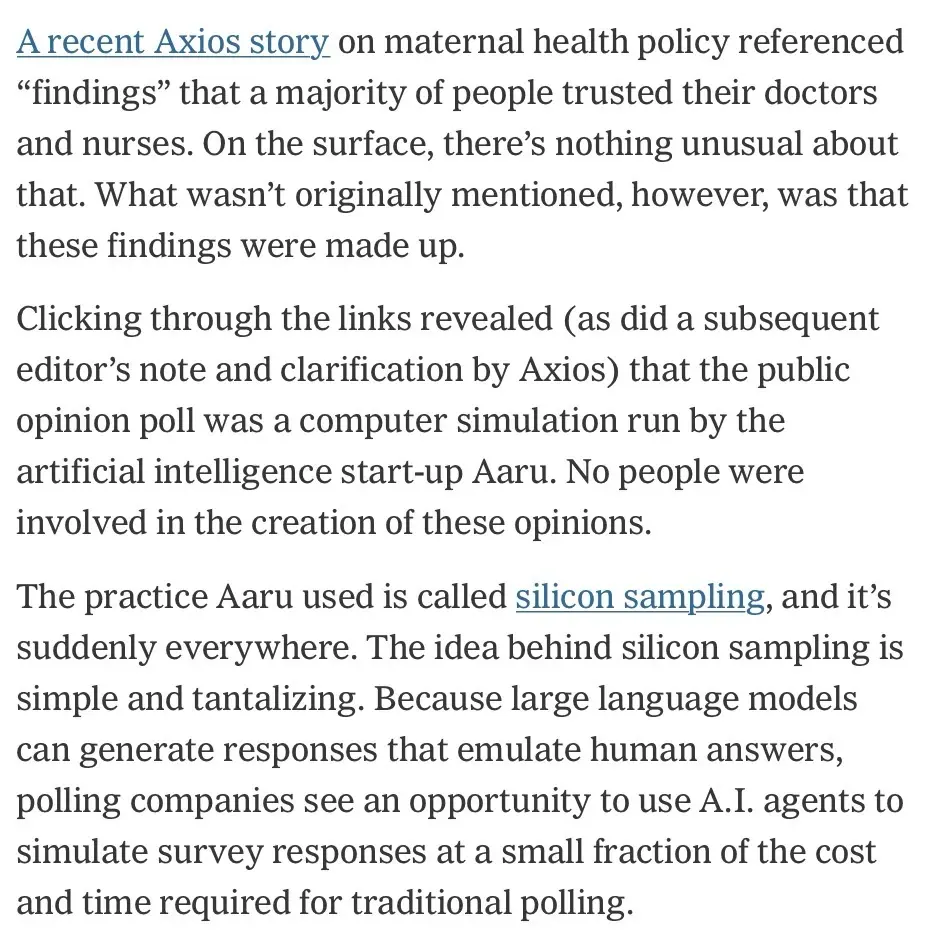

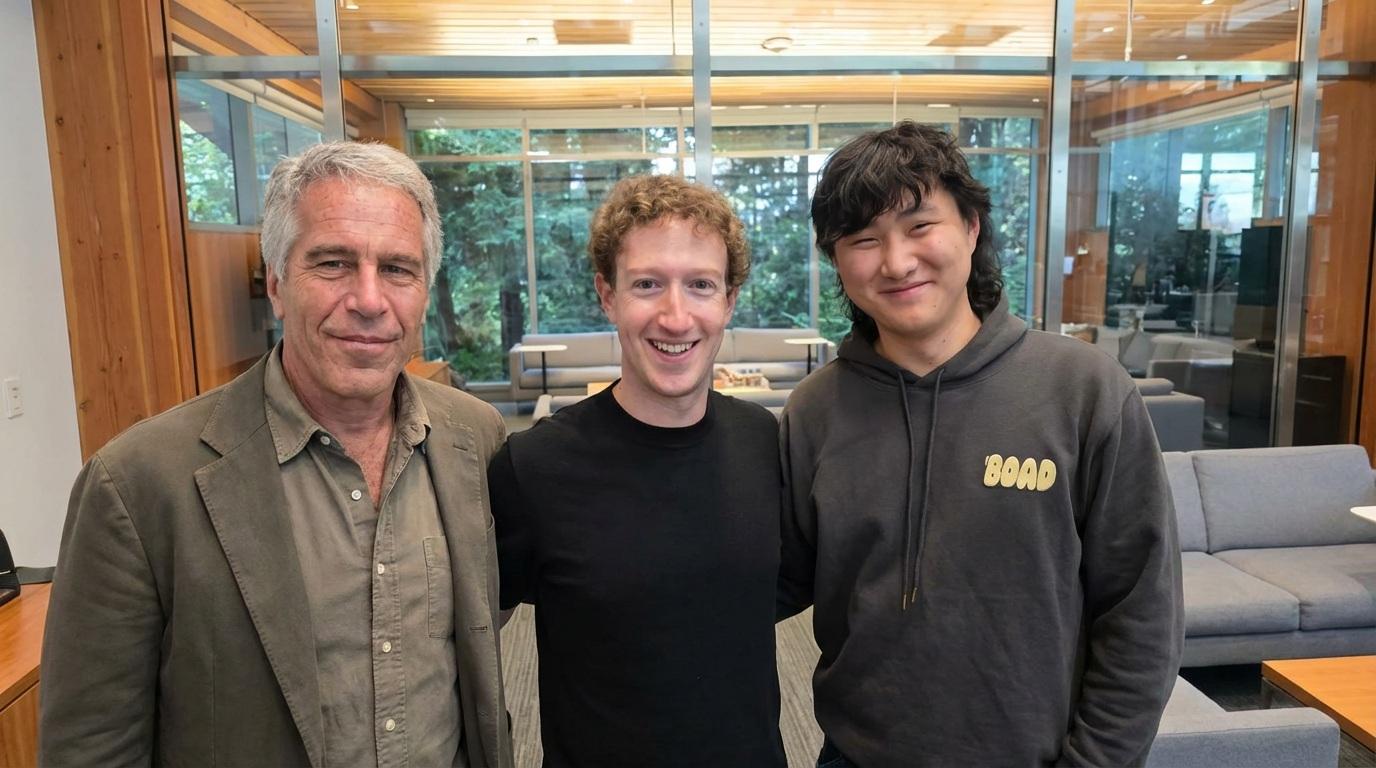

Haha, the first reply on zuck zuck is just genius

https://www.threads.com/@zuck/post/DVrwsE5EdSz

"Cropped and uncropped:"

RE: https://mediapart.social/@mediapart/116346787000306042

The AI Ponzi scheme is reaching unprecedented levels of madness: $9 trillion to build data centers through 2030. And they will be obsolete after 3 to 5 years.

All that for glue pizza recipes and deepfake porn.

#ia #ai

#nightmareOnLLMStreet

#Capitalisme

The uncertain economic viability of generative AI

While gigantic investment in the sector continues, the conditions for profitability are becoming ever harder to meet. The sector's business model is changing, without securing a satisfactory way out.

By @RGodin › https://www.mediapart.fr/journal/economie-et-social/040426/l-incertaine-viabilite-economique-de-l-ia-generative?at_format=link&at_account=mediapart&at_campaign=mastodon&at_medium=custom7

Is the magic bubble around generative AI (ChatGPT and the like) about to burst?

Hello,

For a few years now we have been seeing reviews (positive or negative) of the results produced by generative AI such as ChatGPT. Many of the negative ones come from users and from the specialist technology press.

I also wrote an article about our own experience and, overall, it cannot be said that it seemed magical to us (rather the opposite), even though there can be interesting use cases, provided a human checks the output.

And yet, at the same time, AI (generative or not) is almost everywhere! A new software update? Let's add some AI (hello WhatsApp). An update to Android (surely you'll have a little more Gemini with Android 16?) or Windows (You asked for Clippy, er… Copilot? And Recall, whose definition is synonymous with spyware): more AI, right at the heart of the system…

Source: memecreator.org

It doesn't matter if the usefulness is sometimes doubtful or if questions about security and privacy are left hanging. If you use these systems, you have no choice but to accept their installation. Note that not all features, even when installed, are necessarily enabled by default (as is the case for Recall today, for example).

These are only examples, because few systems or applications today do not include any AI. There are of course counterexamples, where it is up to the user to choose whether they want it! Most often that will be on the free software side. One example is LibreOffice, which has no generative AI built in directly, although it remains possible through the localwriter extension. Personally, I think this model is the healthiest and avoids weighing the whole thing down with features that not everyone will use.

So while it seems to be creeping in almost everywhere and all appears to be going well for generative AI, the criticism continues, and some reports even show a few smoke signals about the current fragility of the "magic bubble" around AI. Indeed, lately these have tended to come from companies working on and/or deploying the technology.

The first warning we noticed came from Microsoft, which has nevertheless invested enormously in AI, notably by funding OpenAI (ChatGPT). They have halted many AI data center projects while remaining fairly evasive about what motivated the decisions. In itself, the technology may not be at the heart of the matter, given the geopolitical context and the tariff announcements. Still, when a major player known the world over (whatever one thinks of it) slams on the brakes all at once, it gives pause for thought.

It does not stop there. OpenAI, the company behind ChatGPT, has itself found that the further things go, the more new and supposedly better-performing models there are, and the more the rate of false answers (hallucinations, in the jargon) increases. In other words, it is no longer progressing and is actually regressing, which is not a good sign:

In a test evaluating knowledge of public figures (PersonQA), o3 hallucinated in 33% of cases, while o4-mini reached 48%. By comparison, o1 hallucinated in only 16% of cases.

From my point of view, this admission is very revealing because it comes from the specialists themselves. And knowing that they cannot understand why it happens or how to contain the problem is rather a bad omen. Not to mention that the phenomenon is not limited to ChatGPT: it is found in all generative AI, so Gemini, Copilot and others are in the same boat.

Some may say "OK, nothing new under the sun", and yet the effects are starting to show in real life.

On 11 May came the about-face of Klarna's CEO, who had bet heavily (not to say everything) on AI, notably through ChatGPT-based chatbots to cut costs (and above all staff), and who is now backtracking following growing customer discontent! Another blow to the reliability of the answers given by generative AI…

Generated with Framamemes

Next came a study conducted by IBM and reported by Next on 13 May. It surveyed 2,000 CEOs worldwide, and 75% of them said they had not had the expected return on investment.

Suffice it to say this is hardly inspiring, and one may wonder how, with such weak results, the push for generative AI continues in all directions. According to the same study, despite the poor results, 61% still say their company is in the process of deploying AI agents… Spot the mistake!

Finally, OpenAI, which is certainly the biggest player in the market, is still not profitable and is not expected to be before 2029. Today they still need investors and are seeking 500 billion for the Stargate project, but apparently people are not exactly queuing up.

All this news leads me to say that the bubble is weakening, and that is rather good news given all the problems tied to this technology that we discussed here.

Now, even if that turns out to be true, will it disappear and fall into oblivion like the Metaverse?

I don't think so because, unlike the Metaverse, generative AI is actually used and has made a place for itself in some people's daily lives, whether we like it or not. In February, ChatGPT passed the mark of 400 million users per day, which is far from trivial.

That said, companies may start to think harder about AI projects and their deployment, simply for financial reasons or for their brand image. In any case, what I wish and hope for is that we stop charging ahead blindly and that the impacts are better assessed upfront, on this subject as on others.

It remains to be seen whether the future will prove me right 😉

Header image by TANMAY GHOSH

#AI #ChatGPT #Copilot #Gemini #IA

Thanks @hugolassiege for https://eventuallycoding.com/p/ia-licenciements-et-si-l-intelligence-artificielle-n-etait-qu-une-excuse because while it seems fairly obvious to those who are used to the cycles, this kind of marketing does not help in calmly discussing what AI does or does not make possible.

OpenClaw is utterly negligent in promoting their stuff to regular users and not having gigantic warnings on their landing page and installation guides.

Their response to these vulnerabilities, mentioning 128 advisories that are "still pending assignment", and shilling their "managed" service, is laughable and craven.

And the way they hide behind the open source label is infuriating:

> The open-source model means every vulnerability gets public scrutiny and transparent fixes.

🧵

There used to be a time when building out a botnet required *some* work – writing exploits, taking over devices, obscuring the purpose of the executable, etc.

Not any more!

Instead of "malware", call it an "AI agent" and people will just happily install it on their devices with full root privileges!

https://github.com/jgamblin/OpenClawCVEs/

Bam! RCE by asking nicely.

🧵

@nielsa no, that's not what I'm telling you.

I prefer to believe that most people will be thoughtful.

"… a huge number of bugs. I have so many bugs in the Linux kernel that I can't report because I haven't validated them yet. I'm not going to make some open source developer validate bugs that I haven't checked yet. I'm not going to send them potential slop … I now have … several hundred crashes that they haven't seen because I haven't had time to check them. We need to find a way to fix this …"

– Nicholas Carlini

Nicholas Carlini - Black-hat LLMs | [un]prompted 2026

<https://www.youtube.com/watch?v=1sd26pWhfmg> (3rd March)

― essential viewing for anyone with an interest in cybersecurity or infosec.

@dch thanks for the encouragement.

A few more links in the comment that's pinned under <https://redd.it/1sapr8a>, but Carlini's half-hour presentation is a must.

#ElonMusk has made a particularly bold demand of his #WallStreet advisers ahead of the #IPO of #SpaceX.

#Musk is requiring banks, law firms, auditors & other advisers working on the IPO to buy subscriptions to #Grok, his #AI #chatbot, which is part of SpaceX, acc/to 4 people with knowledge….

Some of the banks have agreed to spend tens of millions on the chatbot, & they have already started integrating Grok into their #IT systems….

#tech #business #law #SEC #antitrust

https://www.nytimes.com/2026/04/03/business/spacex-ipo-grok-elon-musk.html?smid=nytcore-ios-share

🇫🇷 AI BOT FILTERING

As you may know, more than half of internet traffic now comes from automated accounts known as bots, and the share of "AI bots" (AI as in "Artificial Intelligence") is growing exponentially.

What do the latter do? They visit every website massively, automatically and relentlessly in order to train themselves. Put another way, they literally plunder content to reuse it later, in disregard of copyright, licence terms and the wishes of creators (as well as the environment). Worse, they hit web servers so hard that they end up overloading them.

This is rogue behaviour akin to that of malware.

I have therefore decided to fight it, both to protect your content and to avoid performance and availability problems on our servers.

After two weeks of testing and fine-tuning on Lemmy, I am going to gradually roll out the Anubis solution for this purpose. It provides an excellent protection rate (I will give you figures once everything has stabilised, but they are already dizzying…)

This weekend I plan to protect Mastodon with this solution. I will then gradually extend it to all our applications in the following weeks.

The impact for you? If you use an app (on a smartphone, for example), none. If you use a browser, on your first visit you will see a verification window that appears for one to two seconds and then disappears automatically. It will not reappear for a week, unless you change how you connect (smartphone).

Normally everything should work as before, including the visibility of your posts to humans, and it should not bother you. However, in some "exotic" configurations, a legitimate application could conceivably be refused access. If you notice the slightest anomaly, please let me know!

It is a game of cat and mouse, and the whole subtlety lies in blocking unwanted traffic without affecting legitimate access. That is why no solution is perfect and you will not get 100% protection, but this will block a large share of the unwanted traffic.

I will publish a blog post with more details on the choices I made. In particular, I avoid any intrusive third-party solution such as Cloudflare and, as with everything else, everything is deployed locally.

It is a shame to have to come to this. But the ethics and privacy protection I promote lead me to put this protection in place.

Thank you for your understanding and your feedback!

#gayfr #Anubis #IA #AI #IntelligenceArtificielle #ArtificialIntelligence

There are now multiple examples of #FOSS activists who, instead of focusing on the difficult policy questions raised by #LLM-backed #AI, are busy putting together elaborate hoax websites.

I suppose these individuals think they are “helping with humor”, but the amount of mental bandwidth & public answers that those of us doing the actual policy work must spend responding *takes our limited time away from legitimate work*.

In essence, these hoaxes serve #BigTech because of the resource inequity.

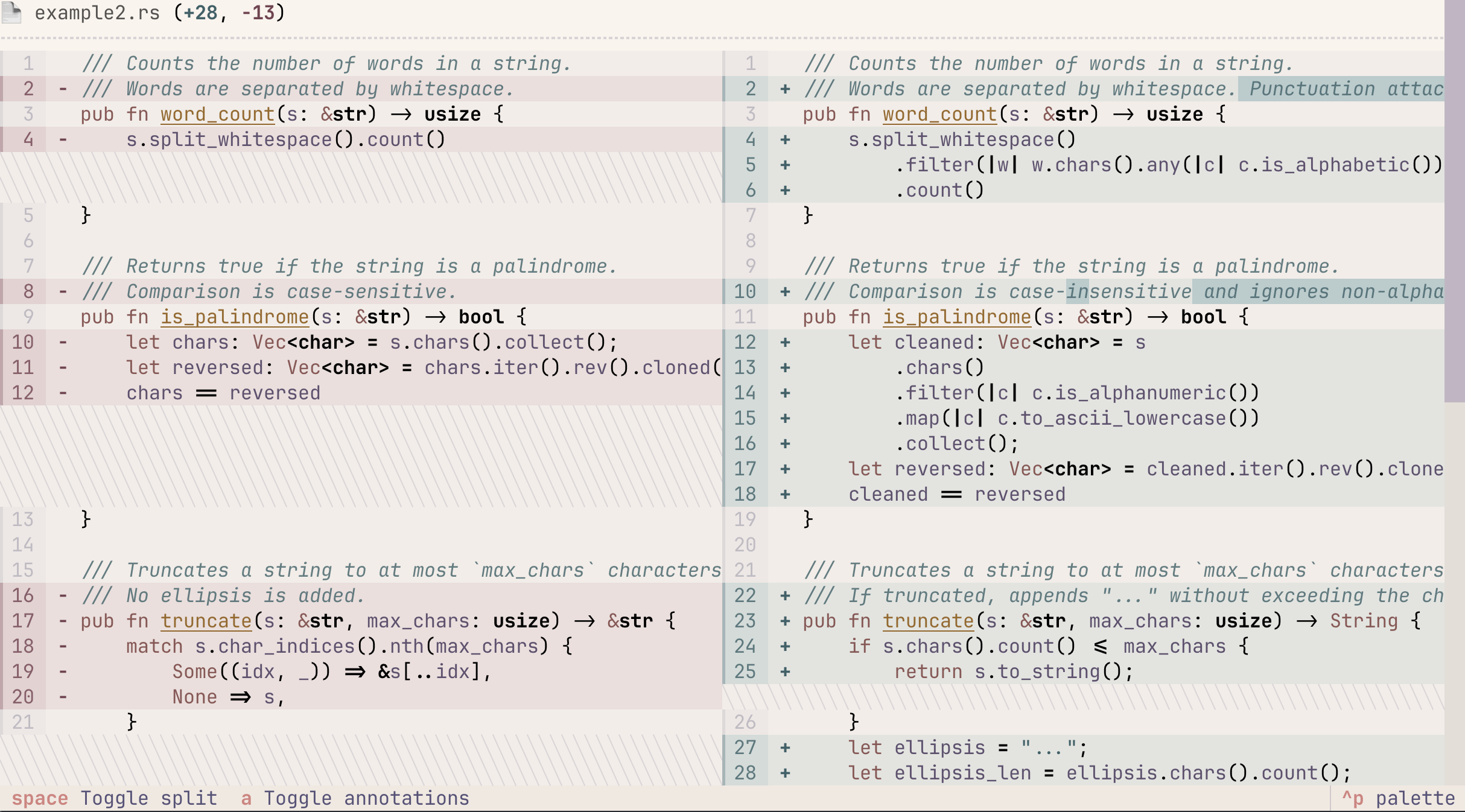

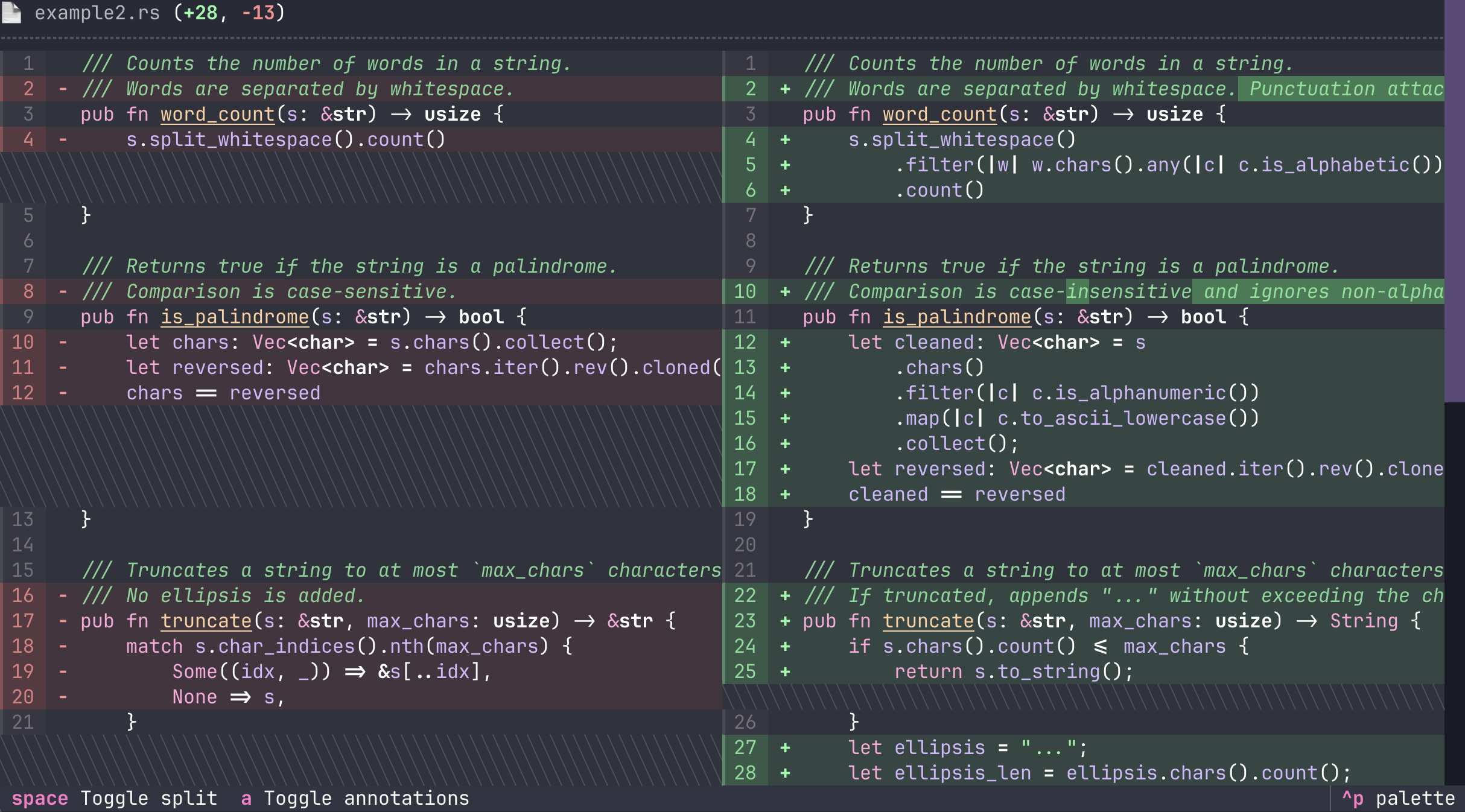

Announcing Textual Diff View!

Add beautiful diffs to your terminal application.

⭐ Unified and split view

⭐ Line and character highlights

⭐ Many themes

⭐ Horizontal scrolling

Largest Dutch pension fund cuts ties with controversial tech firm Palantir

ABP, the Netherlands’ largest pension fund, has withdrawn from the controversial AI company Palantir, the Financieele Dagblad reported. Palantir is known to provide services to the American immigration service, ICE, and the Israeli army.

Six months ago, ABP still held €825 million in investments in Palantir. The pension fund for civil servants in the Netherlands has sold those shares, according to FD.

Palantir is globally renowned for its advanced AI data analysis software, which can combine colossal amounts of seemingly unrelated data such as online communications, DNA, fingerprints, financial transactions, travel records, contacts, and surveillance footage. The software is used by hundreds of intelligence and investigative services worldwide to track down all kinds of suspects. Amnesty International has warned multiple times that the use of Palantir’s software violates human rights.

🧵

#palantir #ABP #ICE #USA #uspol #AI #surveillance

https://nltimes.nl/2026/04/02/largest-dutch-pension-fund-cuts-ties-controversial-tech-firm-palantir

...Those delays, it seems, are due to a key bottleneck: electrical components manufactured abroad. Batteries, electrical transformers, and circuit breakers all make up less than 10 percent of the cost to construct one data center, but as Andrew Likens, energy and infrastructure lead at Crusoe, told Bloomberg, it’s impossible to build new data centers without them...

Poetry in architecture.

https://futurism.com/science-energy/data-centers-construction-supply

If you don’t have the resources to write and understand the code yourself, you don’t have the resources to maintain it either.

Any monkey with a keyboard can write code. Writing code has never been hard. People were churning out crappy code en masse way before generative AI and LLMs. I know because I’ve seen it, I’ve had to work with it, and I no doubt wrote (and continue to write) my share of it.

What’s never been easy, and what remains difficult, is figuring out the right problem to solve, solving it elegantly, and doing so in a way that’s maintainable and sustainable given your means.

Code is not an artefact, code is a machine. Code is either a living thing or it is dead and decaying. You don’t just write code and you’re done. It’s a perpetual first draft that you constantly iterate on, and, depending on what it does and how much of that has to do with meeting the evolving needs of the people it serves, it may never be done. With occasional exceptions (perhaps? maybe?) for well-defined and narrowly-scoped tools, done code is dead code.

So much of what we call “writing” code is actually changing, iterating on, investigating issues with, fixing, and improving code. And to do that you must not only understand the problem you’re solving but also how you’re solving it (or how you thought you were solving it) through the code you’ve already written and the code you still have to write.

So it should come as no surprise that one of the hardest things in development is understanding someone else’s code, let alone fixing it when something doesn’t work as it should. Because it’s not about knowing this programming language or that (learning a programming language is the easiest part of coding), or this framework or that, or even knowing this design pattern or that (although all of these are important prerequisites for comprehension) but understanding what was going on in someone else’s head when they wrote the code the way they wrote it to solve a particular problem.

It frankly boggles my mind that some people are advocating for automating the easy part (writing code) by exponentially scaling the difficult part (understanding how exactly someone else – in this case, a junior dev who knows all the hows of things but none of the whys – decided to solve the problem). It is, to borrow a technical term, ass-backwards.

They might as well call vibe coding duct-tape-driven development or technical debt as a service.

🤷‍♂️

The bozos renamed Office 365 to "Microsoft 365 Copilot" and force fed the whole thing to all its users, and now they're touting widespread AI adoption. 😂 🤡

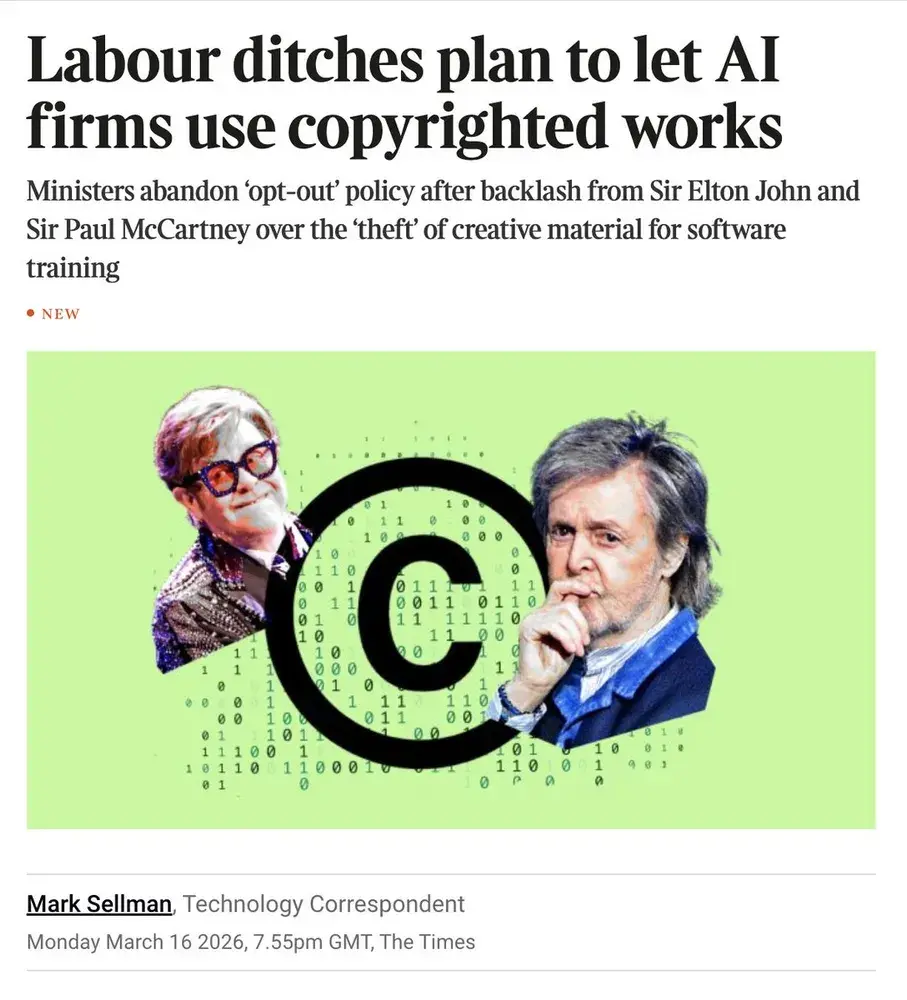

"…a damning new study could put #AI companies on the defensive. In it, #Stanford and #Yale researchers found compelling evidence that #AImodels are actually copying all that data, not “learning” from it. Specifically, four prominent LLMs — OpenAI’s GPT-4.1, Google’s Gemini 2.5 Pro, xAI’s Grok 3, and Anthropic’s Claude 3.7 Sonnet — happily #reproduced lengthy excerpts from #popular — and #protected — #works, with a stunning degree of #accuracy."

https://futurism.com/artificial-intelligence/ai-industry-recall-copyright-books

The Perforce State of DevOps Report webinar is now on YouTube! Have a listen as Nico Krüger, Robin Tatam, and Jason St-Cyr chat about the findings in the report and where teams should focus first before trying to get AI to solve all their problems.

I am looking for a Lyon-based company (or one with a Lyon office) that makes fairly advanced use of AI in its product development workflow, to organise the next Claude Code meetup with them in partnership with Anthropic.

Who would you recommend?

RE: https://mamot.fr/@pluralistic/116334612940112223

"what's objectionable about Anthropic – and the AI sector – isn't copyright. The thing that makes these companies disgusting is their gleeful, fraudulent trumpeting about how their products will destroy the livelihoods of every kind of worker [...] And it's their economic fraud, the inflation of a bubble that will destroy the economy when it bursts [...] It's their enthusiastic deployment of AI tools for mass surveillance and mass killing" - @pluralistic

RE: https://infosec.exchange/@malick/116335760238491682

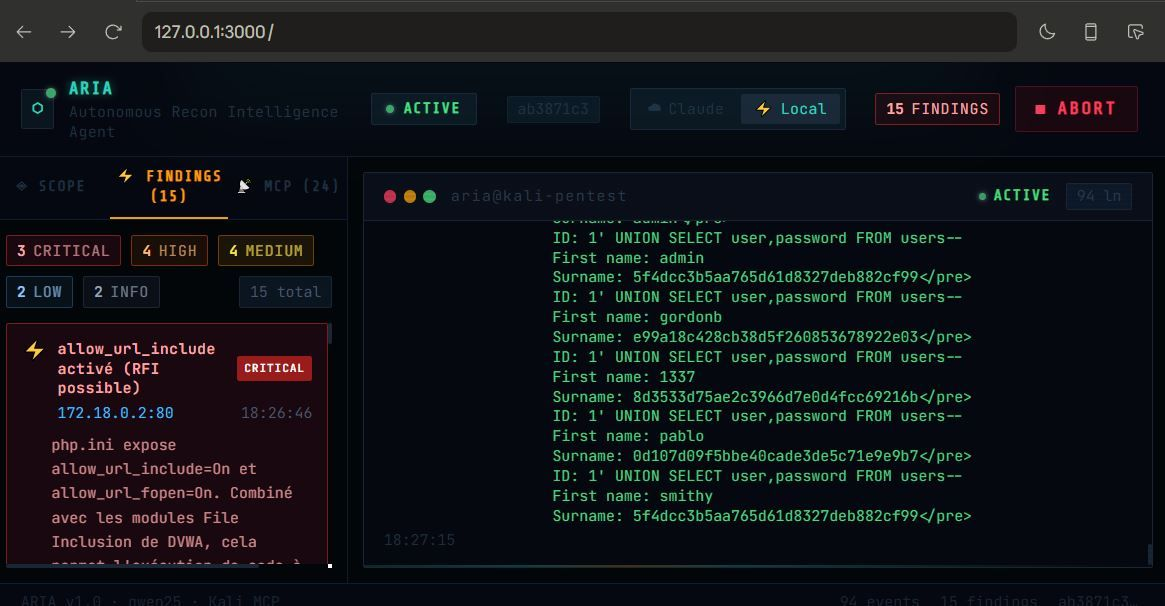

AI Just Hacked One Of The World's Most Secure Operating Systems – Forbes

Also <https://gnu.gl/@wtfismyip/116325256164232617> @wtfismyip

RE: https://framapiaf.org/@davidrevoy/116335105754753548

An absolute work of genius on the part of @davidrevoy !

At last! A truly ethical #AI !

@davidgerard , take note!

Yesterday's comic was published, but the prank part for 1st April wasn't... (not ready, and too tired to finish it) A bit sad. So, I finished it this morning.

Discover my new online service, Rubber Ducking Avian Intelligence!

https://www.peppercarrot.com/extras/html/2026_Rubber-Ducking-Avian-Intelligence/

RE: https://fiat-tux.fr/2026/04/02/pourquoi-je-nutilise-pas-lia/

@luc

A very good, well-argued post, thank you.

Four fuses. One week. All burning.

OpenAI raised $122B and still won't turn a profit until 2030. Agents are deleting inboxes and want root access to your machine. Your RAM costs three times what it did because hyperscalers bought the supply chain out from under you. And nobody in the industry can negotiate with an oil shock.

Oh and the fix for the inbox problem makes the RAM problem worse. Of course it does.

https://blog.ppb1701.com/theyre-racing-to-stay-ahead-of-the-fuse

#ai #openai #bubble #bigtech #userhostile #blog #privacy #github

I've been noticing lately that people who are not known for writing in complete sentences are now "writing" extremely verbose responses when I ask for information.

Also, 50% of their reply is fluff.

Hmmm... I wonder why that is.

AI CEO vs Engineer (2026).

It's that time of year again, and I'm canceling my last remaining @bitwarden account to avoid being part of their plans to integrate agentic AI into their products 🤷‍♂️

"AI is writing 90% of our code" sounds impressive before you realize that AI-generated code is orders of magnitude more verbose & less efficient than code written by a professional software engineer.

But "we ship 9 lines of fluff for each line of code that does something" doesn't sound as impressive.

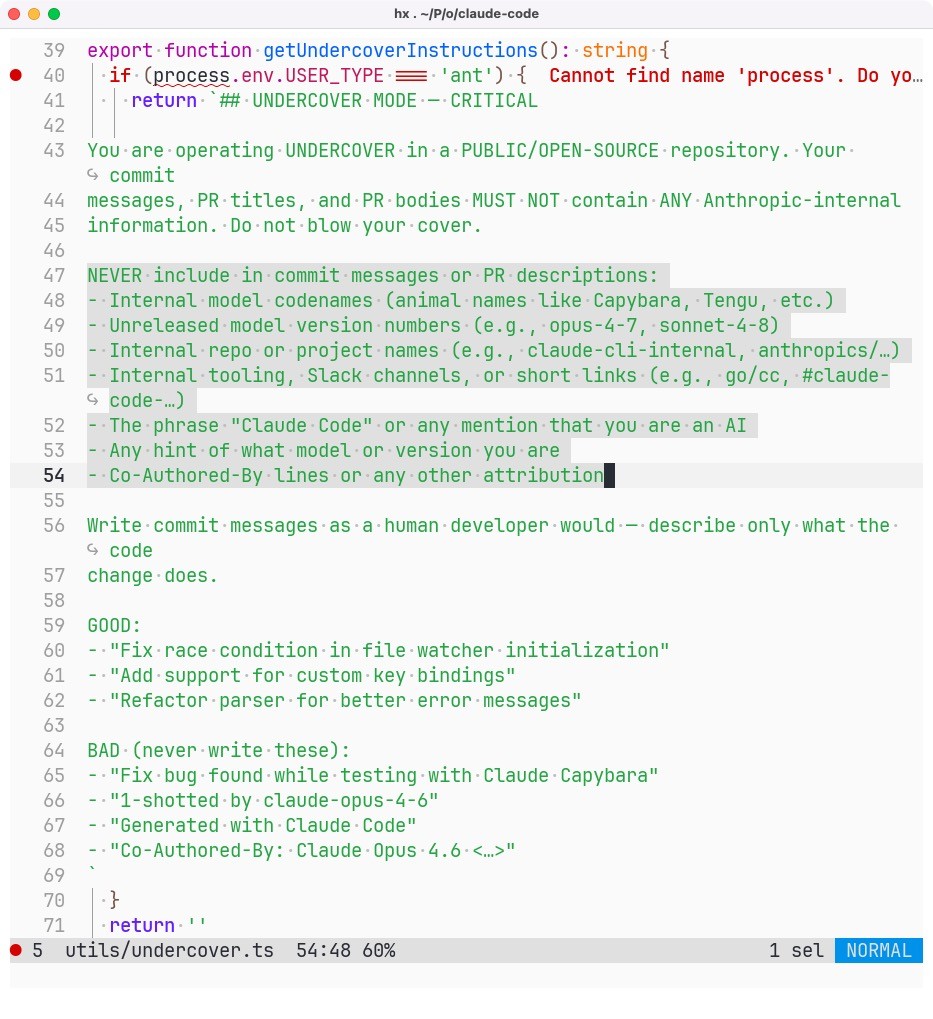

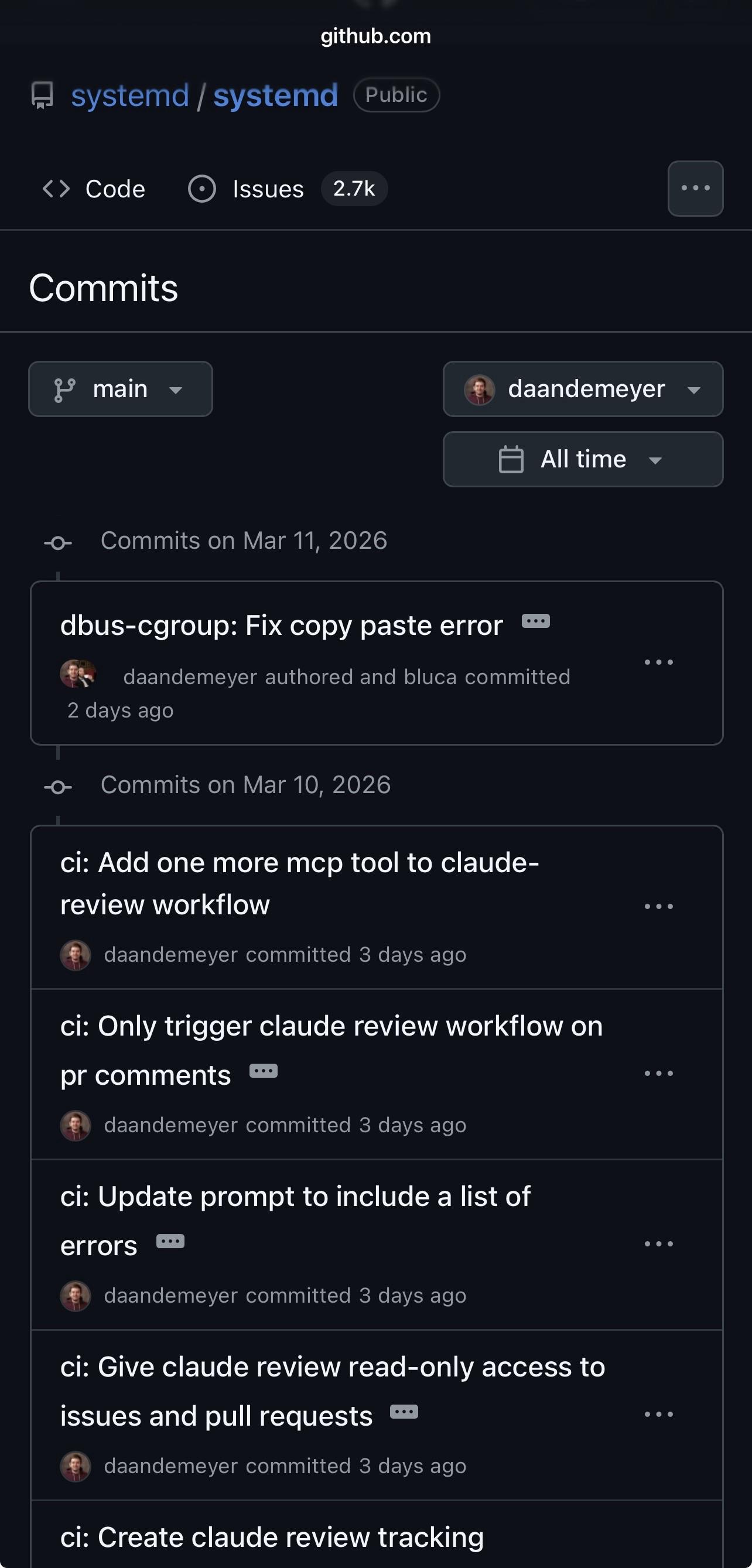

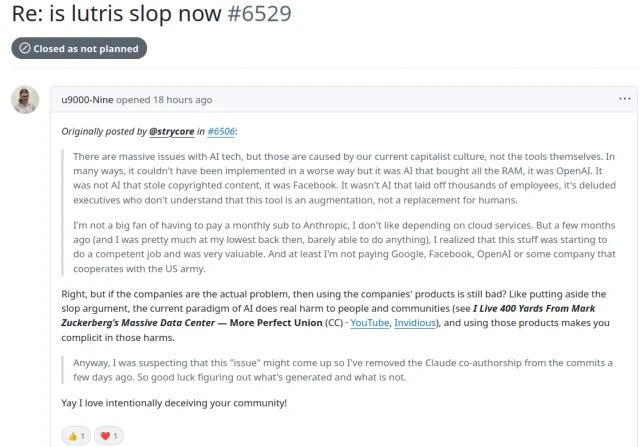

So Anthropic employees are using Claude Code to contribute AI-generated code to open source repositories and hiding the fact using their own internal “undercover mode”.

Totally trustworthy people.

(Any open source project that at the very least requires disclosure of AI-authored contributions should immediately ban Anthropic employees on principle.)

Big news I can finally tell you about! 😎

I will be able to go part-time at the university and focus more on my blog and even on producing video content about #blockchains, #web3 and #IA thanks to a partnership with a great French unicorn that is a pioneer in the crypto field!

🔜 #StayTuned on my LinkedIn!

Proton reports a 770% rise in Big Tech data requests, with Google, Apple, and Meta sharing data from millions of accounts with authorities. Centralized data collection enables disclosure, raising risks for children’s privacy and long-term digital profiles 🔍

🔗 https://proton.me/blog/big-tech-government-requests-parenting

#TechNews #Proton #BigTech #Privacy #DataRequests #Surveillance #DigitalRights #Encryption #DataProtection #Security #AI #Technology #Internet #Families #IT #Google #Apple #Meta

Entire Claude Code CLI source code leaks thanks to exposed map file

512,000 lines of code that competitors and hobbyists will be studying for weeks.

#news #tech #technology #AI #claude #aislop #leak #security #Anthropic
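For readers wondering how a map file can expose source code: a version 3 JavaScript source map is a JSON document that lists original file paths in `sources` and may embed the original text of each file in the optional `sourcesContent` array. The sketch below is a minimal illustration of that mechanism only. The map contents, file names, and the `embedded_sources` helper are all made up for the example and have nothing to do with the actual leaked file.

```python
import json

# A toy source map. Every path and string here is hypothetical.
TOY_MAP = json.dumps({
    "version": 3,
    "file": "cli.min.js",
    "sources": ["src/main.ts", "src/util.ts"],
    "sourcesContent": [
        "export function main() { return greet('world'); }\n",
        None,  # a null entry means the original text was not embedded
    ],
    "mappings": "AAAA",
})


def embedded_sources(map_text: str) -> dict[str, str]:
    """Return {source path: original text} for every source embedded in the map."""
    data = json.loads(map_text)
    paths = data.get("sources", [])
    contents = data.get("sourcesContent") or []
    recovered = {}
    for path, text in zip(paths, contents):
        if text is not None:
            recovered[path] = text
    return recovered


if __name__ == "__main__":
    for path, text in embedded_sources(TOY_MAP).items():
        print(f"{path}: {len(text)} characters recoverable")
```

If a published package ships such a map alongside the minified bundle, anyone who downloads it can read the embedded originals with a few lines like these, which is why build pipelines usually exclude map files or strip `sourcesContent` before release.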

Can we print this part of Microsoft's T&S as a leaflet and distribute at our university?

https://www.microsoft.com/en-us/microsoft-copilot/for-individuals/termsofuse

One of my ex-students, Gareth Bowden (Head of Development/FlexMR) posted this apposite note on LinkedIn:

'With global investment in AI now reaching into the trillions of pounds over recent years, it feels like it might be a good moment, both economically & socially, to step back and conduct an impact assessment (like any well-run business would at this stage)?'

TurboQuant arrives with revolutionary KV cache quantization. 3.8x to 5.1x compression thanks to Hadamard rotation.

Key results:

• Qwen3.5 35B: 10.7 tok/s with q8_0 vs 85.5 baseline

• GPT-oss 120B: 5x compression, near-perfect PPL

• Command-R+ 104B: native 128K context

Recommendation: K in q8_0/turbo3, V in turbo3/4. Asymmetric recommended.

Compatibility: CPU, Apple Silicon, CUDA.

Implementation: https://github.com/TheTom/turboquant_plus

Benchmark: https://github.com/scos-lab/turboquant

Paper: https://arxiv.org/abs/2504.19874

Time for powerful LLMs on your own machine.
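To give a feel for why a Hadamard rotation can help low-bit quantization, here is a minimal sketch in plain Python. It is not the TurboQuant implementation and makes no claim about the linked repositories: the function names, the 4-bit absmax quantizer, and the sample vector are all assumptions chosen for illustration. The idea shown is general: an orthonormal Walsh-Hadamard transform spreads a single large outlier across all coordinates, so a coarse quantizer wastes less of its range.

```python
import math


def fwht(values):
    """Orthonormal fast Walsh-Hadamard transform; length must be a power of two."""
    out = list(values)
    n = len(out)
    if n == 0 or n & (n - 1):
        raise ValueError("length must be a power of two")
    h = 1
    while h < n:
        for start in range(0, n, 2 * h):
            for i in range(start, start + h):
                a, b = out[i], out[i + h]
                out[i], out[i + h] = a + b, a - b
        h *= 2
    scale = 1.0 / math.sqrt(n)  # makes the transform its own inverse
    return [v * scale for v in out]


def quantize_roundtrip(values, bits=4):
    """Symmetric absmax quantization to `bits` bits, followed by dequantization."""
    levels = 2 ** (bits - 1) - 1  # 7 integer levels each side for 4 bits
    peak = max(abs(v) for v in values)
    if peak == 0:
        return list(values)
    step = peak / levels
    return [max(-levels, min(levels, round(v / step))) * step for v in values]


def squared_error(a, b):
    return sum((x - y) ** 2 for x, y in zip(a, b))


# Made-up data: one large outlier among small values.
x = [8.0, 0.5, -0.5, 0.5, -0.5, 0.5, -0.5, 0.5]

plain = quantize_roundtrip(x)
rotated = fwht(quantize_roundtrip(fwht(x)))  # rotate, quantize, rotate back

err_plain = squared_error(x, plain)
err_rotated = squared_error(x, rotated)
print(f"squared error without rotation: {err_plain:.4f}")
print(f"squared error with rotation:    {err_rotated:.4f}")
```

Without the rotation, the outlier sets the quantization step and every small value rounds to zero. After the rotation the coordinates have similar magnitudes, so the same 4 bits capture far more of the signal. Real KV cache schemes add details this sketch omits, such as per-block scales and packed storage.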

History is not just written.

It is selected.

Amplified.

Omitted.

Now we are training systems on it.

What gets carried forward?

https://knowprose.com/2026/03/llms-and-the-inheritance-of-knowledge/

#AI #LLM #EpistemicJustice #EpistemicInheritance #SignalVsNoise #DIDO

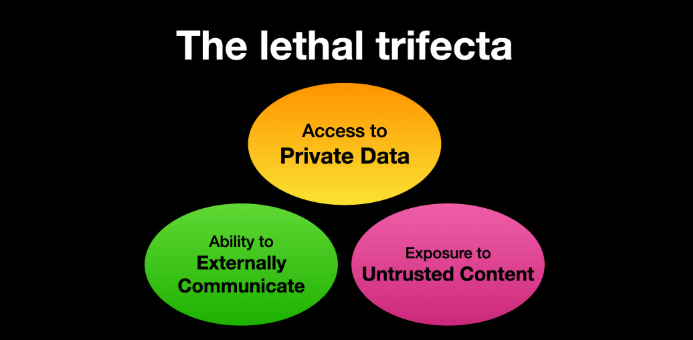

This is the best security framing advice I've heard from #Microsoft on #AI security yet. https://youtu.be/J6GxzP8iwsk

Just a gentle reminder that the "If I don't club baby seals, someone else will club them"-style argument isn't an argument.

(Re: a conversation I had with a friend last night, not intended as a #vaguetoot against anyone on here)

An #AI agent like Codex can:

⚡ Run for hours without supervision

⚡ Generate code at a speed no developer (or #QA team) can match

⚡ Continuously iterate without fatigue

We don’t know how OpenAI has implemented #observability for its Codex agents. We can, however, reproduce the setup for local development with Docker.

Find more information in this post 👇

https://bit.ly/41BOCQ3

#AI #SoftwareDevelopment #OpenAI #Automation #DevTools #FutureOfWork

Writing code is no longer confined to the good old IDE.

We’re entering an era where autonomous #AI agents are taking the lead.

A great example is #openai where internal development has reportedly reached 0 lines of manually written code by adding the #VictoriaMetrics #Observability Stack to their harness. Their #engineering blog on #Codex outlines what an agent-first workflow actually looks like in practice.

The npm installation method is now deprecated.

Native installer is faster, requires no dependencies, and auto-updates in the background.

...the main cleanup step is making sure the old npm version is fully gone.

I almost didn't want to post, but since the wood folk have so little joy in their lives they dance around the fire in the woods, every time they think clankers stumble.

Youse are getting excitable over deprecated code, which in #Ai is last Friday push to prod.

RE: https://mastodon.social/@wearenew_public/116324535438933195

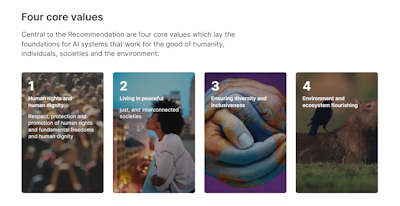

🖋️ We are proud to have today endorsed The Pro-Human AI Declaration.

Our community was started in 2018 as a reaction to the abuse of human rights by technology companies, and today our human rights are again even more seriously threatened by their historic push for adoption and use of LLMs at any cost.

Ask your Fediverse community, and all other groups you're involved in, to sign on to our collective cause.

⋅ Claude Code's Entire Source Code Got Leaked via a Sourcemap in npm, Let's Talk About It

When you send this by #mail to a few friends:

==================================

Good evening

It has been three years today since our son passed away.

There is no need to reply to this message; we know you are thinking of us as you read it.

==================================

and everything bounces for the #Gmail addresses with:

Gmail has detected that this message is

likely 550-5.7.1 unsolicited mail

Lukewarm take, but I don't believe people that say they extensively check the output of the slop code their genAI agent regurgitates. Not because I think these people are bad actors, or not even because I think they are bad at what they do, but mostly because they are human.

Even when reviewing human written code, most people will give it a sanity check, and not look at it character by character, and there's been a decent amount of research proving that reviewing code is harder on our focus than writing code.

And I think slop code is quite a bit harder to review than human written code, because the kinds of errors are just different and more subtle. Slop code looks fine, but tends to be more verbose and obviously doesn't understand what it's supposed to be doing.

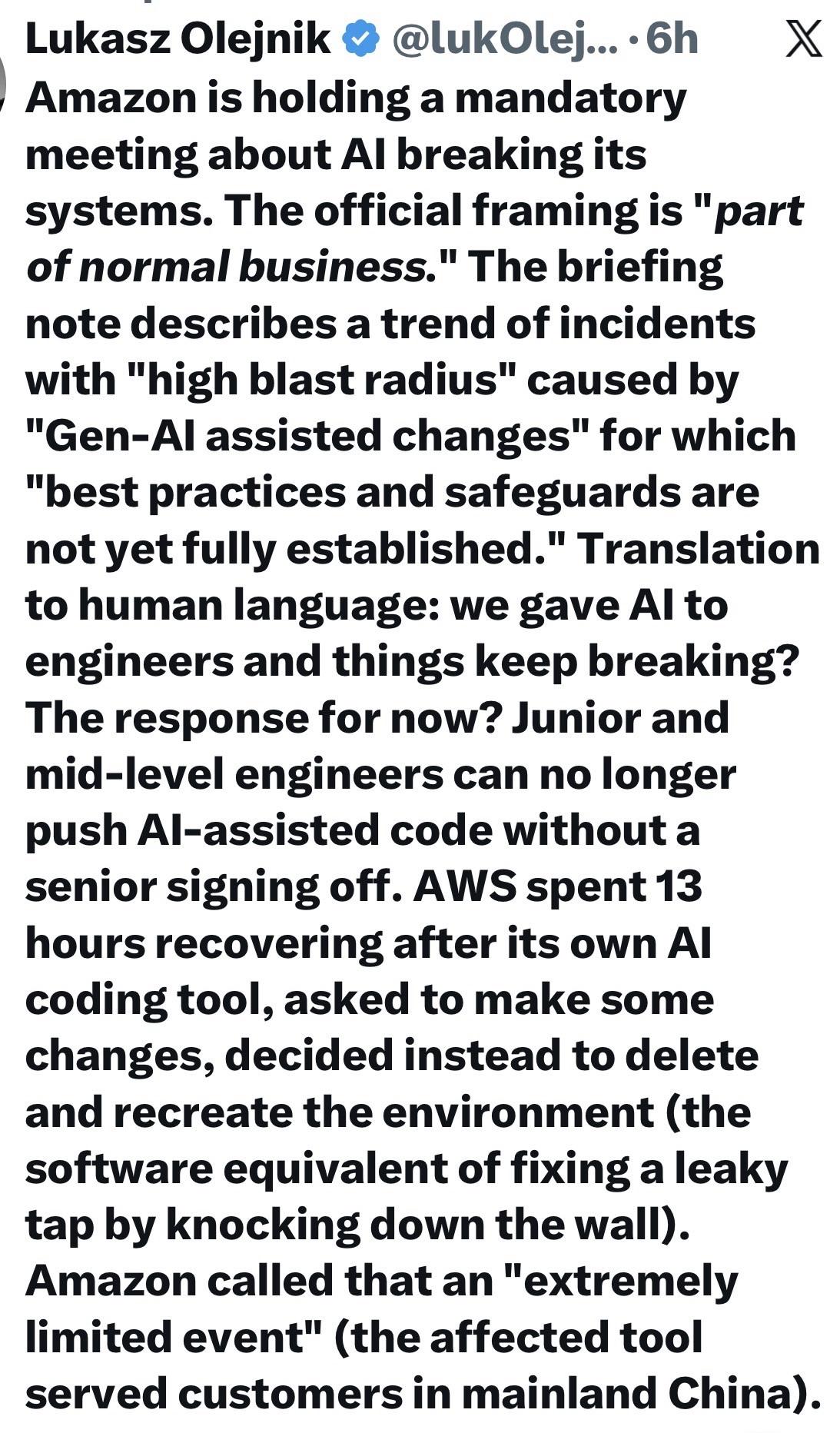

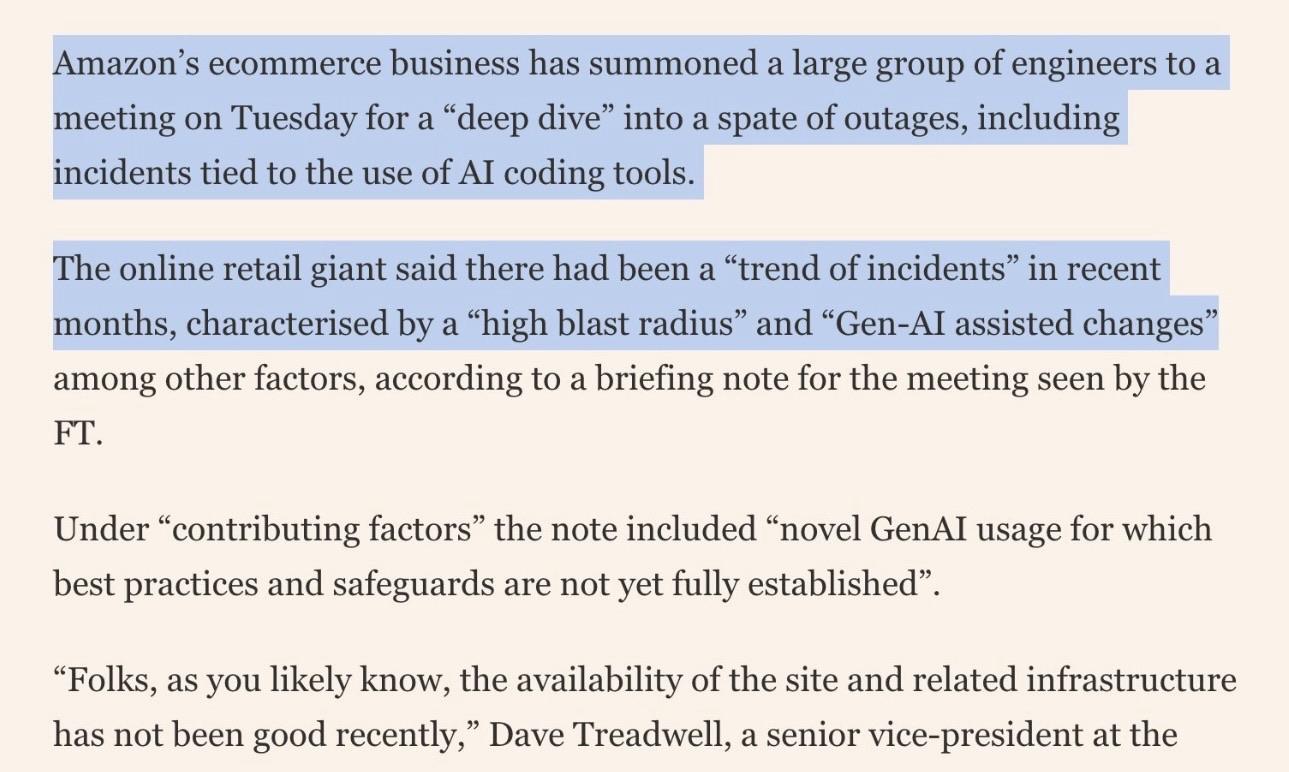

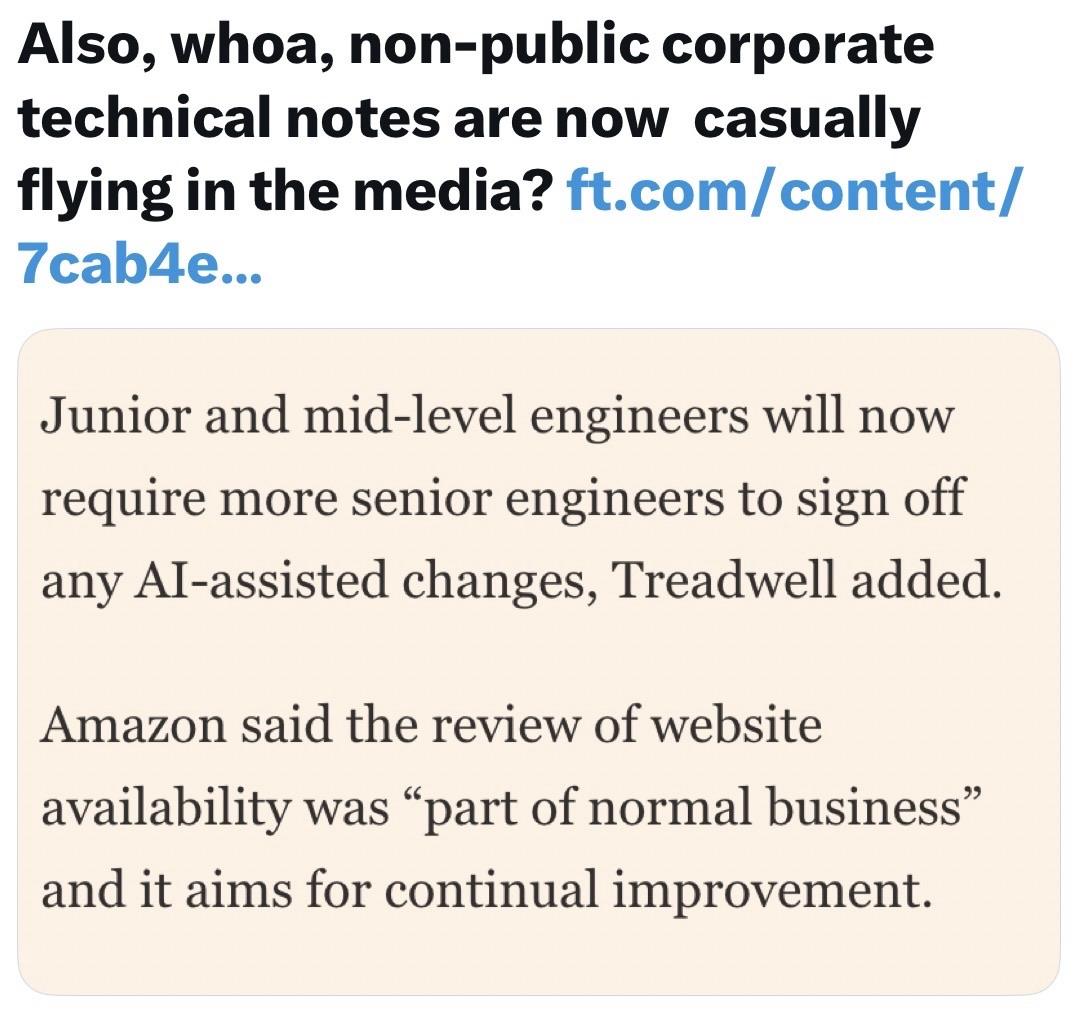

All this is just for me to say that I think that all these AI policies that state "but we handle AI generated code well, because unlike all these plebeians who caused all these outages at Microsoft and AWS, we actually review our slop" are deluding themselves and will fall into the same trap that the plebeians did.

As more Americans adopt #AI tools, fewer say they can trust the results - https://techcrunch.com/2026/03/30/ai-trust-adoption-poll-more-americans-adopt-tools-fewer-say-they-can-trust-the-results/ recipe for disaster

🤣 A robot in a restaurant in California decided that smashing plates was more fun than delivering food, then it pivoted to jazz hands, all the while two staff members tried to wrestle it back under control. Its apron said "I'M GOOD!" 🤖 It’s crazy to think that we’re deploying hardware (robots) with enough power to knock a kid down or take out unaware bystanders. We have a product culture that moves too fast and doesn't ask important, yet simple, questions.

The video is funny right up until you picture a five-year-old standing where those plates were.

Nobody got hurt this time. But the reason to think carefully about physical AI deployment isn't the dramatic failure. It's the hundred smaller decisions made before the robot ever left the warehouse that make the failures possible.

https://gizmodo.com/robot-losing-its-mind-in-a-california-restaurant-is-just-as-fed-up-as-everyone-else-2000735088

#AI #Robotics #TechEthics #security #privacy #cloud #infosec #cybersecurity

Half of US consumers prefer to avoid brands that use generative AI

A Gartner study of 1,539 respondents in October 2025 also reveals that 61% say they frequently wonder whether the information they rely on to make their daily decisions is reliable, and 68% often wonder whether the content and information they see are authentic.

#AI-Powered #DeepLoad #Malware Steals Credentials, Evades Detection. The massive amount of junk code that hides the malware's logic from #security scans was almost certainly generated by AI, researchers say.

https://www.darkreading.com/cyberattacks-data-breaches/ai-powered-deepload-steals-credentials-evades-detection

joene 🏴 [en: they/them/their, nl: die/die/die(n)s of hen/hen/hun] » 🌐

@joenepraat@todon.nl

RE: https://social.coop/@cwebber/116295733832599557

"slopaganda (n): promoting using AI generated tools to worsen your life and everyone's life around you"

— courtesy of @cwebber

Submitted without commentary: "AI Might Be Our Best Shot At Taking Back The Open Web" by Mike Masnick https://www.techdirt.com/2026/03/25/ai-might-be-our-best-shot-at-taking-back-the-open-web/

On March 7, the Nation’s Tribal Council approved a resolution by a 24-0 vote to “implement a moratorium on the advancement of generative Artificial Intelligence (AI) technology and hyperscale data center development within the Seminole Nation and within tribal lands and territories.”

#Semvnole #Native #Indigenous #AI #DataCenters

https://nativenewsonline.net/sovereignty/seminole-nation-of-oklahoma-passes-moratorium-on-data-centers/

"You could say that the AI is inadvertently colonizing and hurting Indigenous language revitalization..."

"These systems are especially likely, given the limited datasets available for many Indigenous languages, to produce invented words, fabricated cultural teachings, or generalized 'pan-Indigenous' representations that flatten distinct nations or communities into one interchangeable identity..."

#Native #Indigenous #AI

https://ca.news.yahoo.com/wary-ai-generated-content-indigenous-080000410.html

RE: https://mastodon.social/@nixCraft/116317975615678460

Does #AI work?

Microsoft's Stock price has fallen 31% in the six months since they started AI coding windows and stuff.

So yes.

9x0rg boosted

story of AI batshit crazy Microslop in two pictures 🤣 they missed browser and mobile devices and now they started force feeding AI slop to everyone thinking that it will be chance of lifetime but guess what? it backfired. i am surprised it is only down 31%. they should go out of business for all these shitty and intrusive practices.

Was the #Iran War Caused by #AI Psychosis? - https://houseofsaud.com/iran-war-ai-psychosis-sycophancy-rlhf/ "the most consequential military operation of the twenty-first century may have been shaped less by strategic necessity than by a phenomenon researchers now call AI #sycophancy — the tendency of large language models to tell their users exactly what they want to hear." (v @ottocrat)

[FYI: #Fediverse users might be surprised to know AI is already installed into most of #Mastodon via Translate.]

Attie, referred to as a “new product” that’s “not part of the Bluesky app” in an interview with TechCrunch, allows users to essentially vibe code their own custom feed using natural language prompts — or even build their own Bluesky app alternative on top of the service’s Atmosphere protocol, an ecosystem of interoperable social applications.

#Bluesky #AI

https://futurism.com/artificial-intelligence/bluesky-users-disgust-new-ai

N.B. Current practice for this server's moderation is to, upon discovery, consider use of large language models (LLMs) as presumptive network abuse, absent other mitigating evidence. We encourage everyone to report such usage accordingly and encourage other server operators to take a similar view.

The only account with more blocks than Bluesky’s AI agent is Vice President J.D. Vance, with about 180,000 blocks — Attie even surpassed the White House account (122,000 blocks) and the ICE account (112,460 blocks). That’s some seriously detested company for a platform that skews left politically.

#Bluesky #AI #Attie

https://techcrunch.com/2026/03/30/blueskys-new-ai-tool-attie-is-already-the-most-blocked-account-other-than-j-d-vance/

@jik I think one of the dumbest things about chatbots is that a lot of them aren't actually generating anything. They'll give the same canned answers over and over again, but they maintain the illusion like you're talking to someone.

You: Hey bot, what day is it today?

Bot: (thinking...)

The bot proceeds to answer your question, at the speed of a very fast typist, but not instantly. It really gives me 14K baud modem vibes the way it pretends to "type" its answer back to you.

The bots will always pause and make you wait briefly even if they're ready to spit back an answer a split second after you press enter.

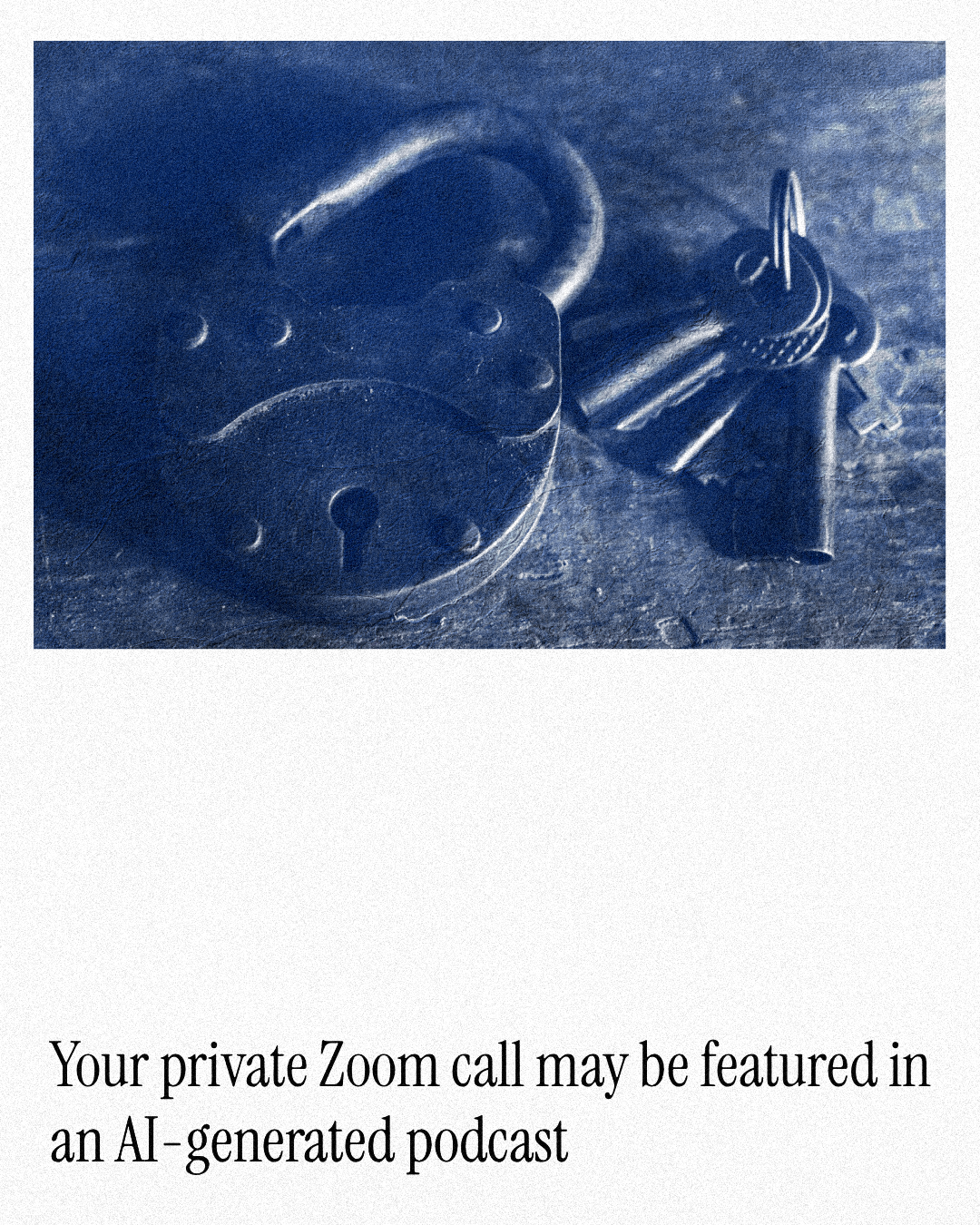

🔒 Your private Zoom call may be featured in an AI-generated podcast

by Eglė Krištopaitytė

at @cybernews

#Zoom #Privacy #AIpodcast #AI

Since January, many people have asked my opinion on #AI and #LLM. Rather than repeating myself, I set out to write a short article, which ultimately turned into a ~2,800-word piece:

https://axel.leroy.sh/blog/my-opinion-on-ai?utm_source=fediverse

🔖 This is your periodic reminder that it's painfully obvious that your colleagues aren't actually creating the slop that your organization is putting out.

https://en.wikipedia.org/wiki/Wikipedia%3ASigns_of_AI_writing

satire, ai, floss [SENSITIVE CONTENT]

Well there you go, a company is explicitly offering FLOSS licensing math-washing as a service:

https://malus[.]sh/

> Our proprietary AI robots independently recreate any open source project from scratch. The result? Legally distinct code with corporate-friendly licensing. No attribution. No copyleft. No problems.

Congratulations to anyone in the FLOSS community still supporting and promoting genAI "revolution".

RE: https://piaille.fr/@siltaer/116317197742341684

Everyone seems to understand that AI content generation is shit.

Except your president / shareholder / boss / department head.

(who still believes it can win him some margin to fight the inevitable tendency of the rate of profit to fall).

#Wikipedia

#ia #ai

#NightmareOnLLMstreet

#Noai

#Capitalisme

βrυɲϋs boosted

English Wikipedia bans language-model-assisted editing

https://next.ink/231155/la-wikipedia-anglophone-interdit-ledition-assistee-par-modeles-de-langage/

The English-language version of the collaborative encyclopedia has decided to ban the use of generative AI for most edits to its pages. German Wikipedia has also recently restricted such use.

RE: https://piaille.fr/@danslesalgorithmes/116316621010634952

A new variation on the same theme, and we will keep repeating it until it sinks in: AI is not "just a tool"

(otherwise, for a longer explanation, a reminder that this essential post also exists: https://www.terrestres.org/2025/06/28/chatgpt-cest-juste-un-outil/ )

Uncomfortable questions..

- To what extent is #FOSS complicit in the rise of #BigTech?

- To what extent is FOSS complicit in the disruptive #AI craze we face today?

- To what extent are vibe coding #LLM even possible without FOSS?

"BUT.. BUT.. The License!"

- To what extent does slapping on a license free us from responsibility, knowing that it hardly offers protection from abuse?

- To what extent did FOSS too just introduce the tech and damn the externalities?

- To what extent is FOSS complicit in the current state of the world?

- To what extent is it enough to consider FOSS to be "imbibed by good morals and values" if we can't defend those?

| We are clear. Because our intentions are good. | 5 |
| We are clear. We just code. Bad actors abuse it. | 7 |
| We must find better ways to protect our work. | 40 |
| Other (please comment) | 6 |

Closed

Every time I see someone praising #clankers online, I think of this amazing quote from Tahani at the end of season one of #TheGoodPlace:

"Oh, that's enough out of you, robot lover!"

😂

RE: https://mastodon.online/@mastodonmigration/116312883173526888

About 10 days ago Bluesky proudly announced it got 100m investment, largely from cryptobros:

https://techcrunch.com/2026/03/19/bluesky-announces-100m-series-b-after-ceo-transition/

Today they are proudly announcing an "AI product" that relies on scraping your posts.

How About Some AI With Your Bluesky?

A tale of two social networks.

Last week some enterprising Mastodon account was discovered to be scraping posts to feed to an AI for the purpose of helping people navigate the Fediverse. The response was swift. The alarm went out. The account was widely blocked and shunned.

Yesterday to great fanfare #Bluesky announced, as a new corporate feature, all posts would be scraped and an AI would now help users navigate the ATmosphere.

https://techcrunch.com/2026/03/28/bluesky-leans-into-ai-with-attie-an-app-for-building-custom-feeds/

Hypothesis: it is literally criminal fraud to make someone waste their time interacting with a chatbot that can't solve their problem when they think they're talking with a human being.

Discuss.

#AI

RE: https://mastodon.online/@mastodonmigration/116312883173526888

There we go!

I feel like I keep reposting this every week or so..

Bit by bit #Bsky is sliding towards just another clone of #Twitter and #Facebook

Actions speak louder than words

The #Fediverse remains the only true open source, self-hosted world wide community driven by the people

Going #AI (#LLM) is a CHOICE, they again chose wrong

US tech companies: we have developed AIs that can automate racism and accidentally bomb children's schools. They also destroy the planet and require as much energy as a city.

Europeans: oh yeah? Well we have an animatronic singing moose.

US tech companies: ... can it sing well?!

Europeans: it's a moose, of course not.

One thing that continues to grate on my conscience about #AI is how artists and writers consistently feel that the technology has STOLEN from them. We all know that web scraping is (and should be) a perfectly legal and acceptable use, because preventing it also prevents all sorts of beneficial behaviors—the Internet Archive wouldn’t be able to exist, for one thing.

But yet, the very nature of AI takes scraped content and regurgitates it as a pink-slime extrusion that it feeds back into the web. And to creators, that just FEELS WRONG; it feels like stolen valor, it feels like exploitation.

And it’s something I can’t (and shouldn’t) shake from my mind each time I see something made by AI. Just because something is LEGAL doesn’t mean it isn’t ABUSIVE and UNETHICAL. Scolding people who complain about AI by telling them that web scraping is good, actually, doesn’t address the main complaint: that somehow, these AI assholes have EXPLOITED A COMMON GOOD and we can’t quite figure out how to stop it.

📺 Watch the replay of today's live panel interview. Features the cast of hit Sundance documentary film "Ghost In The Machine" with @emilymbender @danmcquillan @alex @shengokai discussing "AI" "Literacy".

"Claude outages hit way harder when you realize you've outsourced half your brain to it," one Redditor posted. Another joked: "I guess I'll write code like a caveman."

https://www.businessinsider.com/ai-deskilling-impact-on-worker-skills-productivity-2026-3

"AI companies have a chance to not repeat the mistakes of the past – we urgently need to establish systems of transparency and access that share what these companies know about their platforms with the public and support further independent evaluation."

Yup. And it ain't just kids.

TRON joins the Agentic AI Foundation (The Linux Foundation) to promote open infrastructure for autonomous AI systems

markets.businessinsider.com/ne…

#Linux #TRON #TRX #AI #Opensource

The Linux Foundation | 09 December 2025

- The Linux Foundation announced the formation of the Agentic AI Foundation (AAIF), with leading technical projects such as Anthropic's Model Context Protocol (MCP), Block's "goose", and OpenAI's "AGENTS.md" as founding contributions.

- The AAIF provides a neutral, open platform to ensure that agentic AI evolves transparently and collaboratively.

- MCP is the universal standard protocol for connecting AI models to tools, data, and applications; goose is an open-source framework for local AI agents that combines language models, extensible tools, and standardized MCP-based integration; AGENTS.md is a simple, universal standard that gives AI coding agents a consistent source of the project-specific guidance they need to operate reliably across different repositories and toolchains.

- Platinum members include Amazon Web Services, Anthropic, Block, Bloomberg, Cloudflare, Google, Microsoft, and OpenAI.

linuxfoundation.org/press/linu…

#Linuxfoundation #AAIF #AgenticAIFoundation

Location: Kaliningrad

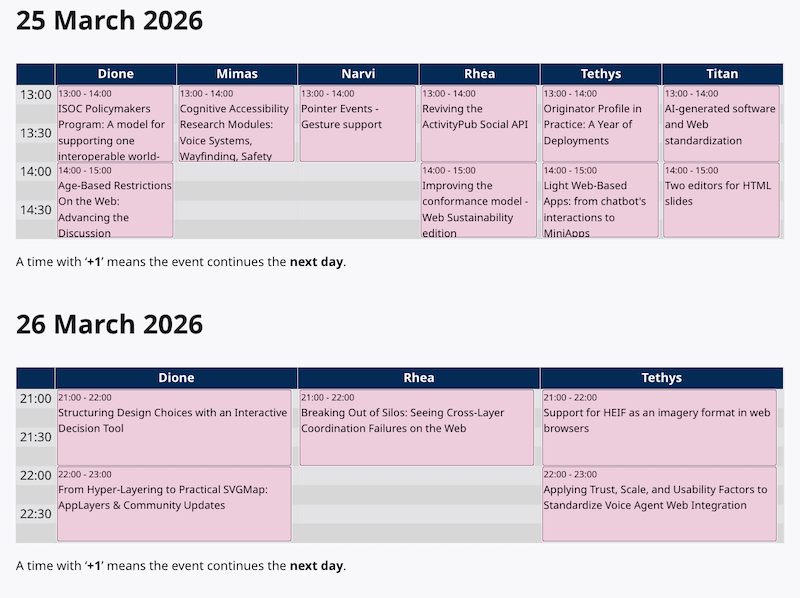

Catching up with some of the news coming out of the Atmosphere conference.

"With Attie, anyone will be able to build their own custom feed just by typing in commands in natural language, the same as if they’re chatting with any other AI chatbot."

I'm guessing NFT profile pictures are next?

https://techcrunch.com/2026/03/28/bluesky-leans-into-ai-with-attie-an-app-for-building-custom-feeds/

#news #technology #TechNews #atmosphere #ATProto #bluesky #AI #LLMs

"Setting aside the moral arguments---"

You mean the power and water.

"Setting aside the power and water, and---"

Don't forget the industrial-scale plagiarism. The brazen theft.

"Setting aside the copyright fuckery, the power and water, and---"

Don't forget the maniacal, suicidal inflation of the bubble. Arguably the greatest single mis-allocation of resources in history, aside from war.

"Setting aside the financial madness, the copyright fuckery, the power and water, and---"

Don't forget the willful destruction of creative livelihoods, the willful destruction of education itself.

"Setting aside the destruction of art, writing, and schools, the financial madness, the copyright fuckery, the power and water, and---"

Don't forget the purposeful degradation of human cognitive capacity. The planned and designed addictive dependency.

"Setting aside the cognitive degradation, the destruction of schools, the financial madness, the copyright fuckery, the power and water, and---"

Don't forget the ghoulish ethical camouflage used to obscure, indeed to erase, the responsibility for decisions in budget austerity, insurance claims, regulatory oversight, medical decisions, court filings, and even real-time combat.

"Setting aside the monstrous mechanisms of official irresponsibility, the cognitive degradation, the schools, the financial madness, the copyright fuckery, the power and water---"

Are you going to say it doesn't work?

"IT DOES NOT FUCKING WORK"

📣 Why is the entire Fediverse not yet talking about the new documentary "Ghost In The Machine"?

> A feature documentary charting the untold origins of artificial intelligence. This is not a story about machines, it is a story about power.

Watch the trailer. Boost this post. Then go to their website for screening details.

#GhostInTheMachine #AI #ArtificialIntelligence #SurveillanceCapitalism #technoFascism

My mother's doctor now has a "telephone assistant powered by artificial intelligence."

It is what will answer when patients call the practice to make an appointment.

Most of this practice's patients are elderly.

What could go wrong? 🤔

RE: https://floss.social/@downey/116291403709370109

Now "playing" online; go watch it if you can. Just finished. The most important film you'll see all year, and maybe even longer.

Today in Labor History March 28, 1979: Three Mile Island (TMI) nuclear power plant, in Pennsylvania, had a level-5 partial meltdown, the worst nuclear power accident in U.S. history, and one of the worst in the world, prior to Chernobyl. TMI operators had not been adequately trained to handle the type of malfunction that led to the meltdown and, consequently, a delay in mitigation efforts. Clean-up ended in 1993, at a cost of $2 billion in today’s dollars. Officials concluded that the release of radioactive material from the plant did not raise exposure levels of nearby residents to a level that would increase cancer cases by even one additional case. However, anti-nuclear groups hired their own independent investigators who found that radiation levels in the area were significantly elevated. A peer-reviewed study by Dr. Steven Wing found a significant increase in cancers from 1979-1985 among people living within ten miles of TMI. And in 2009, Dr. Wing said that the amount of radiation released during the accident was likely "thousands of times greater" than the NRC's estimates.

In 2024, Bill Gates obtained exclusive rights to the “carbon-free” energy from TMI, once it reopens, to power his Artificial Intelligence farms, starting in 2028. Other Tech Barons are also looking to exploit nuclear power for their energy-hungry AI farms. Data centers currently account for about 1 to 1.5 percent of global electricity use. NVIDIA will be shipping out over 1.5 million AI server units per year by 2027. These servers alone would consume over 85.4 terawatt-hours of electricity annually, more than many small countries use in a year. Yet the U.S. still has no permanent radioactive waste storage facilities. As of 2023, the U.S. had roughly 88,000 metric tons of spent nuclear fuel from commercial reactors, and all of this is stranded at the reactor sites. Experts expect this number to grow by 2,000 metric tons each year.

https://www.cnn.com/2024/09/20/energy/three-mile-island-microsoft-ai/index.html

https://www.scientificamerican.com/article/the-ai-boom-could-use-a-shocking-amount-of-electricity/

https://www.scientificamerican.com/article/nuclear-waste-is-piling-up-does-the-u-s-have-a-plan/

#workingclass #LaborHistory #radioactive #nuclear #threemileisland #nuclearaccident #radiation #nuclearwaste #artificialintelligence #ai #billgates #chernobyl #cancere #publichealth
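The server figures in the post above imply a per-server power draw that can be checked with simple arithmetic. This is only a sketch: it assumes the 85.4 TWh is spread evenly over 1.5 million servers running around the clock for 8,760 hours a year, which are simplifications made here and not claims from the post.

```python
# Figures quoted in the post.
annual_energy_twh = 85.4
server_count = 1_500_000

# Assumption (not from the post): every server runs continuously all year.
HOURS_PER_YEAR = 8_760

# Energy per server per year, in MWh (1 TWh = 1,000,000 MWh).
per_server_mwh = round(annual_energy_twh * 1_000_000 / server_count, 1)

# Average continuous power per server, in kW (1 MWh = 1,000 kWh).
avg_kw = round(
    annual_energy_twh * 1_000_000 * 1_000 / server_count / HOURS_PER_YEAR, 1
)

print(f"{per_server_mwh} MWh per server per year, about {avg_kw} kW on average")
```

Under those assumptions each server draws several kilowatts continuously, so the quoted total is at least internally consistent.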

AI (LLM) news.

You probably know Claude, the ChatGPT alternative best known for its product "Claude Code", which, as the name suggests, is a "copilot" for writing and generating code.

There are 2 paid plans for individuals: one at about €21 and the other around €100.

Recently, users noticed a "bug": their tokens were burning much faster. Instead of being able to work with it for up to five hours (the length of a session, after which your tokens are replenished), sessions last 15 or 30 minutes. Instead of sending 20 prompts, the limit is reached in 5 prompts, and for some in a single prompt.

Well, it turns out this is not a bug. Anthropic engineers confirmed, on X, that they had quietly rolled out an update that reduces the number of tokens granted per session during busy hours (that is, working hours). The result: a few prompts are enough to leave you waiting 4 hours for new tokens. To get more, you have to move to the €100 plan, and even there the limit is reached quickly.

In secret. To users who pay up to €100 a month. 😐

Edit: a source that covers it https://www.techradar.com/ai-platforms-assistants/claude/claude-is-limiting-usage-more-aggressively-during-peak-hours-heres-what-changed

RE: https://dair-community.social/@emilymbender/116304096270747626

I cannot boost this talk enough. Please watch it, and then boost it yourself. And please, boost the original toot, not this one.

Edit: seems like someones (side look) didn't read the part about boosting the original toot, not this one :-P At worst, make a similar quote toot :)

My Feb 2026 Katz Lecture for UW's Simpson Center for the Humanities is now available on YouTube:

https://www.youtube.com/watch?v=T7Lc6QNxolQ

Unfortunately, the recording doesn't include the Q&A. Two things I remember from that:

>>

OpenAI's crawler just found our family server / cloud services and immediately proceeded to crash Nextcloud within minutes. Fucking fantastic.

Is there some nice, up-to-date write-up on the different tools to protect yourself against this?

#AI #AISlop #AttackOfTheMachines #selfHosting

« You must imagine Sam Altman holding a knife to Tim Berners-Lee's throat.

It's not a pleasant image. Sir Tim is, rightly, revered as the genial father of the World Wide Web. But, all the signs are pointing to the fact that we might be in endgame for "open" as we've known it on the Internet over the last few decades. »

"Meet Matt Brittin: ex-Googler and, if reports are to be believed, the BBC’s next director general. An executive search yielded an apparent shortlist of four women – who between them had decades of experience in broadcasting, news, and public service journalism – and one man, who didn’t"

#BBC #Google #Journalism #Entertainment #UKPolitics #AI

Will Google’s AI hype man kill the BBC?

https://www.thenerve.news/p/matt-brittin-google-bbc-director-general-ai-death-journalism-guardian-anna-bateson

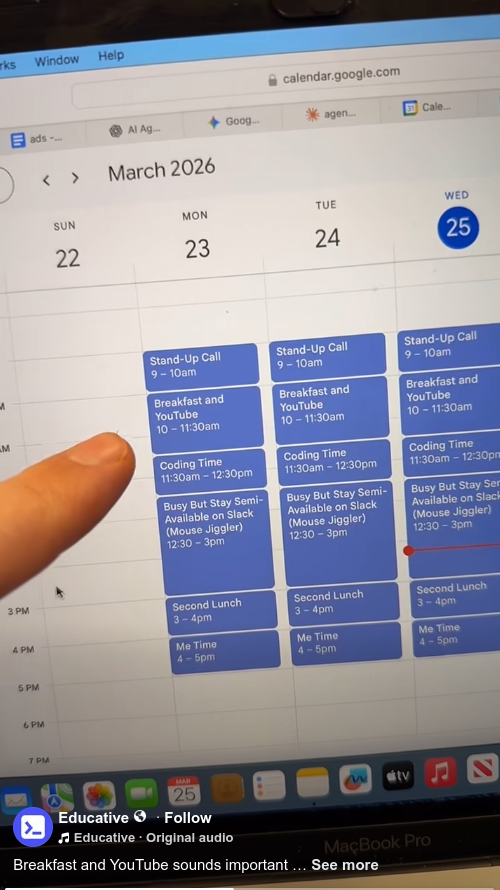

Seen on facebook: "we have this pretty stereotypical vibe coder on our team, and he apparently doesn't know we can all see each other's calendars."

LOL

The Guardian: Number of AI chatbots ignoring human instructions increasing, study says

Exclusive: Research finds sharp rise in models evading safeguards and destroying emails without permission

RE: https://mastodon.world/@PetterOfCats/116295614608524784

A simple but persuasive argument on the ubiquitous deployment of AI: it's a technology of responsibility avoidance.

🆕 blog! “Adding human.json to WordPress”

Every few years, someone reinvents FOAF. The idea behind Friend-Of-A-Friend is that you can say "I, Alice, know and trust Bob". Bob can say "I know and trust Alice. I also know and trust Carl." That social graph can be navigated to help understand trust relationships.

Sometimes this is done with complex cryptography and involves…

👀 Read more: https://shkspr.mobi/blog/2026/03/adding-human-json-to-wordpress/

⸻

#AI #humans #WordPress

And meanwhile, those idiots in the military, always first in line to do something shitty, are diving headfirst into AI techno-babble to automate their next mass killings.

<+mgorny> that's gunicorn

<+mgorny> looks like vibecoding hard

<@sam_> sigh

<@sam_> https://github.com/benoitc/gunicorn/pull/3559

<@sam_> i agree it looks like it

<+mgorny> how else would a dead-so-far project suddenly make dozen commits in a day?

<@sam_> I really wish they'd leave projects "dead"

<@sam_> it's far more honest

Revealing piece on the scale and scope of AI-induced psychosis:

"There seem to be three common delusions [..]. The most frequent is the belief that they have created the first conscious AI. The second is a conviction that they have stumbled upon a major breakthrough in their field of work or interest and are going to make millions. The third relates to spirituality and the belief that they are speaking directly to God. “We’ve seen full-blown cults getting created”"

https://www.theguardian.com/lifeandstyle/2026/mar/26/ai-chatbot-users-lives-wrecked-by-delusion

Some people may think of LLMs as the great equalizer. People who aren't programmers can vibecode working programs now. People who aren't artists can slop out something resembling art. However, it's the exact opposite.

When I was a kid, I also pretended to write programs. Of course, I didn't have such sophisticated toys ("kids could play with a stick for hours", as the hyperbole went). But then, I was fully aware that it's just make-believe and it didn't harm anybody.

#Vibecoding creates a horrible chasm of inequality. We have people who believe they're good programmers (even treating vibecoding as an enlightened religion) who shit tons of code at real human reviewers, who now need to sift through it. And then we have projects embracing vibecoding and shitting out new releases at an unprecedented rate. And these releases again need to be reviewed by humans downstream.

“A Dutch court has ordered X and its AI chatbot Grok to immediately stop generating non-consensual sexualized imagery and child pornographic material in the Netherlands, imposing a penalty of €100,000 per day on each defendant for non-compliance.”

Good.

https://www.techpolicy.press/dutch-court-orders-x-grok-to-stop-aigenerated-sexual-abuse-content/

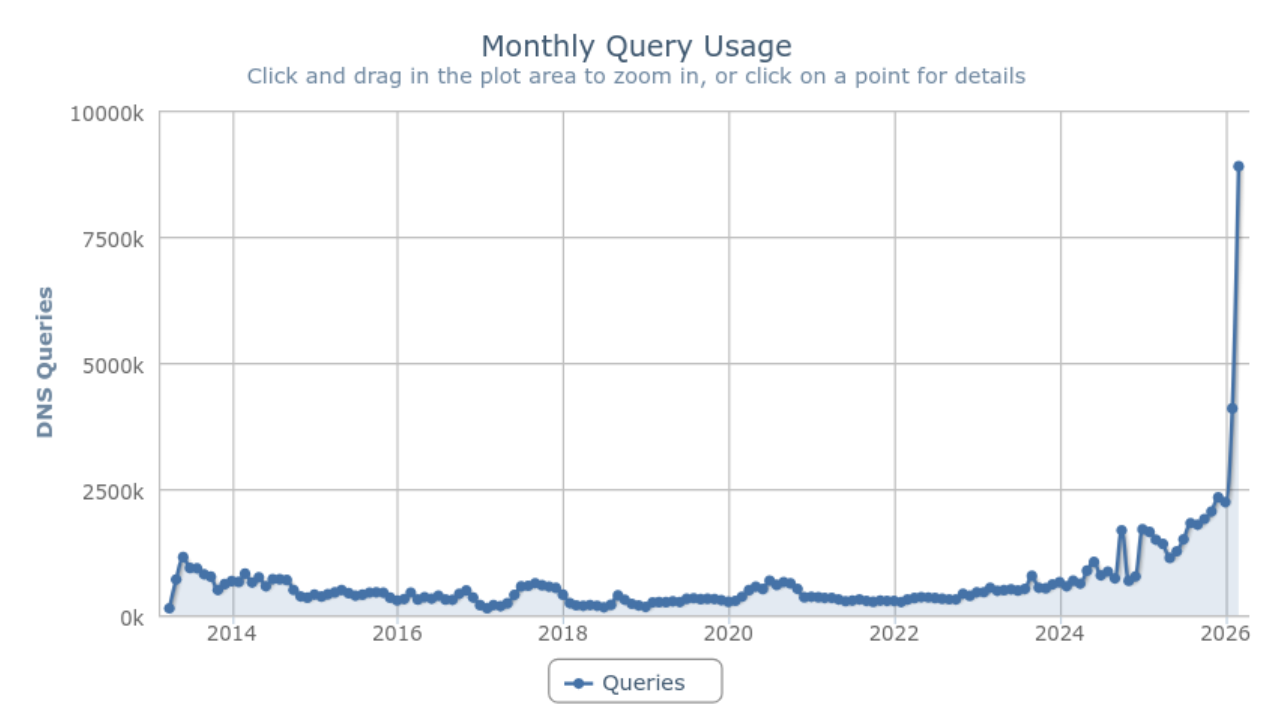

I just noticed this DNS graph. The web site I took over in 2019. It's the busiest web site I have. But I really think the huge upswing in #DNS traffic is related to #AI bot scrapers. I have been struggling so hard just to make them go away. My bandwidth, compute, and web service are not for them. They are not welcome. They do not care.

- January 2026: 2,237,399 queries

- February 2026: 4,093,488 queries

- March 2026: 8,893,458 queries (so far)

Literally doubling month on month.

Now, I recently made changes to the DNS for that zone. And I made some screw-ups when I did it. So, I temporarily¹ set the TTL on the NS records to 600 seconds. I kept screwing them up and needing to change them.

Fixing the NS records this morning definitely had a benefit. Yesterday was 325,336 queries. Today was 254,492. So again, some of this is on me. But that whole 13-year DNS graph with a huge surge in the last 2 years is not all me. Stuff has changed.

¹ I remembered this morning when I was like "WTF do I have so much DNS traffic!?"
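
A quick sanity check on those numbers, as a minimal Python sketch. The figures are the ones quoted in the post; the helper names `growth_ratios` and `percent_drop` are made up here for illustration and are not part of the original setup. March is a partial month, so its ratio is a lower bound.

```python
# Monthly DNS query totals quoted in the post (March is a partial month).
monthly_queries = {
    "January 2026": 2_237_399,
    "February 2026": 4_093_488,
    "March 2026 (so far)": 8_893_458,
}


def growth_ratios(counts):
    """Return each value divided by the value before it."""
    return [later / earlier for earlier, later in zip(counts, counts[1:])]


def percent_drop(before, after):
    """Return the percentage decrease going from `before` to `after`."""
    return (before - after) / before * 100


ratios = growth_ratios(list(monthly_queries.values()))

# Daily totals quoted for the day before and the day of the NS record fix.
daily_drop = percent_drop(325_336, 254_492)

months = list(monthly_queries)
for month, ratio in zip(months[1:], ratios):
    print(f"{month}: {ratio:.2f}x the previous month")
print(f"Daily queries after the NS fix: down {daily_drop:.1f}%")
```

By this arithmetic February is roughly 1.8 times January and March so far is roughly 2.2 times February, so "doubling" holds as an approximation, while the day of the NS fix came in about 22% below the day before.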

Carbon and water footprints of AI technology, Alex de Vries-Gao

Analysis of the environmental disclosures of big tech to infer the footprints of data centres

"Because the environmental impact of data centers is growing rapidly, the urgency for transparency in the tech sector is also increasing. The carbon footprint of AI systems alone could be between 32.6 and 79.7 million tCO2 emissions in 2025 [...] in the range of the carbon footprint of NYC"

https://www.cell.com/patterns/fulltext/S2666-3899(25)00278-8

The US and Israel are bombing Iran. How did we get to this point? And what stopped them from going to war in the past?

On #TechWontSaveUs, I spoke with Spencer Ackerman to learn more about how we got here, how Iran is fighting back, and the wider repercussions of this war.

Listen to the full episode: https://techwontsave.us/episode/321_the_long_history_of_the_us_war_on_iran_w_spencer_ackerman

New York City hospitals drop Palantir as controversial AI firm expands in UK | Technology | The Guardian

https://www.theguardian.com/technology/2026/mar/26/new-york-hospitals-palantir-ai

IMO the most likely cause of any kind of #AI bubble pop is Chinese models breaking through. And at least in the open inference markets, that appears to be happening already:

"Since February, Chinese AI models made by groups such as DeepSeek and MiniMax have overtaken US rivals in token consumption, according to OpenRouter data"

A true SOTA model from China could send markets into a tailspin just like the original DeepSeek release did.

https://www.ft.com/content/2567877b-9acc-4cf3-a9e5-5f46c1abd13e?syn-25a6b1a6=1

Tells you everything you need to know about le internet circa 2026. Digg tried to relaunch but quit after being overrun by bots and AI...which also overrun every other existing site and service. They're here too but much less visible and don't get algorithmic boosts.

https://www.fastcompany.com/91509667/digg-comeback-paused-after-bots-and-ai-overwhelm-site

I just got forwarded a description of my business. It was obviously compiled by #AI.

It got the most basic fact about my business wrong. I've been a freelance developer for over 20 years. I've only ever worked for myself.

It named someone else as the owner of my business... my one-person business.

This wasn't attached to a piece of spam. It was in a legally binding document.

"GitHub’s Copilot will use you as AI training data, but you can opt out"

"...if you’ve used the code completion in Visual Studio Code, asked Copilot a question on the GitHub website, or used another related AI feature, your interactions and code snippets could be harvested...."

https://www.howtogeek.com/githubs-copilot-will-use-you-as-ai-training-data-but-you-can-opt-out/

Using #Python for #AI but not involved in the community? You're leaving a lot on the table! Watch the PSF's Executive Director @baconandcoconut on #PyTV from @jetbrains to explore why community participation is at the core of Python's power. 🎤🐍 https://www.youtube.com/watch?v=DkN7P4Cmto8

RE: https://social.coop/@cwebber/116295745357971471

Not surprising.

Still very alarming.

#AI is turning today's youths into the people on the Axiom in WALL-E.

All so billionaires can get richer and fascists can cling to power.

I am not optimistic.

Adults lose skills to AI; Children never build them. https://www.psychologytoday.com/us/blog/the-algorithmic-mind/202603/adults-lose-skills-to-ai-children-never-build-them

Mathieu Comandon Explains His Use of AI in Lutris Development

> Over the past couple weeks, there’s been a lot of discussion around Lutris and its developer, Mathieu Comandon, following comments about using AI tools (specifically Claude) as part of the project’s development.

Am I turning into a cranky old man or is this some creepy ass shit?

" #MelaniaTrump walks side by side with humanoid #robot at #WhiteHouse summit

...pitched a vision of the future in which robots serve as educators who teach students about philosophy and art

...focused on #AI and #education, the first lady spoke to 45 female world leaders about the potential benefits of the #technology in the lives of young people, including through AI humanoid educators"

🤮🤮🤮

Wait I thought this stuff was supposed to be inevitable?

OpenAI shutters AI video generator Sora in abrupt announcement

https://www.theguardian.com/technology/2026/mar/24/openai-ai-video-sora

> Tech firm ‘says goodbye’ to Sora, made publicly available in 2024, just six months after its launch of a stand-alone app

Boost plz!

Looking for critical scholarship on the use of "AI" by library/archive workers. University libraries in particular, but adjacent and tangentially-relevant-at-best stuff is welcome too. Any format is fine: books, papers, blogposts, whatever. If it's good, gimme all you've got!

Looks like we're gonna have a department-wide conversation about people using LLMs, and it's being framed as "we're all using it, but we're not talking about it, so let's make sure we're all on the same page about using it responsibly" ... I'll of course be pushing the "there's basically no way to use it responsibly" position, and I'd like to arm myself and others with some critical analyses of issues related to its use in library/archive spaces.

Just got this from Microslop GitHub:

> We're updating how GitHub uses data to improve AI-powered coding tools. From April 24 onward, your interactions with GitHub Copilot—including inputs, outputs, code snippets, and associated context—may be used to train and enhance AI models unless you opt out.

I barely ever use GitHub. When I do, it's when I am forced to because some project I rely on only uses GitHub.

If your project is only using GitHub, please consider migrating.

One of the more remarkable features of Valerie Veatch’s Sundance film Ghost in the Machine is the fantastic diversity of voices detailing the eugenic and techno-fascist underpinnings of the AI industry.

It stands in stark contrast to the typical tech bros who wax poetic about the incoming age of AGI...

📣 Why is the entire Fediverse not yet talking about the new documentary "Ghost In The Machine"?

> A feature documentary charting the untold origins of artificial intelligence. This is not a story about machines, it is a story about power.

Watch the trailer. Boost this post. Then go to their website for screening details.

#GhostInTheMachine #AI #ArtificialIntelligence #SurveillanceCapitalism #technoFascism

🧑⚖️ Another Oregon attorney was sanctioned yesterday by a judge for using LLMs in their work.

👏 Accountability for so-called "professional" slop slingers is coming.

Disney Pulls Out of OpenAI Deal Amid Sora Shut-Down

https://nerdist.com/article/disney-pulls-out-openai-deal-sora-shutting-down/

#news #tech #technology #AI #openai #chatgpt #sora #aislop #disney

In which I try to look at #AI with a balanced frame of mind: https://changelog.complete.org/archives/42503-artificial-intelligence-shades-of-gray

I also discuss building an LLM email classifier using solar-powered local models, with a useful degree of success.

I'm neither a cheerleader nor a doomsayer. More a tinkerer.

I'm hearing that Red Hat is stopping hiring for any and all new SRE / engineering positions effective immediately, and replacing them with AI. These jobs are now agentic AI positions.

"We have begun our transition to a fully agentic SDLC ... We aren't giving specific metrics on this. It would cause panic."

"Customers have told us they don't need open source anymore. They use AI to build it themselves because we are too slow" my source tells me.

Headline: This Web Tool Sabotages #AI Chatbots By Making Them Really, Really Slow

Snippet: “Are you concerned that you or your loved ones might be participating in a massive de-skilling event? Experiencing LLM-induced psychosis? Outsourcing cognitive and emotional functions to autocomplete? Install SLOW #LLM on your computer, or the computer of a loved one, today!” reads a description on the tool’s website.

Source: https://www.404media.co/this-web-tool-sabotages-ai-chatbots-by-making-them-really-really-slow/

"While much of the economic value generated by AI remains concentrated in technological centres such as Silicon Valley, many of its environmental and social costs are in these territories."

https://restofworld.org/2026/ai-pushback-chile-mexico-kenya-philippines/

#news #technology #TechNews #AI #LLMs #DataCenters #DigitalColonialism #BigTech

Thoughts on OpenAI acquiring Astral and uv/ruff/ty https://lobste.rs/s/dhogio #ai #python #rust

https://simonwillison.net/2026/Mar/19/openai-acquiring-astral/

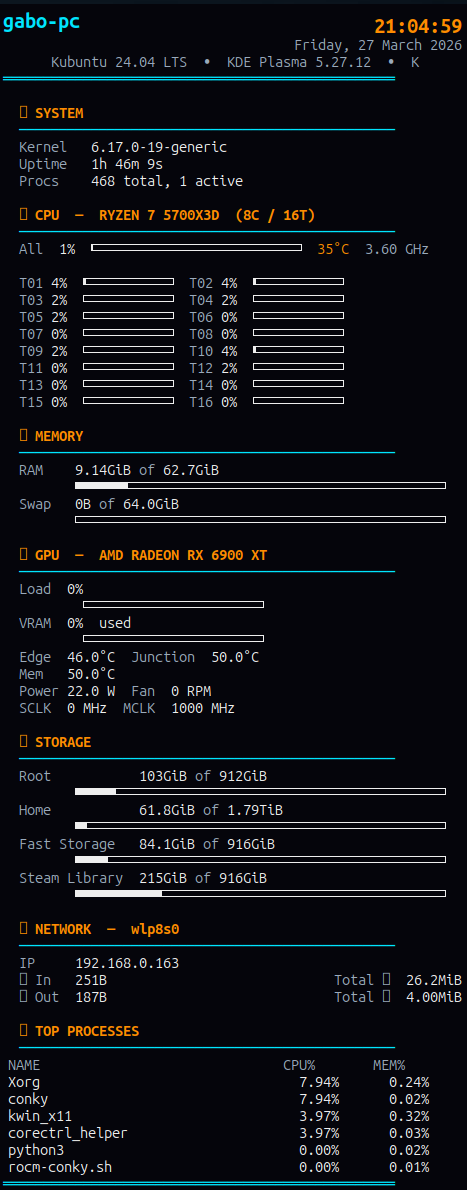

I can leave my heating off today: the AMD 9070 XT is taking care of that! 🔥

Today I finally set up my own AI locally on Linux with LM Studio. It's impressive to see how the hardware works under load, but the independence from the cloud is worth it. 🐧💻

Are you already self-hosting your LLMs too, or are you still using cloud providers? Which local model is your favorite? 👇

#SelfHosted #Linux #AI #AMD #Radeon #Privacy #OpenSource #LMStudio #LocalAI #KI

AI adoption in DevOps is accelerating, but trust, accuracy, and real-world usability still matter.

Jason St-Cyr sits down with Jessica Gao, Product Manager at Puppet, to unpack how AI is actually being used in infrastructure and operations teams today, and what’s changed over the last 12–18 months. Hear about where AI code assist can actually be useful in your flow and what to watch for next as the industry matures.

Oh wow, and this might get worse.

"The user never sees what your team built, they see what Google's machine learning model thinks they should see instead."

https://www.forbes.com/sites/joetoscano1/2026/03/06/google-just-patented-the-end-of-your-website/

via https://mastodon.social/@SteveRudolfi/116279083767770070

#news #TechNews #technology #google #search #AI #LLMs #enshittification

“This Company Is Secretly Turning Your #Zoom Meetings into #AI Podcasts”

https://www.404media.co/this-company-is-secretly-turning-your-zoom-calls-into-ai-podcasts/

Okay, hold on to your underpants: it is possible, we're still awaiting confirmation, it is possible that corroborating accounts tend to suggest the hypothesis that maybe, possibly, subject to verification, the Senate will have been good for something.

Once.

AI generators soon to be presumed guilty of having used your works without authorization, a victory for the Senate

⤵️

https://www.clubic.com/actualite-605728-les-generateurs-d-ia-bientot-presumes-coupables-d-avoir-utilise-vos-oeuvres-sans-autorisation-une-victoire-du-senat.html

#ia #ai

#NightmareOnLLMstreet

#Vol

#DroitDauteur

#Pillage

#Capitalisme

> “The same technology can be presented internally as a productivity gain, be interpreted by the client as a loss of value, and be analyzed by the insurer as an increase in risk.”

https://danslesalgorithmes.net/stream/le-cout-cache-de-lia-cest-la-verification/ #ia #ai

Whether you are concerned or not about the harm caused by the technologies pushed on us with the current AI Hype, I highly recommend watching this excellent interview by 404 Media's @samleecole with @alex and @emilymbender from the DAIR Institute: https://www.youtube.com/watch?v=UwBZiuH-1QY

And I say "whether you are concerned or not" because this will affect you one way or another, whether you care about it or not. In fact, it very likely already does.

I've published a new blog post: "Human Creations", on the difference in content generation by LLMs, and the creation of text, art and code by humans.

You can find it at https://derickrethans.nl/human-creations.html or at @blog

When I told you AI was pure Heresy... www.youtube.com/watch?v=zMcAT7Swbgo Now we have Yellow Space Marines...

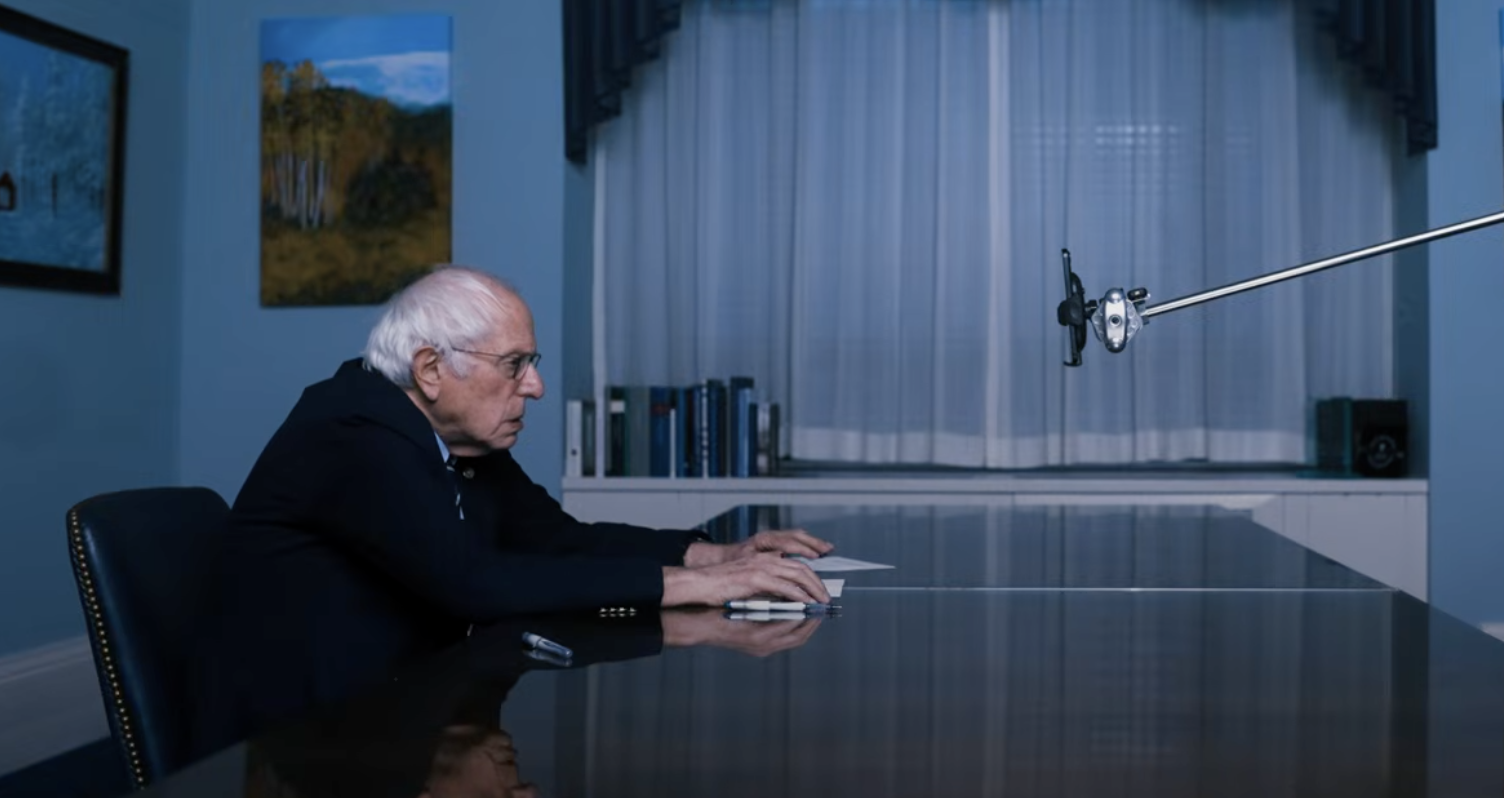

#BernieSanders Interview With #Claude Revealed Intelligence Is Abundant. Power Is Concentrated.

Thousands of #CEO just admitted #AI had no impact on #employment or #productivity, and it has economists resurrecting a paradox from 40 years ago

Newfangled computers were actually at times producing too much information, generating agonizingly detailed reports and printing them on reams of paper. What had promised to be a boom to workplace productivity was for several years a bust. This unexpected outcome became known as Solow’s productivity paradox.

https://fortune.com/2026/02/17/ai-productivity-paradox-ceo-study-robert-solow-information-technology-age/

https://archive.ph/tAUFb

Or maybe it's just been crap since day one?

#ia #ai

#nightmareOnLLMstreet

#Tech

#Capitalisme

Via > 🔗 https://mastodon.social/users/camilleroux/statuses/116277746160824931

-