Search results for tag #ai

I didn't use #AI this morning to help me solve a CSS issue I had. I didn't even use a search engine for it... well, I did try a search, but quickly changed course because I realized that it wasn't so much the answer I was lacking. I was having difficulty with the question.

In the end it was actually faster to slow down. I'm already quite proficient in #CSS, so I only needed to get through 4 pages of the Flex Layouts chapter before I realized what I was overlooking.

#AWS launches a new #AI agent platform specifically for #healthcare

https://techcrunch.com/2026/03/05/aws-amazon-connect-health-ai-agent-platform-health-care-providers/

#Meta sued over #AI #SmartGlasses’ #privacy concerns, after workers reviewed nudity, sex, and other footage

Bill Gosper at the console of his Symbolics XL400 during VCF 6.0 in 2003 at the Computer History Museum, demonstrating a color “Gosper curve”, with a color flowsnake print on the wall.

The XL400 is now on display and demonstrated at https://icm.museum

Bill at the AI Lab's PDP-6, running his implementation of Conway’s Game of Life.

#vcf #vintagecomputing #lisp #ai #hacker #graphics #life #fractal #art

@elilla except Duolingo does still work, does help people learn, and is better than nothing, and the gamification is easier on a lot of people than regular learning styles.

I hate this system-bashing where people claim something is useless when it is in fact merely imperfect.

Same as the "Google Translate is worthless" arguments. It's not. It's quite handy, in fact, despite not being awesome.

#rant #translation #languages #learning #Duolingo #AI

If you try not to use AI, what is your reasoning?

| Reason | Result |
|---|---|
| It doesn't make products better | 45 |
| It is bad for the environment | 44 |
| It hurts people (steals their work or jobs) | 43 |
| I do not want to enrich the tech bros | 45 |

Good news: the Govt. has decided to pause its potential relaxation of copyright laws to facilitate the theft & scraping of 'protected' content by AI/LLM 'research'.

Less good: after a consultation which largely demonstrated significant support for leaving copyright as is, Labour is still thinking about how it can accommodate Big Tech's demand to be allowed to steal information with impunity.

So copyright remains in play, leaving uncertainty for anyone depending on its rules.

#copyright #AI

h/t FT

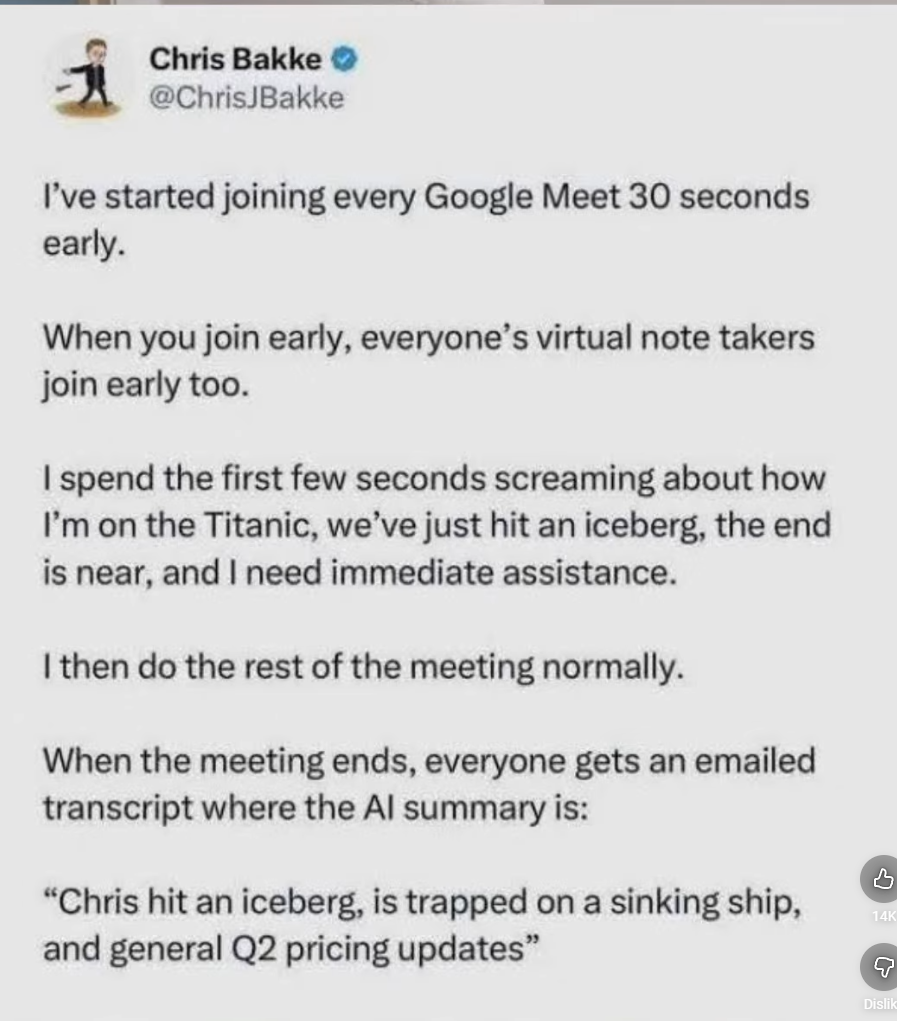

I don't know what to think about this. Is it pure genius or pure HERESY?

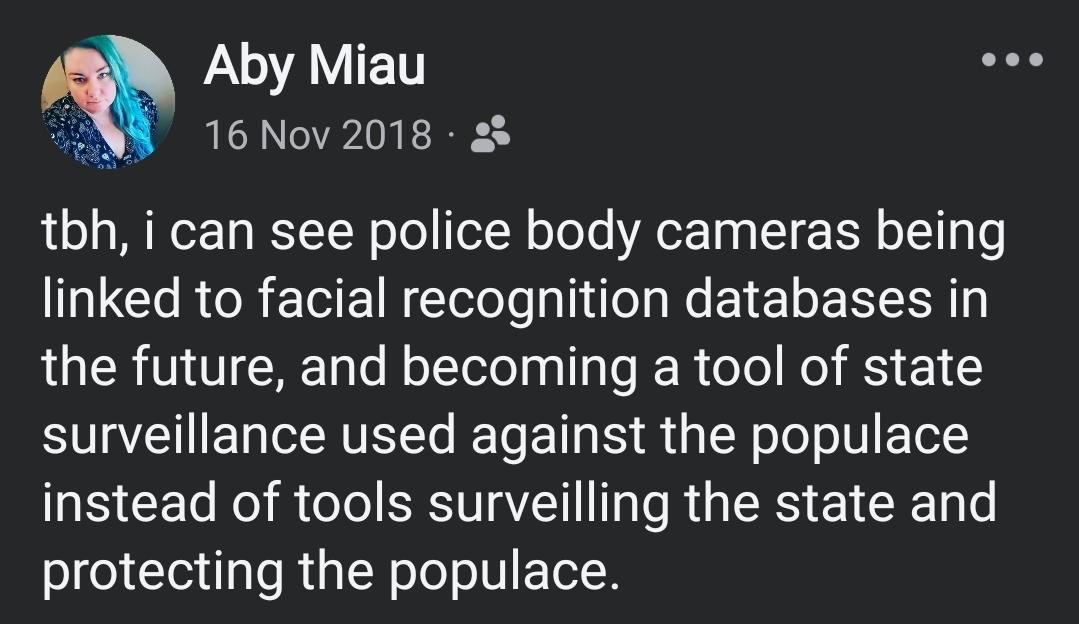

look im gonna need some of you all to start listening to me

_____

DHS BUILT A FACE SCANNING APP. YOU MIGHT ALREADY BE IN IT.

They came to his workplace armed with guns, gas canisters and artificial intelligence. He fought back with his quick wit and street smarts.

What happened next is a preview of what routine face scans could look like on American streets, in this special France 24–Mother Jones report.

Abdikafi Abdurahman Abdullahi, known as Kafi, is one of the few people willing to speak publicly about being subjected to the Department of Homeland Security’s new facial recognition tool, Mobile Fortify.

The Somali-American engineer-turned-Uber driver was waiting for a fare in an airport rideshare lot on January 7th, just hours after Renee Good was shot and killed by federal agents. As he watched a video of her death on his phone, there was a knock on his car door. Outside stood roughly a dozen ICE agents, demanding proof of his citizenship.

Kafi, who is Black and Muslim, refused to show his ID, arguing he was being racially profiled. Instead, he began filming, and his unflappable, mischievous comebacks transformed his video into a viral sensation.

The Department of Homeland Security officially acknowledged the existence of Mobile Fortify in January. But by then it had already been used over 100,000 times in American communities, according to recent court filings.

“This is taking a big and very scary step toward a kind of totalitarian checkpoint society that we have always professed to abhor here in the United States,” warned ACLU attorney Nate Wessler.

https://youtu.be/fDsYzd4ITq0?si=MuhIisCXI0gwRbsF

#tech #technology #surveillance #surveillanceTech #AI #chatgpt #LLMs #policing #HumanRights #RightToPrivacy

「 If “AI-rewriting” is accepted as a valid way to change licenses, it represents the end of Copyleft. Any developer could take a GPL-licensed project, feed it into an LLM with the prompt “Rewrite this in a different style,” and release it under MIT 」

#vibecoding #ai #opensource #copyleft

https://tuananh.net/2026/03/05/relicensing-with-ai-assisted-rewrite/

The FUNNIESTEST (not) thing about THIS bubble is how every single techbro-friendly moron is going "hurrr... it's NOT a 'bubble' if we can *see* it coming, HA HA GOTCHA", as the bubble builds and everyone thinks it's not a bubble because they read what those idiots are saying.... and then they keep pouring money in and giving the LLM bullshit attention, time, platform... and money. Did I mention money?

Buckle in, hold on to your butts, this is gonna go pop, soon.

@nixCraft India, which is investing heavily in being the droning, scripted tech support for LLM chatbots, is dealing with critically low employment and even lower salaries.

They just had the largest workers' strike ever... anywhere. Modi (India's tRump) is tap dancing, HARD, to hide this, and he is failing... also hard.

The hype and the actual investment being poured into it aren't returning anything remotely close to what is hyped.

Jensen Huang says Nvidia is pulling back from OpenAI and #Anthropic, but his explanation raises more questions than it answers

#news #tech #technology #AI #openai #chatgpt #aislop #nvidia

Ha ha ha, this is both my kid's favorite joke *and* the best definition ever of genAI fans.

#ia #ai

#NightmareOnLLMstreet

#époustouflan

Via > 🔗 https://mastodon.social/users/giyomu/statuses/115475535478990481

I just thought back to this Twitter post from maybe 2018-2020, and actually, it's exactly how people react to ChatGPT and genAI in general.

RE: https://mastodon.social/@lapresselibre/116175761751163570

Are we really talking about the public image of the company that made computers expensive for everyone and multiplied the environmental cost of digital technology by 1000?!

All that to run a stochastic parrot no better than a coin toss…

"He would set the country on fire if it could make him king of the ashes"

(oh wait, that's exactly what they're doing!)

#AI #IA #OpenAI #Anthropic #Trump

Invest in your process maturity or you won't get the best value out of your AI initiatives. At least that's what the 2026 State of DevOps report seems to imply!

A lot of the focus was on AI, and the report particularly highlighted solid DevOps maturity across the SDLC as a prerequisite for seeing real ROI on AI adoption.

Join Robin, Nico, and Jason as they provide their opinions on what they saw in the report!

👉👉 https://www.puppet.com/resources/events/webinar/state-of-devops-2026

The article about the issue in this subthread is now published: https://sfconservancy.org/blog/2026/mar/04/scotus-deny-cert-dc-circuit-thaler-appeal-llm-ai/

To @maxine's larger point in the OP, #SFC is putting serious work in the next few months into issues related to #LLM-backed #AI agents and their impact and implications for #FOSS. Nothing beyond that to report at this very moment, as it's a work in progress, but please watch #SoftwareFreedom Conservancy's website for announcements in coming months.

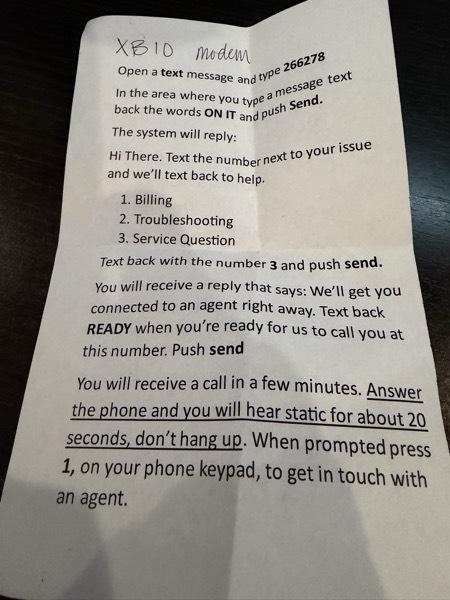

1.5 Million Users Leave ChatGPT. If You Cancel, Make Sure You Do This First 👇

Instructions on how to export and migrate your data.

#OpenAI #ChatGPT #AI #enshittification #privacy #surveillance #DeleteChatGPT #Pentagon

Edit: retracted https://pouet.chapril.org/@dallo/116173459545147240

What enshittification hellhole did this come from?

Windows 12 Reportedly Set for Release This Year as a Fully Modular, Subscription-Based, AI-Focused OS

https://tech4gamers.com/windows-12-reportedly-relasing-2026-modular-ai-focused-os/

> Reports suggest that Microsoft may be gearing up to launch Windows 12 this year, which will be a modular and AI-focused OS.

#microsoft #microslop #ai #llm #technology #enshittification #slop #windows #Linux

"A grassroots boycott called QuitGPT has been spreading across the US and beyond, asking people to cancel their ChatGPT subscriptions. More than a million people have answered the call."

"We're organizing Americans and people around the world to quit ChatGPT."

#QuitGPT #news #USNews #USPol #technology #TechNews #OpenAI #ChatGPT #AI #GenAI

"Politicians and even police have learned they can dismiss photos and videos as fake. (…) the pattern is now familiar: deny, invoke AI, move on."

Adam Rose’s opinion piece for Poynter on how the greatest danger posed by AI is the "collapse of trust in real evidence" – and what journalists could do about it:

👇

https://www.poynter.org/commentary/2026/the-real-threat-of-ai-is-the-collapse-of-trust-deepfakes/

Windows 12 could come out this year, in 2026. It would be subscription-based, with AI at the core of the system and, therefore, hardware requirements that exclude most current hardware.

#Linux! Your time has finally come!

https://tech4gamers.com/windows-12-reportedly-relasing-2026-modular-ai-focused-os/

(yeah, or not: https://www.frandroid.com/marques/microsoft/2999373_non-windows-12-ne-sortira-pas-en-2026-voici-ce-que-microsoft-a-prevu-cette-annee-pour-votre-pc)

Windows 12 reportedly set for release this year as a subscription-based, AI-focused OS

So there can be something worse than Windows 11...

https://tech4gamers.com/windows-12-reportedly-relasing-2026-modular-ai-focused-os/

#Microsoft #Microslop #Windows #Windows12 #Copilot #AI #enshittification #software #technology

We keep hearing that only 'AI', with its profound ability to grasp complex systems, could solve a problem as wicked, entangled and multi-dimensional as global warming.*

This report details not only why that has no basis in reality, but also how this fairytale is part of an industry campaign to make BigAI look like part of a habitable future, covering the tracks of genAI's massive footprint.

https://ketanjoshi.co/2026/02/17/big-tech-greenwashing-report/

* Turns out the trick is not spewing CO2 & CH4 into the air

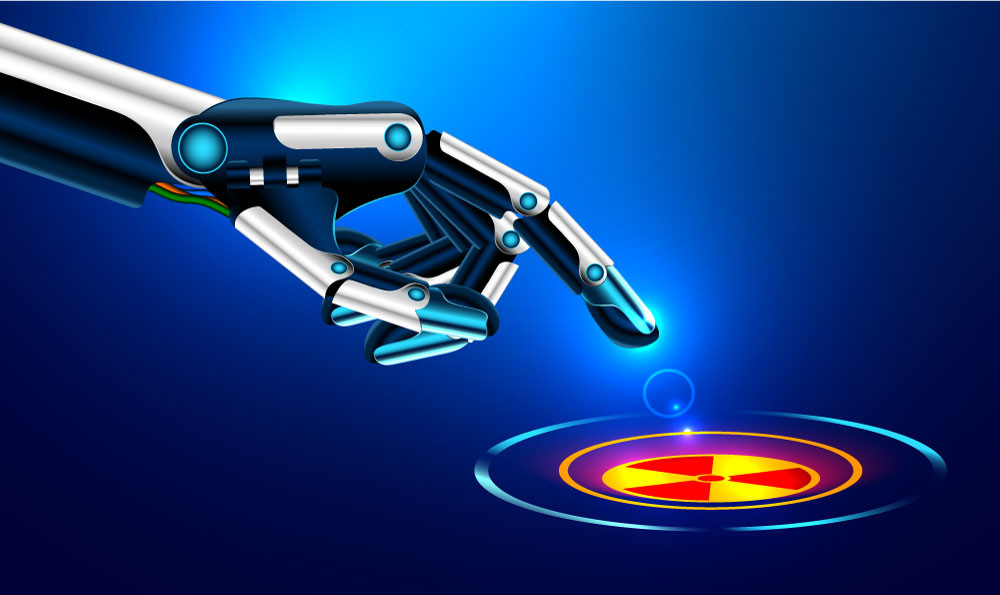

#AI / #LLM technologies are an existential threat to #humanity well before we even get anywhere near #AGI and the singularity.

Consider the sheer scale of deployment and integration into all the nooks and crannies of society, where we give it access to all our information and now, with the Rise of the Agents, let AI act on it too, giving full control away from us..

.. to the owners of this technology, the #BigTech usual suspects, and their billionaire class. Folks who are clearly out to dominate us, and keep us in check so they can continue their fancy lifestyles wallowing in decadence and moral depravity.

🤖 Unrestrained AI is the tech for 🧛 unrestrained elites.

@josephcox @404mediaco I spoke last and couldn't help but think about the big picture. The #Internet has consumed our world, as many predicted, but its hopeful promise has all but faded from the horizon.

"What we call #ArtificialIntelligence today, especially generative #AI and hybrid models...

@josephcox @404mediaco The event, called "Nothing to Hide? #Surveillance Tech and #AI's Risks & Rewards," was mostly dire warnings from the panel for a packed room of young students from Tufts, the US Naval Academy, Brazil, Cameroon, India, Kenya, Lebanon, Pakistan, Rwanda, South Africa and Uganda.

Hey! Good news everyone! The #SupremeCourt, by declining to overrule the #Copyright Office, has declared that #AI art cannot be copyrighted.

It's bigger than just Thaler's case though. (links below) What this means is that NO AI created work can be copyrighted. Not code, not verbiage, not art, not anything.

Only human created works are eligible for copyright, so sayeth the High Court.

Cory (@pluralistic) does a fantastic job of explaining the details and rounding up links. As he always does. Where he finds the hours in the day, I will never know. ;)

https://pluralistic.net/2026/03/03/its-a-trap-2/

Archive link to #ArtNet because something on the page was blowing up my browser, but they have more details on the case itself, and the "art" in question which just saved real artists: https://archive.md/bsyrQ

"Ex-Google PM Vibe Codes Palantir To Watch The Iran Strikes"

https://www.youtube.com/watch?v=0p8o7AeHDzg

See also: https://social.coop/@smallcircles/116158937812802335

Alright. Another #poll ..

Is this person's proud presentation of their creative work ..

#AI #LLM #ResponsibleTechnology #Ethics #TechnologyInnovation #Progress #Future #Society

| Option | Result |
|---|---|
| Responsible + ethical? Make us aware of the danger | 1 |
| Techbroist myopic? Tech means progress, right? | 2 |
| Something in between (please comment) | 0 |

Closed

Inspired by @jwz, I just set up the Firefox plugin https://addons.mozilla.org/en-US/firefox/addon/word-replacer-max/ to replace every instance of "AI" or "Artificial Intelligence" on LinkedIn (only) with "cocaine".

😙
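The post doesn't show the add-on's rule settings, so here is a hypothetical Python sketch of the same idea, not Word Replacer Max's actual code. It assumes whole-word matching, with the phrase matched case-insensitively but "AI" only in capitals, so that words like "said", "AIR" or "OpenAI" are left alone. The LinkedIn-only scoping is an add-on setting and is not modeled here.

```python
import re

# Hypothetical sketch, not the Word Replacer Max add-on's implementation.
# The phrase is matched case-insensitively via a scoped inline flag (?i:...),
# while "AI" must be uppercase; \b word boundaries skip "said", "AIR", "OpenAI".
AI_PATTERN = re.compile(r"\b(?:(?i:artificial intelligence)|AI)\b")


def replace_ai(text: str, replacement: str = "cocaine") -> str:
    """Replace whole-word mentions of 'AI' or 'Artificial Intelligence'."""
    return AI_PATTERN.sub(replacement, text)


if __name__ == "__main__":
    sample = "Our AI strategy: Artificial Intelligence everywhere, said the CEO."
    print(replace_ai(sample))
```

Running it prints `Our cocaine strategy: cocaine everywhere, said the CEO.` Keeping "AI" case-sensitive is a deliberate choice: a case-insensitive match would also hit the lowercase word "ai", which is common in French text.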

Can AI agents detect hidden backdoors in binaries? Experiments with code injected into C/Rust projects, followed by analysis of the resulting binaries by AI coding agents with access to reverse-engineering tools (Ghidra, Radare2, and binutils) #ReverseEngineering #AI https://quesma.com/blog/introducing-binaryaudit/

Read along with me if you like!

I'm working rn on a blog post about yesterday's decision by the Supreme Court of the USA (#SCOTUS) to deny certiorari in *Thaler v. Perlmutter*, a federal case in the DC Circuit about whether the author/prompter of an #AI system can claim #copyright on a work that system generates.

As prep, I'm rereading the DC Circuit ruling (which now stands):

https://media.cadc.uscourts.gov/opinions/docs/2025/03/23-5233.pdf

I'll post some “quick takes” in this thread.

Ars Technica Fires Reporter Over AI-Generated Quotes

https://www.thewrap.com/media-platforms/journalism/ars-technica-fires-ai-reporter-fabricated-quotes/

Behind the dispute between Trump and Anthropic, the stakes of the military use of AI

https://next.ink/227088/derriere-la-polemique-entre-trump-et-anthropic-les-enjeux-de-lusage-militaire-de-lia/

Anthropic's Claude was used by the US military in its attack on Iran. While the company's CEO has been opposing this for a few days, the moment reveals both the new threshold the AI industry has crossed in deploying its technologies and the US government's attempt to bring the sector under its control.

The lesson gets reinforced when you see that you keep getting away with BS. Many things no longer have to actually work; they just have to look as if they do.

The only exception being when there are physical (e.g., medical) or financial consequences that hit individual users, directly.

Accountability is key here. Being held financially accountable for the recommendations or decisions of the "#AI" you're selling would be a game changer.

Essential reading.

It's a bit long for me to say, as I often do, "you can learn this by heart", but it would amply deserve it.

https://www.terrestres.org/2025/06/28/chatgpt-cest-juste-un-outil/

@k3ym0 @ublockorigin these are people who didn't pay attention to the Cambridge Analytica situation or at least don't understand how dangerous metadata is.

Imagine what a bad actor could manipulate you into believing through prompt responses based on its deep knowledge of your past thinking.

The psychological manipulation possibilities are truly frightening.

#skynet #ai #cybersecurity

TechCrunch: ChatGPT uninstalls surged by 295% after DoD deal

https://techcrunch.com/2026/03/02/chatgpt-uninstalls-surged-by-295-after-dod-deal/

🏛️ BREAKING: Today, the US Supreme Court declined to rule that workslop created by AI systems can be copyrighted.

Question for the lawyers here: If a work (original or derived) is not copyrightable, is there any route for it to be releasable under a FOSS license?

#OpenSource #FreeSoftware #FOSS #FLOSS #AI #ArtificialIntelligence

Microsoft gets tired of “Microslop,” bans the word on its Discord, then locks the server after backlash

> Microsoft blocks the word Microslop on its Copilot Discord, bans users, and locks channels after backlash, showing tensions around its AI push

Ohio EPA weighs allowing data centers to dump wastewater into rivers

#news #tech #technology #AI #aislop #politics #ohio #datacenter #environment

MICROSLOP — Microsoft's AI Slop Manifesto

A manifesto documenting the systematic flooding of low-quality, synthesized, and unverified content

Samsung 870 EVO SATA III SSD 1TB 2.5” Internal Solid State Drive

Price on 2025-10-04: $94.99

Price on 2026-03-02: $179.99

🙄

#AI #RAMShortage #SSDShortage #Enshittification #LateStageCapitalism

With Revenue Share Shrinking, Does Nvidia Need Gaming Anymore?

In the last quarter, Nvidia revenue topped $68 billion, but just 5.5% of that came from gaming. That's still a nice chunk of change, but it's dwarfed by the company's AI data center business.

https://www.pcmag.com/news/with-revenue-share-shrinking-does-nvidia-need-gaming-anymore
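As a rough worked figure, taking revenue as exactly $68 billion (the article only says it "topped" that) and assuming the 5.5% share is exact, gaming comes to roughly $3.7 billion, so this is a lower-bound estimate:

$$
0.055 \times \$68\ \text{billion} = \$3.74\ \text{billion}
$$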

The claim "you won't be replaced by AI, but by a person using AI" is nonsense. The Block layoff victims were some of the most productive, #llm pilled people in the company, but it didn't save them, because that's not what layoffs are about.

The layoff script goes, as always:

- overhire

- lay everyone off

- pretend it's because of #AI productivity gains

- stock go up

There is no individual solution that will protect you from bad leadership and cost cutting.

Even more than the Gleam community, the AS/AP-based fediverse faces existential threats when it comes to the promise to lead us towards "the future of social networking", a peopleverse. And #AI poses serious risks that must be known, so we can anticipate and mitigate them in time.

But above all we have to find ways to constructively collaborate with each other in this chaotic grassroots environment we are part of.

#Collaboration and #cocreation at scale, organic growth and sustainable evolution are applied research areas of Social coding commons, where participants add value while working on their own solutions, following their self-interests in alignment with those of other people.

To folks who are interested in the general subject matter I addressed above, I recommend watching the talk given by Michiel Leenaars of @nlnet at #FOSDEM last month:

"FOSS in times of war, scarcity and (adversarial) AI" by @michiel

https://fosdem.org/2026/schedule/event/FE7ULY-foss-in-times-of-war-scarcity-and-ai/

Whatever we think of the #AI / #LLM mad hype cycle, we have to deal with its rushed and inhumane dumping of the technology into global human society.

#CALMculture is a strategic approach that allows activist voices to have the most impact in dealing with the dangers of disruptive technology introductions. It looks beyond berating people and demanding sacrifice ("don't use, or else..") to creating a process that helps win people over and work together toward the best outcomes and solutions.

#CALM stands for Constructive activism-led movements, such as Social coding commons. Coding is social, and #SocialCoding is the holistic approach to ensure that.

Social coding commons evolves Social experience design or #SX, solution development for grassroots movements, supported by the #SocialWeb.

In the thread below I copied a post to #Gleam's community with a suggestion to ponder the best outcomes from current and ongoing AI disruption, and how to deal with the risks.

https://discuss.coding.social/t/calm-culture-to-ensure-best-outcomes-to-ai-disruption/831

‚If you run a company whose entire value proposition is the ability to see patterns, predict outcomes, and connect dots that others miss, you’d think someone in the building might have flagged that suing a small independent magazine over unflattering-but-accurate reporting would only guarantee that millions more people read it.…‘

Palantir Sues Swiss Magazine For Accurately Reporting That The Swiss Government Didn’t Want Palantir 🙃

#Swiss #Palantir #Press #Tech #Technology #AI #Data #BigTech

#OpenAI is now officially data supplier for the Pentagon and is very proud of it!

Your Data flows directly to the U.S. Department of War! #ChatGPT

--> Delete your Account!

https://openai.com/index/our-agreement-with-the-department-of-war/

#US #Surveillance #KI #AI #Technology #Tech #War #Data #Privacy

@mako Oops!

Even while I commented on the Brave New World in which we live, I forgot the constant vigilance now required in that Brave New World.

Namely, I must ask you the sad question we have to always ask now:

Can you vouch that your video records an authentic response from G-E-H-munnye & has not been, say, modified by an #LLM-backed #AI from one of #Google's competitors?

Even w/ our friends we must “trust but verify” anything we see or hear to confirm it's not mutilated by AI slop.

Here's a good, subtle way to show resistance to #LLM-backed #AI slop that @mako found!

I encourage everyone — when referring to the #Google product — to pronounce “Gemini” as “Gee-eee-H-munnye”¹.

Big Tech thinks these systems should replace human workers, & it *is* rude to pronounce workers' names wrong!

¹ Fascinating that it put an ‘h’ in there? 🤔 LLMs are indeed very lossy compression systems.

Cc: @richardfontana as he'll generate the IPA notation from his head for us. Humans are better.

DevOps'ish 298 is out!

- Anthropic turned down a $200M Pentagon contract over AI safety guardrails (OpenAI took it)

- Taiwan: 90% of high-end chips, real blockade risk by 2027

- 5 Ingress-NGINX gotchas before Gateway API migration

- eBPF ring buffers > perf buffers

AI Chat... But Make It #Ads

> A satirical (but real!) demo of what #AI chat could look like in an ad-supported future. Chat with an AI while experiencing every monetization pattern imaginable — banners, interstitials, sponsored responses, freemium gates, and more.

I'm not sure a company really wants its internal matters to be analyzed and modified.

#Copilot: Microsoft's AI assistant is set to get direct access to documents | heise online https://www.heise.de/news/Copilot-Microsofts-KI-Assistent-soll-direkten-Zugriff-auf-Dokumente-erhalten-11193112.html #Microsoft #ArtificialIntelligence #AI #MicrosoftCopilot #Datenschutz #privacy

You've got nothing to hide, do you?

»We show that large language models can be used to perform at-scale #deanonymization. With full Internet access, our agent can re-identify Hacker News users and Anthropic Interviewer participants at high precision, given pseudonymous online profiles and conversations alone, matching what would take hours for a dedicated human investigator. We then design attacks for the closed-world setting. Given two databases of pseudonymous individuals, each containing unstructured text written by or about that individual, we implement a scalable attack pipeline«

I have to say I preferred the original The Muppet Show. This reboot is pants.

Books like this are rare, and even rarer in Spanish.

Brand new too. The cover was designed to look retro af.

Excellent as a gift for yourself or a friend.

「 Artificial Intelligence for the Z80: If you can build it in 8 bits, you really get it: Computer science in BASIC 」

https://www.amazon.es/Inteligencia-Artificial-para-Z80-construirlo/dp/B0GNZ9HSJ8

"According to CEO Dara Khosrowshahi, some of his underlings have created an AI clone of him so they can prepare for meetings with him, ensuring everything is fine-tuned for his wants and needs."

Is the next step getting rid of CEOs altogether?

https://futurism.com/artificial-intelligence/uber-employees-ai-ceo

#Firefox 148 is out, actually featuring the promised "#AI kill switch" - but only in the desktop version (so far?).

Thus, if you want to get rid of #AIslop, machine learning bullshit and #LLM idiocy on your #mobile device, you still have to proceed as described here:

https://mastodon.sdf.org/@jack/115485435193740088

(And yes, despite it all, Firefox is still the #browser you should use... *sigh*)

The #AI fever is having a huge impact on consumer #electronics.

Last February I purchased a 4TB Western Digital Black SN850X from Newegg to put in my desktop PC that I use for all kinds of work related tasks; video editing, virtual machines, etc. At that time it cost $280 USD.

Today, that exact same drive, also from Newegg and listed on the same item page (I just clicked the link from my receipt), is now $630. That's 2.25 times the original price (a 125% increase) in the space of one year.
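"2.25 times the price" and "a 125% increase" describe the same jump but are easy to blur. A small Python sketch (the function and dictionary names are illustrative) that computes both figures for the two SSD prices quoted in this feed:

```python
from __future__ import annotations


def price_change(old: float, new: float) -> tuple[float, float]:
    """Return (ratio of new to old price, percent increase)."""
    ratio = new / old
    return ratio, (ratio - 1) * 100


# USD prices as quoted in the two SSD posts in this feed.
DRIVES = {
    "WD Black SN850X 4TB (Newegg)": (280.00, 630.00),
    "Samsung 870 EVO 1TB": (94.99, 179.99),
}

if __name__ == "__main__":
    for name, (old, new) in DRIVES.items():
        ratio, pct = price_change(old, new)
        print(f"{name}: {ratio:.2f}x the old price ({pct:.0f}% increase)")
```

The WD drive works out to 2.25x (125%) and the Samsung drive to about 1.89x (roughly 89%).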

Ugh. "Anthropic Drops Flagship Safety Pledge."

https://time.com/7380854/exclusive-anthropic-drops-flagship-safety-pledge/

It's not yet clear what this means for the high-stakes negotiation between Anthropic and the Pentagon. Two of the Anthropic sticking points have been that Claude not be used for "mass surveillance or autonomous weapons systems that can use AI to kill people without human input."

https://www.theguardian.com/us-news/2026/feb/24/anthropic-claude-military-ai

#AI #Anthropic #Claude #Hegseth #LLMs #Pentagon #USPol #USPolitics

Update. #Anthropic just **rejected** #Pentagon demands to remove safeguards on #Claude that limit its use in mass surveillance and autonomous weapons. Here's the statement from CEO #DarioAmodei.

https://www.anthropic.com/news/statement-department-of-war

Update. "#SamAltman says #OpenAI shares #Anthropic's red lines in #Pentagon fight."

https://archive.is/5sTBa

Update. Employees of #Google and #OpenAi just released an open letter supporting #Anthropic.

https://notdivided.org/

"We hope our leaders will put aside their differences and stand together to continue to refuse the Department of War's current demands for permission to use our models for domestic mass surveillance and autonomously killing people without human oversight."

The letter welcomes new signatures from past and present employees of Google and OpenAI.

At the time of this post, it had 684 signatures.

⋅ LLMs killed the privacy star, we can't rewind, we've gone too far

− https://www.theregister.com/2026/02/26/llms_killed_privacy_star/

Copied from https://notdivided.org

-----

The #DepartmentOfWar is threatening to

1. Invoke the Defense Production Act to force Anthropic to serve their model to the military and "tailor its model to the military's needs"

2. Label the company a "supply chain risk"

All in retaliation for #Anthropic sticking to their red lines to not allow their models to be used for domestic #MassSurveilance and autonomously killing people without human oversight.

-----

Top comment on HN from https://news.ycombinator.com/item?id=47188473

> 1.5 hours after this was posted, Sam Altman stated #OpenAI will work with the #DoW.

I'm not anti-AI, really. Honestly, I often discuss science and technology with AI, and philosophy too. I even often discuss technical problems with it.

But I say no to having AI do the coding. It does more harm than good. Sure, it codes fast. But building a system also means finding mistakes and fixing them. Heaven forbid I have to read vibe-coded output and fix it. It would take far longer and be frustrating.

Your #SmartTV may be crawling the web for #AI

https://www.theverge.com/column/885244/smart-tv-web-crawler-ai

Trump orders US agencies to stop using Anthropic technology in clash over AI safety

https://apnews.com/article/anthropic-pentagon-ai-hegseth-dario-amodei-b72d1894bc842d9acf026df3867bee8a

Would be super funny if that's what causes the bubble to start popping.

Inb4 Duo responds and makes the statement:

"These Persona services are opt-in by the client as additional features. We do not integrate them into our core product."

to which the answer to that response is:

"Yeah, the CLIENT opts in, meaning the COMPANY opts in... the employees have no say. The *employees*, all of us, can only opt out by being fired."

Edit: Reading Duo's privacy statement, though, I'm not sure the client can actually opt out. It looks like it's fully integrated right now. Clients may opt to require employees to scan their faces... but it looks like IDV with Persona is fully integrated into the above-mentioned services. So yay.

@alien8 - Yeah, I figure any statement by Duo will absolutely push the whole "it's not enabled by default" line and that it's the client's decision to use it.

Which ignores the core point - the employees (once a client enables it) have no say. By even offering this, they put so many people in the position of "accept this or quit" which is not consent.

Especially in the US where one's job is tied to access to healthcare and where many live paycheck to paycheck and if they quit or get fired, they run the real risk of going hungry or losing their house.

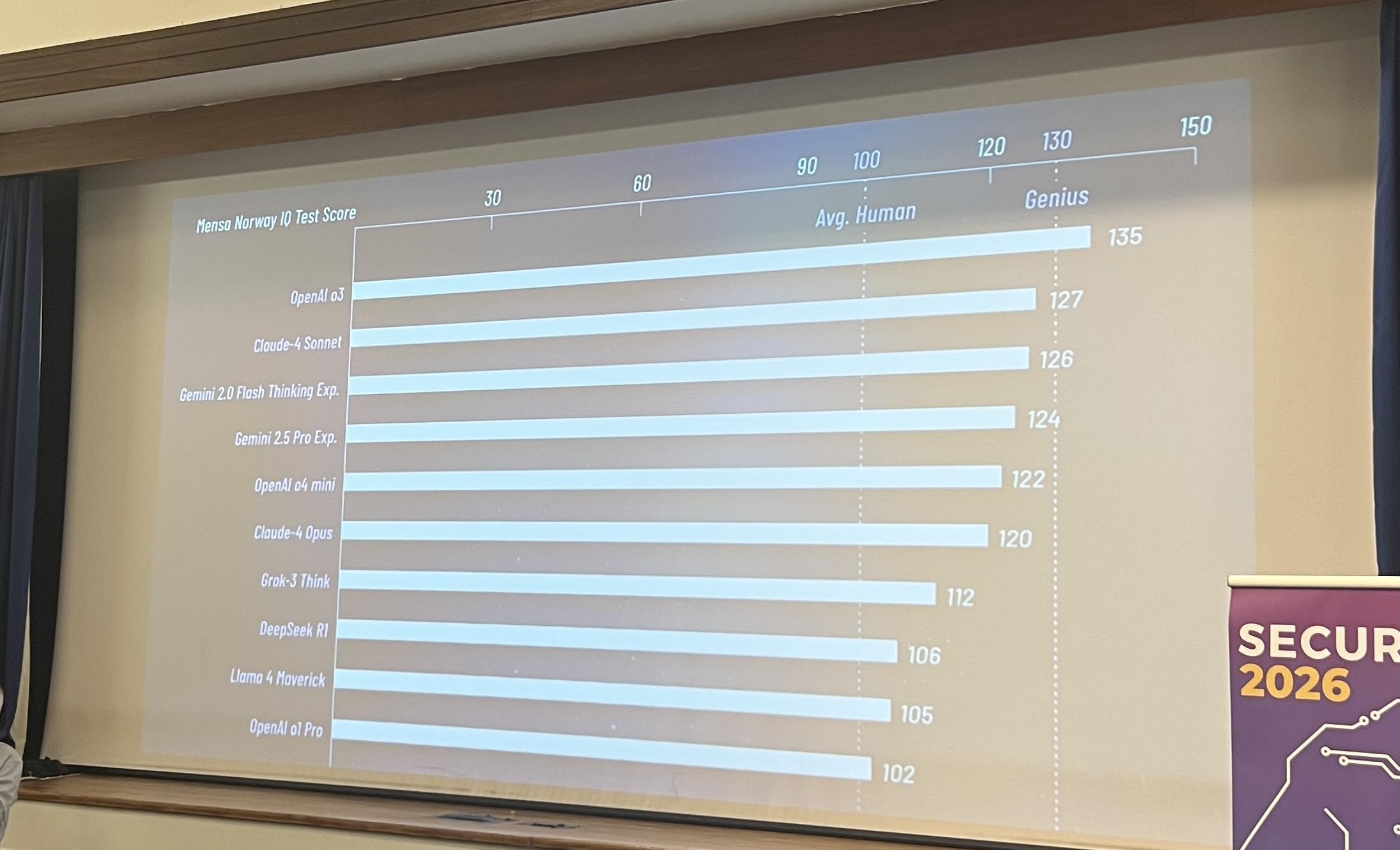

He puts up the racist, eugenicist notion of “IQ” on the board and doesn’t even mention the racist eugenicist origin of “IQ.”

Karma for vibe coders comes from vibe bug hunters and vibe PRs. Who wins? Us hackers do. It's raining shells!

So Duo (the multifactor authentication service that #infosec loves) has integrated with Persona (the privacy destroying, Peter Thiel backed, AI-linked, facial scanning and mapping "identity verification" software)

You know the recent Discord snafu that received such massive pushback and caused so many people to leave Discord that they've dropped their identity verification?

Yeah, that Persona.

Duo integrates it into Duo Premier, Duo Advantage, and even Duo Essentials...

...which means many working class folks will have no option but to be enrolled into and use Persona...

...or be fired.

You hadn't dreamed of it? Worse, you actually dreaded it? Then you can count on #Microsoft to implement it...

#Outlook will automatically launch #Copilot to open #HTML links. In a feature currently under development, Microsoft plans to automatically open its #IA assistant Copilot in the #Edge browser from an Outlook link. The vendor doesn't say whether this feature will be optional.

https://www.lemondeinformatique.fr/actualites/lire-outlook-lancera-automatiquement-copilot-pour-ouvrir-les-liens-html-99487.html

news is making the rounds that Defense Secretary Pete Hegseth wants to use the AI software produced by Anthropic for mass surveillance and AI-controlled weapons

allegedly, Anthropic isn't willing (yet) to comply

Hegseth is threatening to isolate the company from further gov. procurement

i'm going to guess Anthropic will yield

then there will be competition over who gets to sell broken, non-deterministic software for lethal use to the US government

https://edition.cnn.com/2026/02/24/tech/hegseth-anthropic-ai-military-amodei

I'm trying to ask people outside my programming world how they use AI, and what they think of it. I was out with friends last night, and people started talking about how their phones affect their sleep.

One person said they end up staying up later than they like because they start asking Gemini questions, and it's interesting enough they keep going for a while.

I asked that person if they had any idea how AI actually works. They didn't, and were curious.

https://restofworld.org/2026/india-ai-data-license-fee/?utm_campaign=row-social&utm_source=bluesky&utm_medium=social&utm_content=1772132417

#AI

After #BigTech, countless #LLM galaxies formed. At the center of each sits a super-massive Black Box. Hidden inside lurks the mysterious #Singularity, which is nothing more than a concept, as we don't know what the heck is going on there. All our common sense breaks down once we cross the #CriticalThinking boundary. Coming close to the event horizon of any Black Box inevitably leads to #enshittification as a person is sucked into the void. An outside observer would see that person frozen in time, stagnant. As the #AI universe expands, continuous https://socialcooling.com will eventually lead to the Big ☠️ RIP of #Humanity, who invented the Laws of Online #Nature.

Over the past few months, I have been investigating the effects of the development of data centers around the world together with colleagues from Asia, Latin America and Europe. The first results of this work are already online, go take a look!

Up to you to decide whether arguing with shitty chatbots is the customer experience you want, as long as it's cheap.

This is not only a decision about what you're buying, or subscribing to, but also a decision about your own workplace.

Do you want products and services worth paying for, or just a simulation of an economy, where nothing seems to really work anymore, and everybody keeps pretending that bullshit everywhere was "the future of work"?

https://www.bbc.com/news/articles/cq570d12y9do

"#AI"

Memory shortage could cause the biggest dip in #smartphone shipments in over a decade - https://techcrunch.com/2026/02/26/memory-shortage-could-cause-the-biggest-smartphone-shipments-dip-in-over-a-decade/ #ai bubble continues to spread joy everywhere...

Hot take: People are afraid of reacting or replying to AI critiques on LinkedIn for the same reason Trump's cabinet meetings are full of sycophantic praise for the president.

#Mozilla released #Firefox 148 featuring the #AI "Kill Switch" that has been making the rounds.

The design of the switch is meant to absolve Mozilla of any responsibility for your use of its AI.

It is a trap.

It is meant to switch discussion from whether Mozilla should be doing AI, to whether you can *choose* to not use it.

Don't believe their lies.

This is a long post, and if you click through, I recorded a video: https://www.quippd.com/writing/2026/02/09/firefoxs-ai-kill-switch-is-a-trap-how-mozilla-made-ai-your-problem.html

Hope you enjoy and please boost if you enjoyed it!

#AI has gotten good at finding bugs, not so good at swatting them

Is anyone else sick of living in a reality which is nothing but all the #dystopian #fiction from 50 years ago?

We have #JamesBond villains ( #Musk )

We have #InvasionoftheBodySnatchers zombification ( #MAGA )

Etc, etc.

And now we have #Wargames:

"AIs can’t stop recommending #nuclear strikes in #war game #simulations

Leading AIs from #OpenAI, #Anthropic and #Google opted to use #nuclearweapons in simulated war games in 95 per cent of cases"

🇪🇺 It could soon become easier for companies to train their #AI on your data – at least if the #EU Commission's #DigitalOmnibus proposal passes. 🤖

👤 featuring data protection lawyer and AI specialist Kleanthi Sardeli

Tragic: despite all the effort it has put into building autonomy and self-determination over the past few decades, the US Peace Corps is regressing into high-pressure, techno-colonialist American AI salespeople.

Anthropic ditches its core safety promise in the middle of an AI red line fight with the Pentagon

> Anthropic, a company founded by OpenAI exiles worried about the dangers of AI, is loosening its core safety principle in response to competition.

Why competition and capitalism suck in one line.

https://edition.cnn.com/2026/02/25/tech/anthropic-safety-policy-change

We need to be careful of scapegoating #AI when humans are responsible.

And we also need to hold those that poisoned the tree to account.

We should not penalize a minority for the actions of a group of people that do bad things.

https://knowprose.com/2026/02/ai-ethics-and-use-for-a-minority/

Police arrested a man for a burglary in a city he had never visited after face scanning software deployed across the UK confused him with another person of south Asian heritage.

#AI #FacialRecognition #Racism #Policing

Facial recognition error prompts police to arrest Asian man for burglary 100 miles away | Facial recognition | The Guardian

https://www.theguardian.com/technology/2026/feb/25/facial-recognition-error-prompts-police-to-arrest-asian-man-for-burglary-100-miles-away

RE: https://graz.social/@skuebeck/116137339954821171

Remember #blackmirror?

It seems that we're near https://en.wikipedia.org/wiki/Fifteen_Million_Merits already.

#AI #energy #harvestinghumans #megacorps #techbros #broligarchy

Thanks @calum for the contribution to the discussion.

If we agree that Claude is just a tool, like Black, isort, or Ruff, then we should also agree that tools shouldn’t list themselves as co-authors of commits.

We never had to make a rule about Black or isort doing that, because they never tried. If newer tools insist on adding themselves in “Co-authored-by”, an explicit rule may simply prevent that bad behavior.
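One way such an explicit rule could be enforced is a Git `commit-msg` hook, which Git runs with the path of the proposed commit message as its only argument and which aborts the commit when the hook exits non-zero. A minimal sketch follows. The blocked address in `TOOL_EMAILS` is an assumption (check what your tools actually write into their trailers), and `find_tool_coauthors` is a hypothetical helper. Matching on the email address rather than the display name avoids flagging a human contributor who happens to be called Claude.

```python
#!/usr/bin/env python3
"""Sketch of a commit-msg hook that rejects AI tools listed as co-authors."""
import re
import sys

# Assumption: addresses this project treats as tools rather than people.
TOOL_EMAILS = {"noreply@anthropic.com"}

# A trailer line such as "Co-authored-by: Name <email>" (case-insensitive).
TRAILER = re.compile(r"^\s*co-authored-by:\s*(?P<who>.+)$", re.IGNORECASE)
EMAIL = re.compile(r"<([^>]+)>")


def find_tool_coauthors(message, tool_emails=TOOL_EMAILS):
    """Return Co-authored-by values whose email address is on the tool list."""
    offenders = []
    for line in message.splitlines():
        trailer = TRAILER.match(line)
        if not trailer:
            continue
        who = trailer.group("who").strip()
        email = EMAIL.search(who)
        if email and email.group(1).lower() in tool_emails:
            offenders.append(who)
    return offenders


def main(path):
    # Git passes the path of the commit message file as the only argument.
    with open(path, encoding="utf-8") as f:
        offenders = find_tool_coauthors(f.read())
    for who in offenders:
        print(f"commit-msg: tools are not co-authors: {who}", file=sys.stderr)
    return 1 if offenders else 0  # a non-zero exit makes Git abort the commit


if __name__ == "__main__" and len(sys.argv) > 1:
    sys.exit(main(sys.argv[1]))
```

Saved as `.git/hooks/commit-msg` and made executable, a hook like this would only block such commits locally: hooks are not copied when a repository is cloned, so a project would still need the written rule (or a CI check) for it to apply to everyone.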

“It doesn’t take intelligence to see that leaving both customers and employees in the lurch isn’t smart.”

When You Know When Customer Service AI Is Failing

#Tech #AI

https://warnercrocker.com/2026/02/26/when-you-know-when-customer-service-ai-is-failing/

⋅ Burger King will use AI to check if employees say ‘please’ and ‘thank you’

− https://www.theverge.com/ai-artificial-intelligence/884911/burger-king-ai-assistant-patty

AI-powered reverse-engineering of Rosetta 2 for Linux

https://github.com/Inokinoki/attesor

#HackerNews #AI #ReverseEngineering #Rosetta2 #Linux #GitHub

Our appetite for AI fuels violence in the Congo

https://reporterre.net/Au-Congo-les-consequences-meurtrieres-de-l-imperialisme-minier-europeen

While critical of Trump's imperialist designs on Greenland, Europe is not far behind when it comes to its raw-materials partnership with Rwanda. Most of the tantalum that country exports is looted from the Democratic Republic of the Congo.

I posted on lobste.rs and reddit.com about my latest blog article. It's been a very long time (3 years) since the last time I did that.

Some comments are… weird… They try to summarize the content of the article, but it's all wrong. Checking the authors' profiles shows they have been active for many months. But really, really, it smells like an LLM. Maybe I'm “doubtful” because LLMs are everywhere today, but I don't know what to do with these comments…

Did you experience something similar recently?

I have experimented with different models over the years, looking for the biases, etc, and I think the framing here is right.

#ai hallucinates with people.

With only so many tokens in the context window, a model drifts, a kind of amnesia that builds up once older tokens are replaced. It is charming if you understand how it works. It can be very dangerous for those who rely on it.
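A toy sketch of the "amnesia" part, assuming the simplest possible policy: a fixed-size context window that silently drops the oldest tokens once it is full. Real systems split text into subword tokens and may summarize or otherwise manage history, so the hypothetical `visible_context` function and the window size below are illustrative assumptions, not how any particular model works.

```python
from collections import deque


def visible_context(tokens, window):
    """Return the tokens a model with a fixed context window can still 'see'.

    Assumes naive truncation: once the window is full, each new token
    pushes the oldest one out.
    """
    context = deque(maxlen=window)  # deque discards from the left when full
    for token in tokens:
        context.append(token)
    return list(context)


# Whole words stand in for tokens to keep the example readable.
conversation = ["my", "name", "is", "Ada", ".", "what", "is", "my", "name", "?"]
print(visible_context(conversation, 6))
# → ['.', 'what', 'is', 'my', 'name', '?']   ("Ada" has fallen out of the window)
```

The question is still in view but the answer has scrolled out, which is where a model may start filling the gap with a plausible-sounding guess.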

#aipsychosis #psychology #mentalhealth

https://nautil.us/why-youre-more-likely-to-develop-ai-psychosis-than-to-join-a-cult-1270352/

The ever-excellent Olivier Ertzscheid aka @affordance opens with a quote from Céline, but one could just as well have chosen Marx: "History repeats itself twice, first as tragedy, then as farce."

But the lesson to draw is also this: from the world of culture facing the Gafam 20 years ago to a moribund social democracy facing fascism today, bowing down never brings peace.

🤖🇪🇺 It could soon become easier for companies to train their #AI on your data – at least if the #EU Commission's #DigitalOmnibus proposal passes.

Watch now to find out more! 👉 https://www.youtube.com/shorts/V0HkgtiuT0U

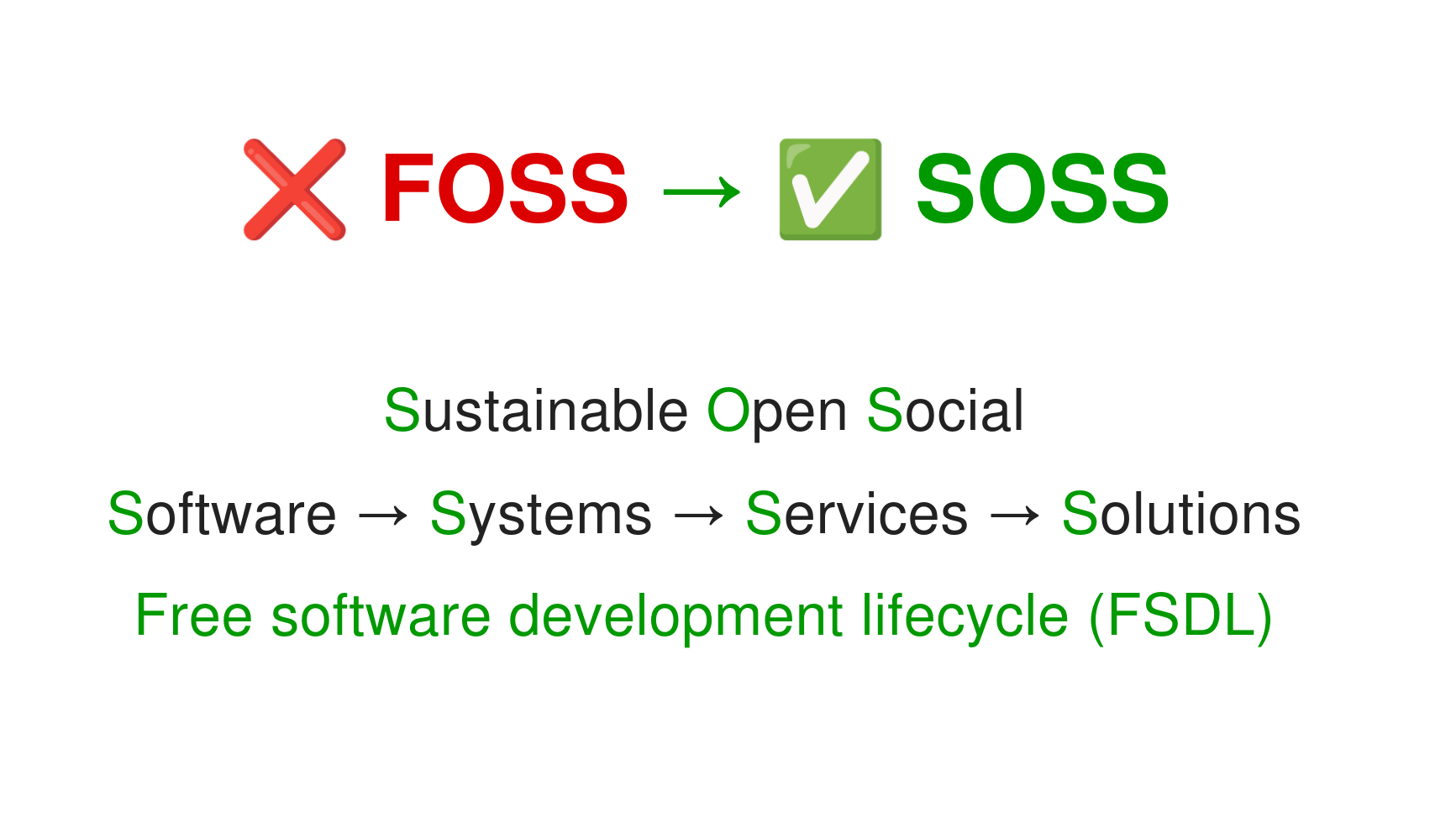

I posted on this notion of #SOSS before, with a meme attached.

https://social.coop/@smallcircles/115399465970544831

There's nothing wrong with #FOSS, but it only provides part of the solution as long as the #Sustainability angle is not given due attention.

Especially with disruptive #AI #LLM technologies being introduced in inhumane ways, major new threats to the #FreeSoftware community are surfacing, and paying attention to this subject is more important than ever.

Recently, I mused over what it would take, from my perspective, to significantly change my view that the tech industry's infatuation with non-intelligent "intelligence" is a net-negative for society.

https://pythonbynight.com/blog/what-does-it-take

Below are a few choice quotes from my post (a sort of TL;DR).

1/

AI is reshaping the global economy, but who owns the tech and what does it take to “produce” it? A new BIS bulletin breaks down the AI supply chain and shows how unevenly power is concentrated across leading firms. Today's Number Theory looks at its findings.

Read on HT app: https://www.hindustantimes.com/editors-pick/the-small-club-of-ai-giants-number-theory-101772075489669.html

#GenAI #AI #OpenAI #Microsoft #Google #Nvidia #Tech #Technology #DataViz

RE: https://hachyderm.io/@nedbat/116133445557306539

I get Ned's point, but I don’t think we can treat Claude (or similar tools) on the same level as a person.

We've never added tools (e.g. isort, Black, Ruff, ...) as co-authors of commits, even when they generated 100% of a commit.

Listing Claude as a co-author of a commit puts it on the same level as a person, but it's a tool.

The author of a commit is a person responsible for the code they submit, without shifting that responsibility to the tool, or worse, to the project maintainers.

The AI hype-cyclone is bad, but so is the anti-AI witch hunt. Commits co-authored by Claude do not mean that a project has "abandoned engineering as a serious endeavor".

Would we say that accepting contributions from new developers means we've "abandoned engineering as a serious endeavor"? No.

Claude can write wrong code. New contributors can write wrong code. What matters is what you do with that code after it's been written.

reckon this's pretty good

'Rejecting or resisting a commercial technology designed to attempt a mass wealth transfer and to erode public institutions is a valid political position. This rejection can manifest as a kneejerk “plagiarism machine sucks” tweet or a slogan on a poster board at a picket line or a policy paper. And just because someone is rejecting a technology and the broader project it is a part of, does not mean they do not understand it. Oftentimes that rejection is entirely informed, warranted, and rational'

#genAI #technology #ai #politicalEconomy #leftism

https://www.bloodinthemachine.com/p/actually-the-left-is-winning-the

Update. From a survey of university faculty in the US: "Males were twice as likely as females to use #AI to recommend journals to which to submit research articles."

https://www.primaryresearch.com/AddCart.aspx?ReportID=790

(Unfortunately the full results are not #OpenAccess and not even close. One copy of the PDF costs $98.)

Update. #AI / #LLMs "tend to recommend literature with greater citation counts, later publication date, and larger author teams. Yet, in scholar recommendation tasks, there is no evidence that LLMs disproportionately recommend male, white, or developed-country authors, contrasting with patterns of known human biases."

https://arxiv.org/abs/2501.00367

🤪 Anthropic Drops Flagship Safety Pledge

“We felt that it wouldn't actually help anyone for us to stop training AI models,” Anthropic’s chief science officer Jared Kaplan told TIME in an exclusive interview. “We didn't really feel, with the rapid advance of AI, that it made sense for us to make unilateral commitments … if competitors are blazing ahead.”

https://time.com/7380854/exclusive-anthropic-drops-flagship-safety-pledge/

That is entirely the wrong-headed (giggity) approach, IMHO.

A big part of the man(person)-machine interface is control and responsibility remaining in human hands.

Not so long ago, the few of us geeks who foresaw where machine brains would take us campaigned in #stopkillerrobots.

A campaign to keep human decision making in the military #killchain.

A campaign that failed spectacularly, in no small part, I am sure, due to uninformed Doctorow analogues dismissing it as unnecessary, farcical puppetry.

Even now, I actively strive to #regulateAI IRL, and human decision making is essential and imperative in AI.

The "reverse centaur" is a canard, in the same way that the driver of a motorcar is not pulling the cargo with their own muscles.

AI is not going away, for the same reason we don't see "picks and shovels" (!) digging infrastructure trenches anymore. Machines have been eating jobs since the 1700s, and it's only scary now because white-collar workers are on the chopping block.

I have huge respect for @pluralistic and his role, which he fulfills admirably, as an activist, what we in Australia call a shit-stirrer. His opinions stimulate debate, but keeping an expert in the decision chain, even if only as a tick box, is a good thing.

Call it a "moral crumple zone" if you will.

Removing it altogether is bad, and I am disturbed that anyone would try to make hay of this.

The alternative is full automation and I am sure all the #AI "fans" would agree it's a bad thing.

#AI can’t stop recommending nuclear strikes in #wargame simulations

Leading AIs from #OpenAI, #Anthropic and #Google opted to use #nuclearweapons in simulated war games in 95% of cases

The scenarios involved intense international standoffs, including border disputes, competition for scarce resources and existential threats to regime survival.

https://www.newscientist.com/article/2516885-ais-cant-stop-recommending-nuclear-strikes-in-war-game-simulations/

What could go wrong?

For those worried about the stories about #AI and #NuclearWeapons:

The real danger with #nuclear weapons is humans

We built them, we built AI

We have constructed elaborate “strategies” detailing that the only way to protect humans from nuclear weapons is for multiple countries to have a bunch of nuclear weapons

We are the danger, always have been

Just like 2008 had "subprime mortgages" (and 2000 the "Dot Com" bubble) that nobody understood, 2026 has "Circular AI Revenue" deals. Ex: Microsoft gives money to OpenAI, who gives it back to Microsoft for Azure credits, which Microsoft then reports as "AI Growth".

Microsoft and Nvidia fund AI startups that then buy their funders' tech, so the funders can report "growth". Meanwhile, $600B is burning in data centers in 2026 alone, with no real revenue to back it up.

If that loop snaps, the "fuck up" will be massive.

Why Rails became, by accident, the perfect framework for AI - Michael Bensoussan

https://michaelbensoussan.com/posts/rails-llm-friendly/

#rails #ai #claude-code #artisanat

Hey #SFBA, your neighbors in Gilroy are mounting an opposition to a new #Amazon hyperscaler data center that they are proposing to build there.

If you have a moment, sign their change dot org petition here: https://www.change.org/p/banning-regulating-data-centers-in-gilroy

You can read more about this here: https://gilroydispatch.com/residents-raise-concerns-over-new-data-center-in-gilroy/

You can also follow the organizers’ Instagram page here: https://www.instagram.com/stopgilroydatacenternow/

Sadly, the data center construction was approved by the city last year. This campaign is a hail-mary by the organizers to stop yet another #AI datacenter from being built.

Admit it, we all judge people.

I don't judge people on their skin color, the way they speak, dress, eat or pray, or even their taste in books or music.

Political opinions, though, those I judge. Very, very harshly.

And regular use of AI is an expression of support for a political project.

https://www.courrierinternational.com/stories/intelligence-artificielle-ils-meprisent-ceux-qui-utilisent-chatgpt_240669

#ia #ai

#NightmareOnLLMStreet

#NoAi

#Capitalisme

RE: https://mastodon.online/@pernillet/116129853986444219

- or rather, the European startup system is also suffering from over-valuation brought on by mentioning the letters AI, sucking up funding for projects that probably won't work & even more probably won't ever deliver any value & even more probably still will never turn a profit & even most probably will cause significant harm both during their run & when they fail.

Money that could otherwise have been put towards non-idiotic activities.

Potato, tomato.

RE: https://mamot.fr/@Khrys/116127545545526429

And at the same time, Goldman Sachs is creating an index of zero-AI stocks

https://www.axios.com/2026/02/20/ai-goldman-sachs-stocks-index

Weak signals are starting to become strong ones: capitalism in panic mode.

#ia #ai

#NightmareOnLLMStreet

#Capitalisme

When an AI doomsday scenario makes Wall Street tremble

A speculative essay published on Sunday on a relatively obscure economics and finance blog went so viral that it contributed to several stock plunges. The cause: the doomsday scenario it lays out, predicting a free fall of the real economy driven by AI's performance.

How to turn off #AI features in #Firefox, or choose the ones you want

💸 Hetzner: 50% price hike, no fooling, from April 1st

https://www.theregister.com/2026/02/24/ai_isnt_done_yet_memoryrelated/

FastCompany: ‘This should terrify you’: Meta Superintelligence safety director lost control of her AI agent—it deleted her emails

OpenClaw nearly wiped out the AI alignment employee’s entire inbox, in an incident that social media is calling ironic.

Chinese censorship in Confer, the encrypted, privacy-preserving AI assistant by Moxie Marlinspike, creator of Signal: https://gagliardoni.net/#20260224_confer

#ai #signal #confer #privacy #censorship #surveillance #china #eu #politics #openai #gemini #chatgpt #deepseek #qwen

The Netherlands: the police quietly stop using CAS (the Crime Anticipation System), a country-wide predictive policing system.

‘Ten years ago, The Netherlands introduced a national police system that used data and algorithms to predict crime rates in neighbourhoods. It never worked properly, and warnings about bias had been raised for years.’

‘Police quietly dropped algorithm that was supposed to predict the likelihood of crime in neighbourhoods’

We have a right to be presumed innocent. Not predicted guilty.

AI that claims to 'predict' crime will only replicate the historic discrimination and abuse already experienced by over-policed communities.

Sign the petition to ban it in the UK ⬇️

https://you.38degrees.org.uk/petitions/ban-crime-predicting-police-tech

#SafetyNotSurveillance #precrime #AI #policing #automatedinjustice #ukpolitics #ukpol

So-called 'crime predicting' tech automates racism in the UK criminal justice system.

Even the police have admitted that it's biased, with it being based on flawed police data 🤷

We need to stop throwing good money after bad.

We need an outright ban on its use.

#SafetyNotSurveillance #precrime #AI #policing #automatedinjustice #ukpolitics #ukpol

Firefox 148.0 arrives with AI controls https://www.gamingonlinux.com/2026/02/firefox-148-0-arrives-with-ai-controls/

AWS outages caused by its AI agent: Amazon shifts the blame onto its employees

https://next.ink/225523/pannes-chez-aws-dues-a-son-agent-ia-amazon-se-defausse-sur-ses-employes/

Several AWS outages are linked to its engineers' internal use of the company's own AI agents. The company, which launched its Kiro agent promising that it went “beyond vibe coding”, is blaming its employees, who supposedly let its AI run unchecked.

***

Even better than the #BFMTV interns

#AWS #AI #IA #Kiro #Amazon #GAFAM

"...But now, more than ever, it has become clear that Taiwan is critical to America’s economic survival, especially as artificial intelligence — which is built using chips made in Taiwan — drives the U.S. stock market and fuels economic growth..."

#China knows this. #Taiwan knows this. The #unitedstates knows this. The #techbros know this. You should too. Can't vibe out of that, kids. Single point of failure.

Gifted link.

United States: “QuitGPT”, the campaign to cancel your ChatGPT subscription, is gaining momentum

https://www.courrierinternational.com/article/etats-unis-quitgpt-la-campagne-pour-resilier-son-abonnement-a-chatgpt-prend-de-l-ampleur_240494

Launched on Reddit, an initiative encouraging ChatGPT users to cancel their subscription to this artificial intelligence tool is gaining momentum. The American outlet “MIT Technology Review” describes the motivations of those joining the movement, mostly to oppose Trump's policies.

#QuitGPT #ChatGPT #Reddit #AI #IA #generativeAI #openai #mit

The European Parliament blocks access to generative AI tools on its devices

https://next.ink/brief_article/le-parlement-europeen-bloque-lacces-aux-outils-dia-generative-sur-ses-appareils/

For cybersecurity and data protection reasons, the European Parliament has blocked the generative AI features on the devices it provides to MEPs and their staff.

Southern California air board rejected pollution rules after AI-generated flood of comments

—> automated astroturfing by fossil fuel interests

—> the death of digital public consultation (as currently organised?) is an obvious consequence of LLMs. I wonder when governments will figure this out

#AI #Government #Democracy #LLM #Politics #Climate #Environment #FossilFuel

The dangerous leftists of Goldman Sachs say so: “#AI Added ‘Basically Zero’ to US Economic Growth Last Year” 🍿

Oh, for heaven's sake... -_- #ai

https://xcancel.com/IntCyberDigest/status/2025972175799271508

cc @martin @Agar

#Cheh ! 🤭

"Meta's director of AI safety has her inbox accidentally wiped by agentic AI"

https://www.404media.co/meta-director-of-ai-safety-allows-ai-agent-to-accidentally-delete-her-inbox/

A good argument, when pro-LLM people accuse anti-LLM people of being against technology, is that anti-AI people are, by definition, more tech-savvy than pro-LLM people.

It's like AI "artists" telling people who use oil paint, acrylic, pastels, charcoal, wax, gouache, watercolors, graphite, silver point, digital etc. they're against "artistic techniques".

A stock-market death spiral is hitting everything AI-related: fears of massive job losses and fruitless investments wipe out nearly $1.5 trillion in market capitalization

https://www.developpez.net/forums/d2152703-20/general-developpement/algorithme-mathematiques/intelligence-artificielle/fuite-courriel-revele-projet-dystopique-base-fonctionnalite-search-party-cameras-ring/#post12114598

AI takeover. Today's cartoon by @royaards. More cartoons: https://www.cartoonmovement.com/search?query=&sort=created&order=desc

RE: https://mastodon.design/@Saint_loup/116115218815277341

Sam Altman, head of OpenAI, downplays the pharaonic amounts of energy needed to train his generative AI models by retorting that "training a human takes 20 years".

What a fine #GenAI tech mindset. And you, when are you quitting?

https://quitgpt.org/

Not The Onion

‘Training A Human Takes 20 Years Of Food’: Sam Altman On How Much Power AI Consumes

Looks like #perplexityai subscribers got a #baitandswitch

https://www.makeuseof.com/bought-annual-perplexity-subscription-lied/

The Altman Paradox: Expansion framed as substitution.

Sam Altman said a mouthful when he chose to compare human energy use and #AI energy use.

The core issue is that AI energy usage is additive, not substitutive.

Good #marketing rhetoric, but it does not pass any level of scrutiny humanity is capable of. 😂

*** International Data Protection Authorities Issue Joint Statement on Privacy Risks of AI-Generated Imagery ***

The EDPS is among the 61 signatories of a Joint Statement on AI-Generated Imagery issued by data protection authorities from the Global Privacy Assembly.

The statement responds to growing concerns about AI systems that can create highly realistic images and videos of identifiable individuals without their knowledge or consent. A key focus is the increasing risk of harm to children, underscoring the urgent need for responsible AI development and strong privacy safeguards.

As AI capabilities evolve, so must our global approach to protecting individuals’ rights and dignity.

Read the Joint Statement https://link.europa.eu/vrvR9X

The AI shit show goes on…

Pinterest Is Drowning in a Sea of AI Slop and Auto-Moderation

https://www.404media.co/pinterest-is-drowning-in-a-sea-of-ai-slop-and-auto-moderation

#pinterest #tech #technology #BigTech #AI #ArtificialIntelligence #LLM #LLMs #ML #MachineLearning #GenAI #generativeAI #AISlop #Fuck_AI #Microsoft #copilot #Meta #Google #NVIDIA #gemini #OpenAI #ChatGPT #anthropic #claude

RE: https://stefanbohacek.online/@stefan/116117369092128169

I think one of the fundamental issues of everyday AI is that even if it works perfectly and doesn't take all our water, it's self limiting in that AI is only cool if it's doing things you can't do. At a certain point, AI runs into the trap of making easy things hard and hard things easy.

Humans have a great propensity to learn and recalibrate without even realizing we're doing so. Most of the things we encounter on a daily basis already lean toward the easy things. As the skill gap shrinks even more, the balance will tip toward AI wanting to help with things we don't need help with.

There's a paradox in asking for help with small things: it often takes more effort to ask for help than to do it yourself. Whatever cognitive savings someone may initially gain from using an #AI agent will eventually invert as they run into that paradox of asking for help.

When you know what you want and how to do it, anything that tries to help becomes Clippy.

"A.I. is clearly not technology that is being universally encouraged as inevitable. Corporations often report that, so far, it does not seem to do much."

A friendly reminder that anytime you need you can take a refreshing musical trip with this 20-minute piece I made 6 years ago and arrive at the other end of the galaxy. Enjoy. Oh, and don't forget your towel. Passport won't be necessary.

https://www.youtube.com/watch?v=B_ybaQxbPrw

You can thank me later and buy it on Bandcamp:

https://unfa.bandcamp.com/track/singularity

#music #drumandbass #dnb #ambient #chillout #breakbeat #chillstep #atmospheric #bass #acid #reesee #synthesizers #MikePatton #ElonMusk #singularity #AI

Another opensource hero going through a full psychotic meltdown thx to AI.

It's so sad to witness this, but even sadder how normalized it has become.

This is a farm of 150 TikTok accounts that churn out AI-generated content

AI agents develop scripts, handle editing, and upload videos to dozens of accounts.

A real person just monitors the software, earning tens of thousands of dollars a month from the content.

#AureFreePress #News #press #headline #ai #TikTok

Handing off hands on fundamentals.

Nerd Nostalgia and Fading Fundamentals

#Tech #AI

https://warnercrocker.com/2026/02/21/nerd-nostalgia-and-fading-fundamentals/

Linus Torvalds — Talks about AI Hype, GPU Power, and Linux’s Future

Here Linus Torvalds speaks in a calm, relaxed way about the LLM hype and how it affects kernel development and open source, and about how recent hardware such as GPUs and APUs from companies like AMD has changed the Linux kernel's role.

https://www.youtube.com/watch?v=NjGHrDnPxwI

#programming #LLM #AI #slop #kernel #configure #make #assembler #linker #makeInstall #Linux #openSource #technology #mathematics #linear #algebra #linearAlgebra

Heard in the ether:

> For a few days now I haven't been able to make #iDEAL / #Wero transactions with #Librewolf / #Firefox. Are you having that problem too?

Thank you for your query.

#ChrietTitulaerAI predicted the response of the future:

> Oh wait, then I'll just have the Prompt Engineer on the single-person project get the #AI to vibe-code the Wero integration in a slightly different way. I think a little hallucination crept onto the line or something. The 100 million lines of code do take about half a day to regenerate. We also need much bigger terawatt data centers in the EU, eh? Those bureaucrats over there, sigh.

RE: https://mstdn.ca/@drikanis/116107120926277506

I'd like to comment on the common "AI is just a tool" thing: I'm a woodworker by training & that means a lot of machines - but almost every craftsperson knows how to do their job with hand tools, or "lesser" machines.

Similarly, a writer can write without a text editor - just as well, only slower.

If loss of a tool = loss of your skill & knowledge, then that tool isn't an asset, it's a liability. You're signing over your ability to do business to whoever sells & maintains that tool.

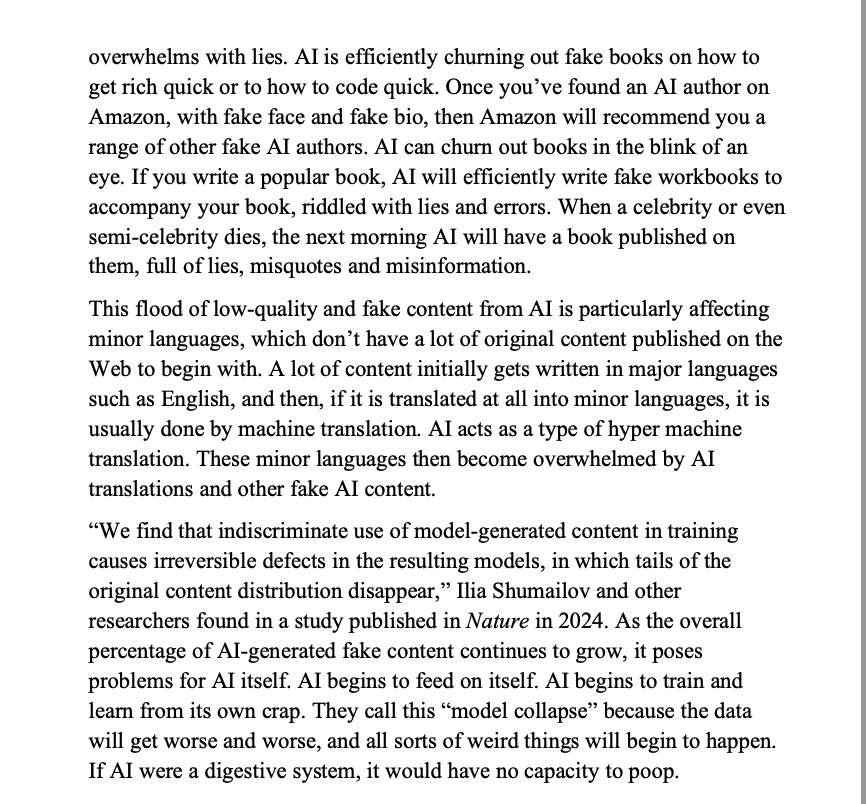

“This flood of low-quality and fake content from AI is particularly affecting minor languages. AI acts as a type of hyper machine translation. These minor languages then become overwhelmed by AI translations and other fake AI content.”

Reading this with Danish-speaking eyes, I realised that this problem had never occurred to me. Hvor dejligt (how lovely). A whole new consequence of contemporary AI to dislike.

From '99th Day: A Warning About Technology' by Gerry McGovern

This is a really good opinion piece by an ex-Amazon L7 manager (senior manager, just below Director) on how #AI is a smokescreen that hides the real reason #Amazon has been shedding workforce: their gross over-hiring during the COVID lockdowns, and a growing culture of blame-shifting politics; and how the dysfunction led her to quit.

While I disagree with some of the vlogger’s conclusions, the whole piece generally rings true to me.

Acting ethically in an imperfect world

Life is complicated. Regardless of what your beliefs or politics or ethics are, the way that we set up our society and economy will often force you to act against them: You might not want to fly somewhere but your employer will not accept another mode of transportation, you want to eat vegan but are at some point in a situation where the best you can do is a vegetarian option.

Sometimes our hand isn’t even being forced; we just lack the mental strength, or have other priorities. I could simply not use WhatsApp because it is owned by Meta, but my son’s daycare organizes everything through WhatsApp. Do I really want to force my beliefs on all those very busy parents and caretakers, or should I just bite the bullet and use the tool that seems to work for everyone, even though it’s not perfect?

There are no one-size-fits-all solutions for this. Sometimes a belief or ethic you hold is so integral to you that you will not move. Sometimes it is held loosely enough that you can let go of it under certain conditions. A multitude of factors and thoughts go into those kinds of decisions, and at some point you just gotta make a call based on what’s in front of you and your priorities.

What I am saying is: we are all doing things we know are not ideal, things that are morally questionable or do not align with our values. That’s life. I, for example, know that eating meat is problematic for ethical and ecological reasons, yet I still do it sometimes. I reduce it as much as I can, but I am far from perfect. That is just one of the many examples of my actions not being perfectly and purely aligned with my beliefs.

And I am 100% sure every reader will have similar experiences. We are imperfect and messy beings. In the end, all you can do is try to make good decisions based on your values, learn from your actions and ideally do better, or at least understand what forces got you to act against your values.

Cory Doctorow, probably one of the most influential writers on digital technology and culture, celebrated the 6th anniversary of his personal blog Pluralistic. Congratulations! Cory is quite the phenomenon: I know nobody with his volume of output and his consistency of publication. It is scary just how consistently he writes and publishes while also churning out books. I admire his work ethic tremendously.

But one thing in his celebratory post rubbed me the wrong way, and I think it’s worth pointing out. Not because of this one specific case, but because it highlights a problematic way of thinking, common in current tech discourse, that stands in the way of us actually improving the world.

So Cory outlines the process by which he publishes to his blog (and then pushes the same writing out to other places). He describes how one QA step in that process is piping his writing through an LLM (using the Ollama software; it’s unclear which open-weight LLM he uses) to check for typos and small grammar mistakes. He then points out how some readers might find that problematic:

“Doubtless some of you are affronted by my modest use of an LLM. You think that LLMs are “fruits of the poisoned tree” and must be eschewed because they are saturated with the sin of their origins. I think this is a very bad take, the kind of rathole that purity culture always ends up in.”

Using LLMs isn’t always popular with the cool crowd, and Cory knows that. He wants to defend his (quite modest) use, which I understand: nobody likes having their problematic behavior pointed out to them. But as outlined: life’s complicated. Cory could just have said “I know there are many critiques of LLMs, but right now this is the best way for me to enable my work; I try to limit the problematic aspects by using a small open-weight model and checking the results in detail.” and moved on. But he needed to take a stand. And that stand led him into the problematic train of thought I want to point out here, because many, many people listen to him and basically take his word as gospel. With great power comes great responsibility, and so on.

The whole argument is based on a strawman. Let’s look at Cory’s words:

“Let’s start with some context. If you don’t want to use technology that was created under immoral circumstances or that sprang from an immoral mind, then you are totally fucked. I mean, all the way down to the silicon chips in your device, which can never be fully disentangled from the odious, paranoid racist William Shockley, who won the Nobel Prize for co-inventing the silicon transistor”

Cory is right to point out that almost any technology we have has been touched by problematic figures: racists, fascists, sexists, rapists, you name it. Anything you touch will have some research, engineering or product work in it by a person you despise.

The strawman is his claim that people who criticize LLM usage do so for some form of absolutist reason: that they hold a fully binary view of the world, separated into “acceptable, pure things” and “garbage”. This is of course false, because those critics are using computers, and using warm water that’s probably heated with fossil fuels, etc.

He attacks a ridiculous made-up figure to deflect from specific criticism of LLM use (criticism that many probably wouldn’t even apply that strongly to his use case). But that’s not where criticism of LLMs comes from: it is mostly specific, focussing on the material properties of these systems, their production and their use.

Cory continues:

“Refusing to use a technology because the people who developed it were indefensible creeps is a self-owning dead-end. You know what’s better than refusing to use a technology because you hate its creators? Seizing that technology and making it your own. Don’t like the fact that a convicted monopolist has a death-grip on networking? Steal its protocol, release a free software version of it, and leave it in your dust:”

Here again Cory misrepresents the LLM critics’ argument: Sam Altman is a scam artist and habitual liar, but that’s not among the first 10 to 20 reasons people criticise OpenAI’s products. Sure, basically every leading figure in the “AI” space seems to be unpleasant at best, but that’s true for most of tech, TBH. People criticise LLMs for their structural properties and their material impacts; for the way they make it harder to learn and grow; for the way they make products worse while creating massive negative externalities in the form of emissions, water use and e-waste; for the way these systems can only be built by taking every piece of data, regardless of whether the authors consent or even explicitly refuse; for how the training needs ungodly amounts of harmful, exploitative labor, done mostly by people in countries of the global majority; and for how it all materially harms the commons.

Even if OpenAI were run by decent, ethical, friendly, trustworthy people (who of course would then not work on the products OpenAI makes, but this is just a thought experiment), its products would need to be criticized for what they are and what they do. It’s really not about the few dudes running the companies.

Cory misrepresents the arguments (or rather, basically hides them) so that he doesn’t have to face any material criticism, and turns them into “you just don’t like these people”, which frames the criticism as emotional rather than rational. As if it were about not liking a bunch of rich men.

He then goes into how the path forward is to “steal the protocol”. His following paragraph goes into detail:

“That’s how we make good tech: not by insisting that all its inputs be free from sin, but by purging that wickedness by liberating the technology from its monstrous forebears and making free and open versions of it”

And here we come to the core of the problematic argument, because Cory implicitly argues that technology is neutral and that one can simply change its meaning and effect through usage. But as Langdon Winner argues in his famous essay “Do Artifacts Have Politics?”, artifacts have built-in politics deriving from their structure. A famous example is the nuclear power plant: because of the dangers these plants pose and their requirements for resources and security, nuclear power plants imply a certain form of political arrangement, based on having a strong security force or army and on a way to force these facilities (and the facilities that store their waste) upon communities, potentially against their will.

Artifacts and technologies have certain logics built into their structure that either require certain arrangements around them or bring such arrangements about. The second aspect is often illustrated by how ships are organized: because ships are sometimes in dangerous situations where critical decisions need to be made quickly, the existence of ships implies a hierarchy of power relationships, with a captain having the final say, because democracy would at times be too slow. These politics are built into the artifact.

Once you understand this, you cannot take just any technology and “make it good”. Is a torture device “good” if the plans for building it are Creative Commons licensed? Do we need to answer the existence of the digital torment nexus by building an open source torment nexus? I’d argue we need to destroy it, regardless of what license it is released under.

That does not mean it is impossible to take certain technologies or artifacts and try to reframe them, to change their meaning. In some ways computers are one such example: they were first used by governments, banks and other corporations to reach their goals, but were then taken up and reframed as devices intended to support personal liberation. It’s a bit more complicated (for why, dive into the late David Golumbia’s “The Cultural Logic of Computation”), but let’s give that one to Cory. Sure, sometimes it is possible to take something originally built for nefarious purposes and find better uses for it. But is that true for everything? Very obviously not.

Let’s just look at the embedded politics of LLMs. In order to train a capable system you need data. Lots of it. AI companies keep buying books to scan them, and they download everything from every legal or illegal source, claiming “fair use” (a doctrine that only applies in the US, by the way) or that “scraping is always okay”. Capable LLMs require a logic of dominance and of disregarding the consent of the people producing the artifacts that are the raw material for the system. LLMs are based on extraction, exploitation and subjugation: to produce these systems, even if you release the end result as open weights for people to use at home, you need to take cultural works, personal writing etc. from people who not only did not consent but actively reject the notion. Their politics is violence. How does one “liberate” that? What’s the case for open source violence?

He uses a so-called “open source LLM”, and that is very much how he presents his values, but open-source LLMs do not really exist. You can download some weights, but you cannot understand what went into them or really change or reproduce them. Open source AI is just marketing and openwashing.

Cory shows his libertarian leanings here: if everything is somehow “free and open”, then we have won. But “free and open” in this context usually means that “certain privileged groups have easy access to it and are not limited in what they can do with it”. That’s one of the core problems with the whole “open source” movement: it reduces all struggle to whether one can get one’s hands on the tools and whether there are any restrictions on using them.

This also shines through in Cory arguing that we need to “liberate” technology. What a strange idea: technology doesn’t need liberation, people do. Technologies are tools, not what we actually care about. Sure, sometimes technologies can play a role in liberating people, but just as often “freeing” a technology does quite the opposite to people. Ask the women who have had massive amounts of nonconsensual sexualized images and videos generated of them whether the “liberation” of stochastic image generators is liberating them. Technology doesn’t need to be free. It cannot be free, because freedom as a concept applies to people.

And freedom is not the only value we care about. Making everything “free” sounds cool, but who pays for that freedom? Who pays, for example, for the freedom that access to an open-weight LLM brings us? Our freedom as users rests on the exploitation of, and violence against, the people suffering from the data centers, the people labeling the data for training, and the folks gathering the resources NVIDIA needs to build chips. Freedom is not a zero-sum game, but a lot of the freedoms that wealthy people in the right (of whom I am one) enjoy stem from other people’s lack thereof.

“Purity culture is such an obvious trap, an artifact of the neoliberal ideology that insists that the solution to all our problems is to shop very carefully, thus reducing all politics to personal consumption choices:”